I spoke with Hallie Cotnam on CBC Ottawa Morning on 07-Aug-2022 about this issue.

On Friday, 08-Jul-2022, the Rogers network suffered a massive outage. Rogers is a major ISP and cellular provider in Canada. Just how massive might surprise anyone not living here. They have 35% of the national market share for mobile connections and 30% of all Canadian home internet connections.

On top of that, they have 2.25 million retail internet customers and another 7,000 enterprise customers.

Over a third of the country is online because of Rogers. Over a third of the country went dark for the entire day.

Much has been made of the outage (just check the references section at the end of this post) but when you wade through all of the opinions, it appears that the issue was the result of one mistake.

It’s the type of mistake that keeps network engineers and operations teams up at night. A simple misconfiguration that threads the wrong needle and is extremely difficult to roll back.

Cloudflare has a great summary of the issue as seen from the internet.

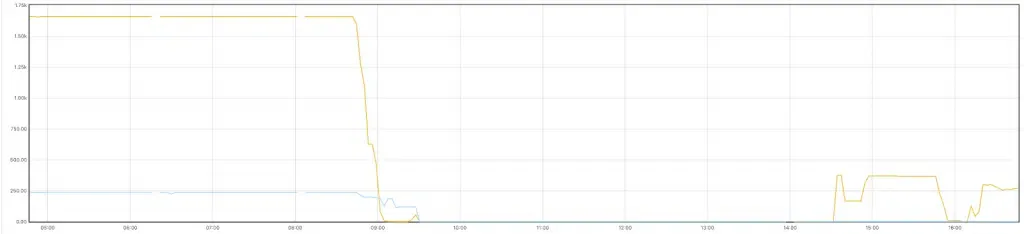

Cloudflare BGP data showing Rogers network drop off the internet on the day of the outage, 08-Jul-2022

👆 that big cliff? That’s not good.

Network Access

Most people will never see the inside of a data centre, including a lot of that network’s engineers. Most of the work is done remotely. That requires a secure access path into systems that can update the network resources in question.

Care to guess where simple mistakes escalate out of control?

If you said, “Remote access and update configurations?”, you win! …and by that, I mean we all lost on July 8th.

Someone, somewhere, made a simple mistake that apparently closed much-needed update pathways and took most of the network offline.

How? These types of changes usually have both technical and process guardrails in place but they aren’t infallible. Mistakes still make it to production. It happens…thankfully rarely.
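To make "technical guardrail" a bit more concrete, here is a minimal sketch of one common pattern: apply a change tentatively, check that the remote access path still works, and restore the previous configuration if it doesn't. This is an illustration only, not a description of Rogers' tooling. The function names, the config keys, and the health check are all hypothetical. Some network operating systems offer a confirm-or-roll-back commit feature in a similar spirit.

```python
from typing import Callable, Dict

Config = Dict[str, str]


def apply_with_rollback(
    current: Config,
    candidate: Config,
    still_reachable: Callable[[Config], bool],
) -> Config:
    """Return the config that should stay active after a change attempt.

    The candidate is kept only if the (hypothetical) health check confirms
    that the management path still works; otherwise the previous config
    is restored.
    """
    previous = dict(current)   # snapshot taken before the change
    active = dict(candidate)   # tentatively apply the change
    if still_reachable(active):
        return active          # change confirmed
    return previous            # automatic rollback


def management_path_ok(config: Config) -> bool:
    # Hypothetical check: the change must leave remote access enabled.
    return config.get("remote_access") == "enabled"


# Illustrative configs only; real device configs look nothing like this.
running = {"remote_access": "enabled", "route_filter": "on"}
bad_change = {"remote_access": "disabled", "route_filter": "off"}
good_change = {"remote_access": "enabled", "route_filter": "strict"}

after_bad = apply_with_rollback(running, bad_change, management_path_ok)
after_good = apply_with_rollback(running, good_change, management_path_ok)
```

In this toy version the bad change is rolled back and the good one sticks. The catch in real life is that the check and the rollback have to run somewhere that doesn't depend on the very path the change might break, and the check only catches the failures someone thought to test for.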

The good news? The root cause of the issue was probably located quickly.

The bad news? The issue had already taken enough of the network offline that bringing it back up presented its own, unique challenge.

This network outage lasted almost 17 hours, but all indications point to the original issue being resolved reasonably quickly, with the rest of the time spent unravelling the nightmare of legacy systems.

Rogers took a lot of heat for this outage. Their stock dropped 1.17% on the day. But while it’s easy to blame them, the reason the outage was so long was written in a thirty-year buildup of technical debt, business incentives, and the geographical challenges of the Canadian market.

Are There Any Takeaways?

Everyone impacted has called for change. The Government called Rogers, Bell, and others to the carpet to figure out how to prevent another outage this significant. Those efforts won’t drive any significant changes.

Canada is just too big and our population is too small to have a diverse set of telecommunications providers. That’s ok. We have reasonable—if expensive—coverage today. We need significantly better coverage in the Territories and some rural areas but most Canadians have access to reasonably fast internet.

Do we need change in this sector? Yes.

Lower costs would help. So would regulation that prevents the bundling of multiple services (discounts for more services from one provider), which forces Canadians to put all of their eggs in one basket. So would subsidized access to rural and northern areas.

But at the end of the day, this massive outage was caused by a mistake. A mistake that happened despite technical and process safeguards. Why? Because 💩 happens. 🤷

Thoughts On The Day

A Twitter thread from me on the day with my initial reactions:

as the @rogers outage rolls towards hour 18, the msg on their website keeps getting more empathetic

"catastrophic" isn't an exaggeration here

nationwide networks are complex. lots of opportunities for cascade faults that require rebuilds #nointernet #rogersoutage 🧵 pic.twitter.com/biCsycpr1E - Mark Nunnikhoven (@marknca) July 9, 2022

References

- Tweet from @CityNewsTO

- Tweet from @EliseGoodhoofd

- Tweet from @PnPCBC

- The Rogers outage disrupted services across Canada. A list of what was affected – The Globe and Mail

- Rogers’ nationwide outages starting to recover: company | CTV News

- NHL Draft Being Impacted by Massive Cell Phone Outage in Canada

- Rogers outage shows need for Plan B when wireless, internet services fail, analysts say | CBC News

- Massive Rogers outage affected Canadian phones, internet, ATMs, and debit cards – The Verge

- TVO | Current affairs, documentaries and education

- Cloudflare’s view of the Rogers Communications outage in Canada

- Rogers CEO apologizes for massive service outage, blames maintenance update | CBC News

- Rogers restores service for ‘vast majority’ of customers after massive outage – The Verge

- Tweet from @eastdakota

- Rogers network resuming after major outage hits millions of Canadians | Reuters

- Rogers outage: Here’s how it’s affecting services in Ottawa | CTV News

- Rogers customers grow increasingly frustrated on 3rd day without cell, internet service | CBC News

- Industry minister to meet with Rogers CEO after ‘unacceptable’ network outage | CBC News

- Industry Minister to meet with telecoms after ‘unacceptable’ Rogers outage

- Responding to the Rogers Outage: Time to Get Serious About Competition, Consumer Rights, and Communications Regulation – Michael Geist

- Ottawa announces it will require telecoms to provide backup for each other during outages following Rogers system failure

- ‘A crazy day’: Ottawa restaurants left reeling after Rogers outage | CBC News

- Rogers outage points to need for greater oversight of critical industry | CBC News

- CRTC ordering Rogers to explain in detail what caused massive network outage | CBC News

- Rogers promises investment to avoid future network outages | CBC News

- Rogers replaces chief technology officer in wake of nationwide outage – National | Globalnews.ca

- Rogers blames massive outage on error during network update | CBC News

- Rogers unable to switch customers to Bell, Telus, despite competing carrier offers

- How a coding error caused Rogers outage that left millions without service