This talk was delivered at AtlSecCon in Halifax, NS, on 10-Apr-2025

Abstract

When was the last time you felt like you had enough time in the day to get your work done? Are you exhausted by the never-ending firehose of security challenges you have to deal with each and every day?

In this session, we are not going to change that reality. Sorry, security work is continuous, but it doesn’t have to be overwhelming.

This session looks at the workflows around your security practice and how it interacts with the business. Security is a service business, but teams are rarely set up in a way to deliver that service successfully.

There’s a lot of history that contributes to the current state of security teams, but that history typically isn’t serving a purpose. More often than not, the way we’ve built out our work leads to delays, frustrated colleagues, and eventually teams that work around us instead of with us.

This isn’t a talk about simply getting “buy in” from other leaders. It’s about breaking down our security goals and learning from other types of teams and businesses and how they are set up.

You’ll learn about the hidden challenges that impede your work, structures and workflows that can accelerate security improvements, and how to build stronger relationships with the rest of your organization.

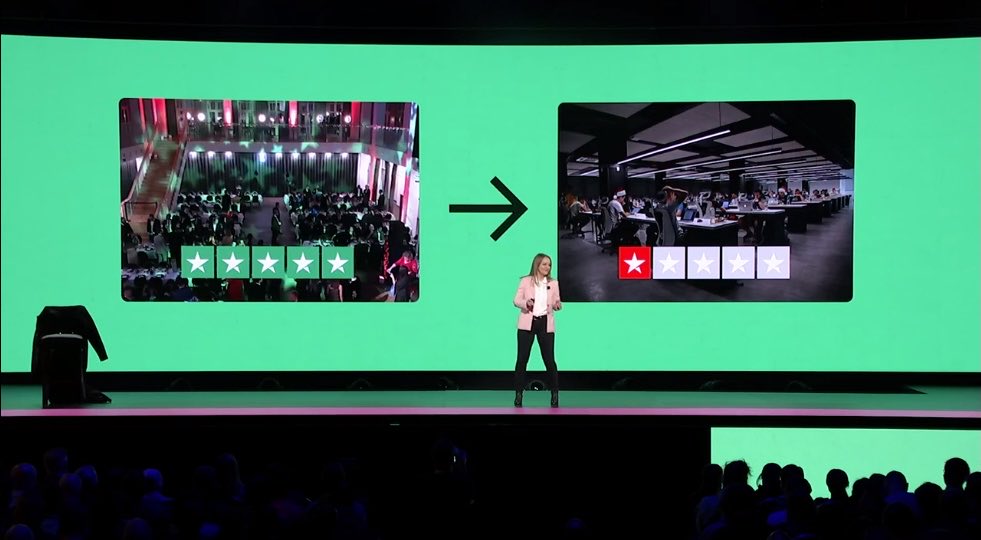

Are your customers happy?

I'm confident that most security professionals will answer this in one of three ways:

"I don't know."

"I don't think they are."

"No."

None of those are great answers to the question.

Do you have enough resources?

Nope.

Why are you like this?

...organizationally 😉

When was the last time you designed a process for your team?

No, I don't mean writing down a playbook (though you should be doing that). I mean working through the steps of a systematic effort in order to design a process that works for your team and your customers.

Have you ever done that?

The security team

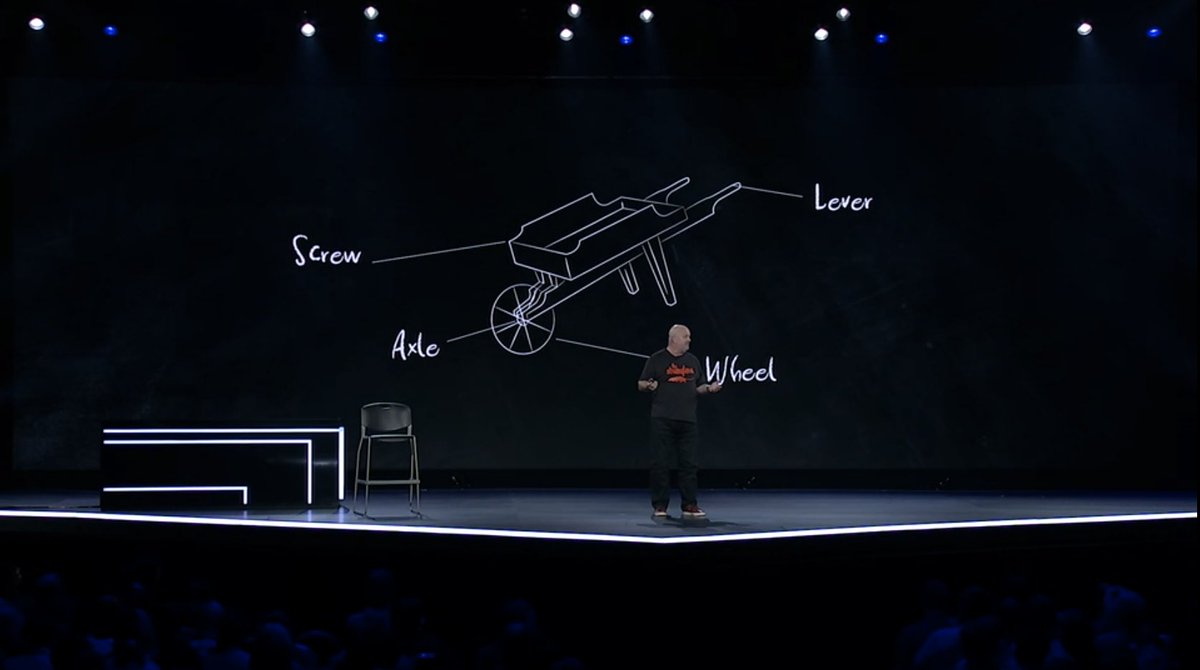

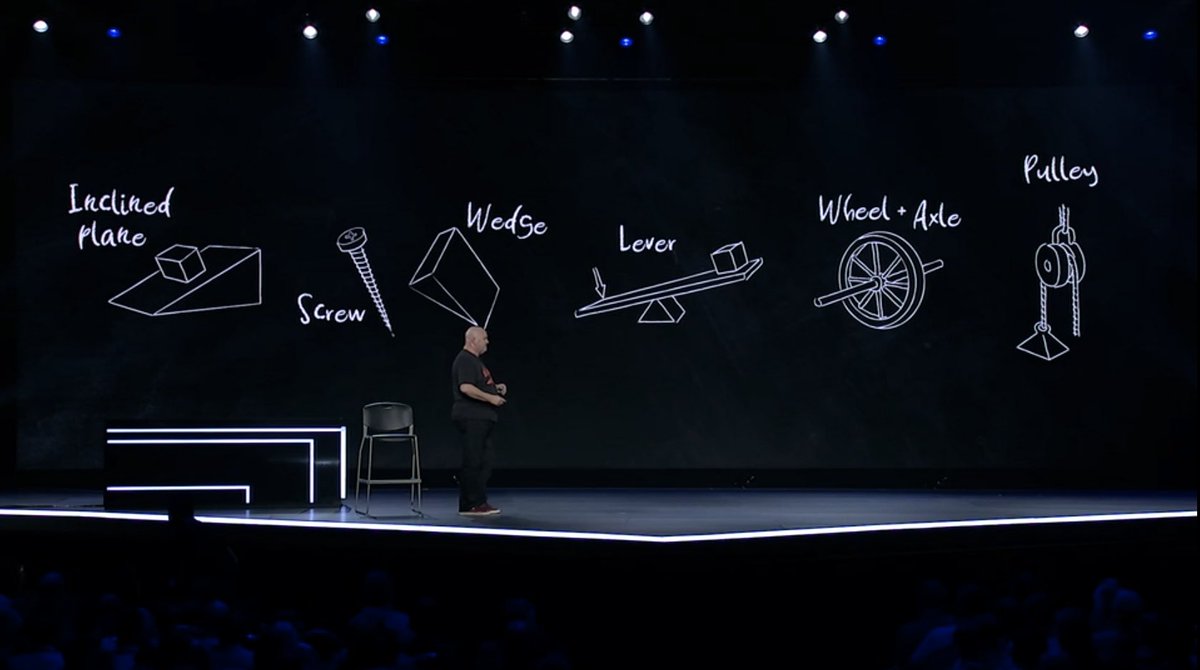

Let's start with first principles. There's always a reason why things end up in their current state and there's a lot we can learn from that history.

Why do most security teams organize the same way? Is that the best approach? Or just something we ended up with over time due to external factors?

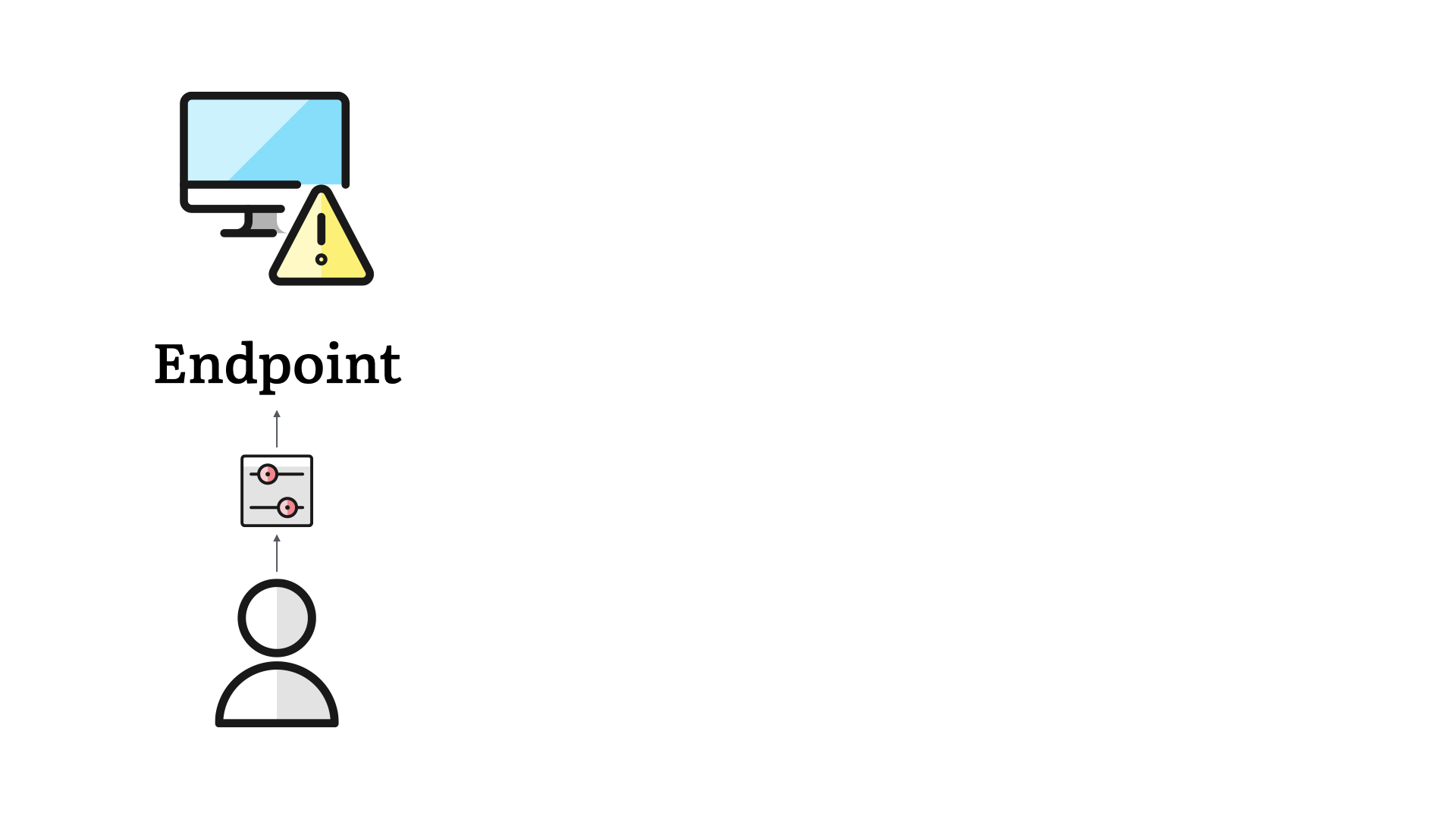

This all started with endpoints.

Acknowledging that there was risk with our desktops (yes, desktops), organizations started to have folks assigned to managing these systems.

Not like we do today, but the first steps were there. Organizing the OS and its updates, anti-virus software, and other steps to help protect the business.

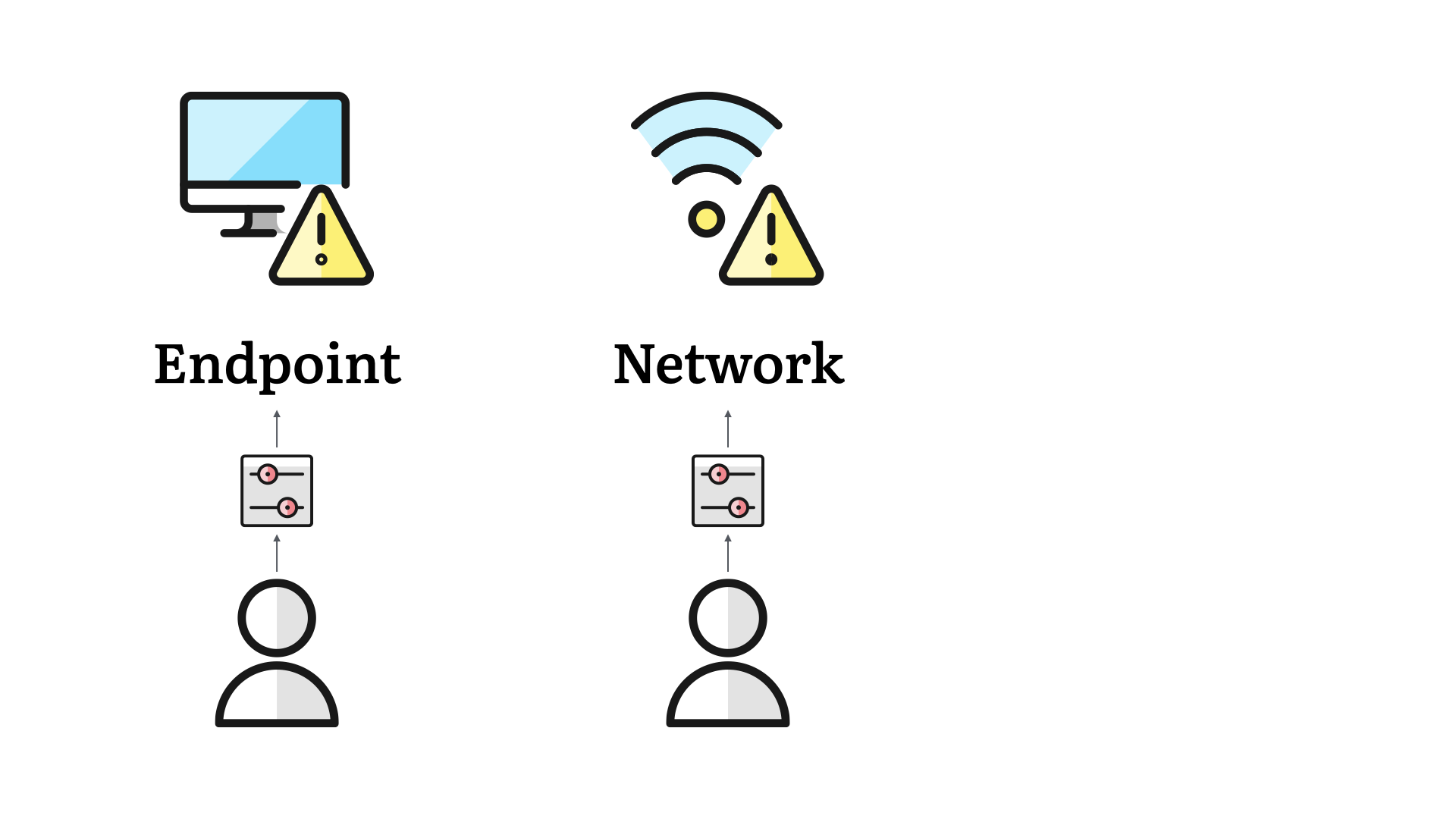

The real nucleus of what we know of as the security team came to be with network controls. Rolling out firewalls, then intrusion prevention, and other controls around the perimeter was enough work that dedicated teams were required.

No more (well, less) side-of-desk work. We now started to see teams responsible for the castle wall protecting the "inside" of the business.

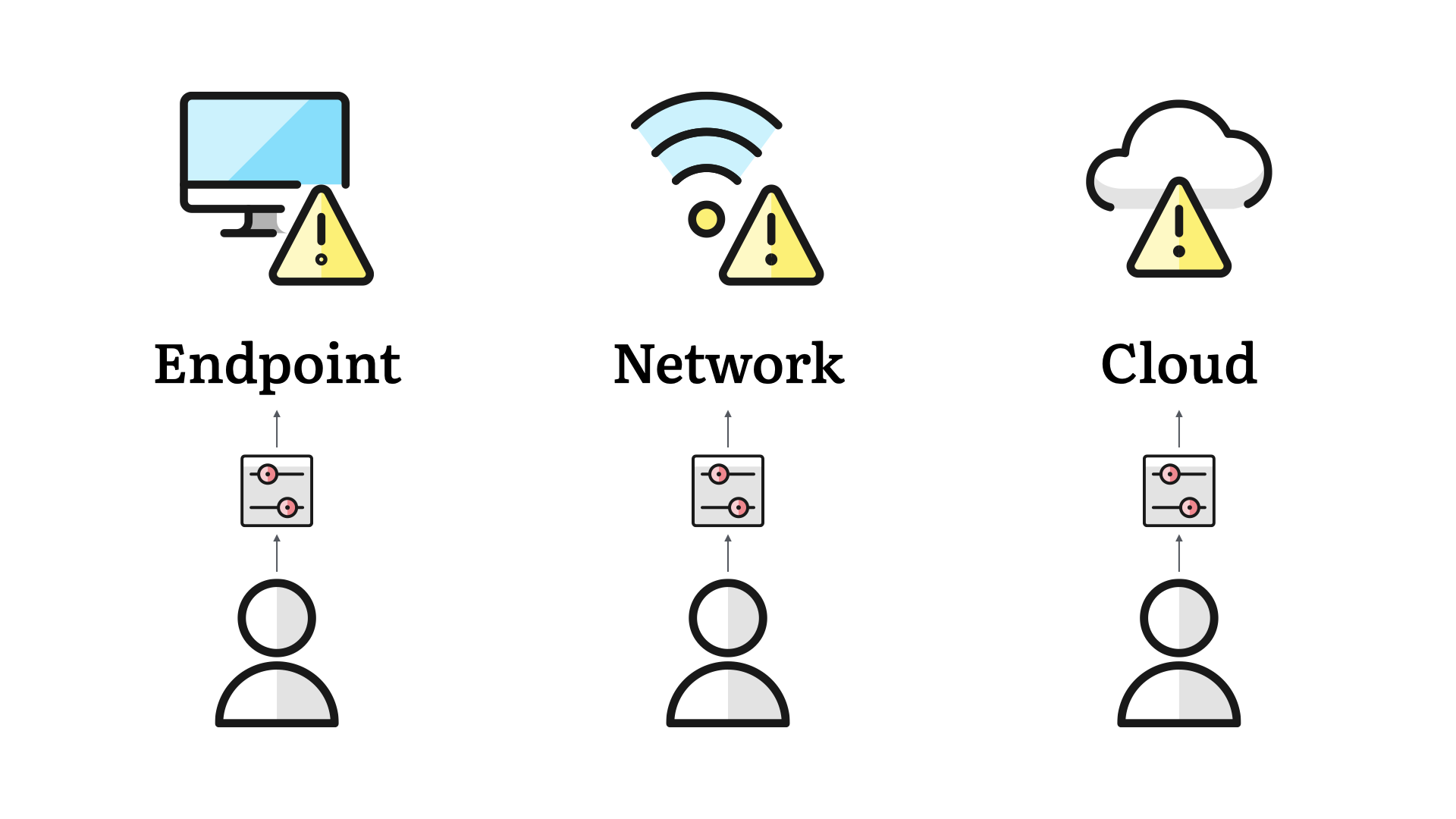

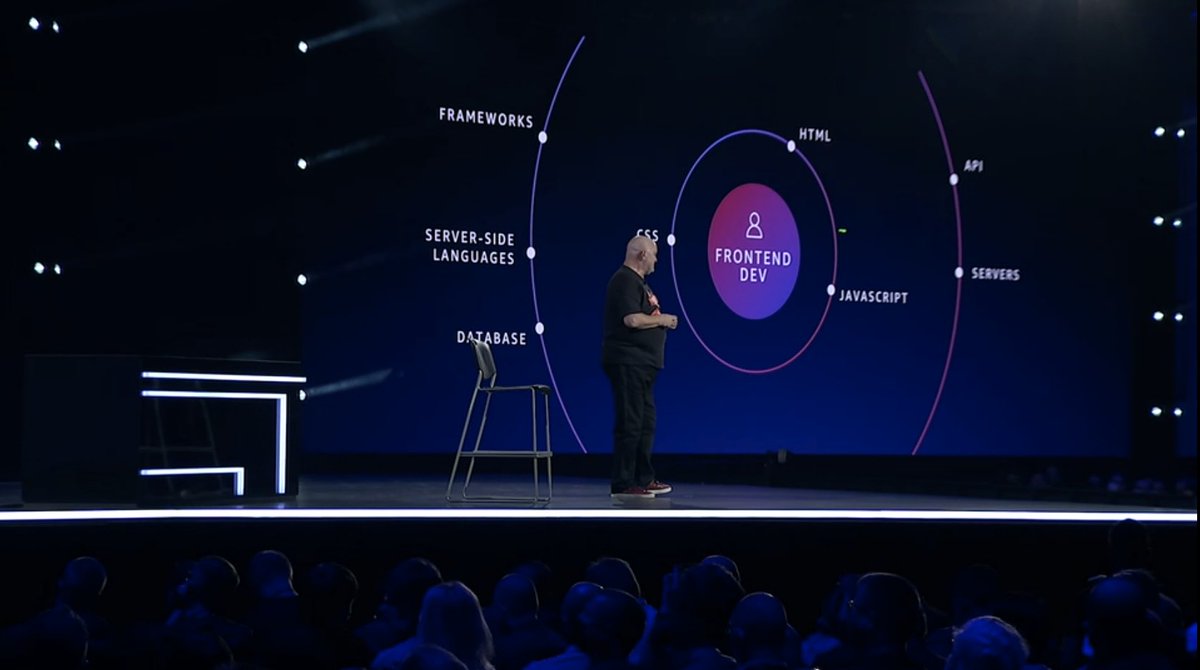

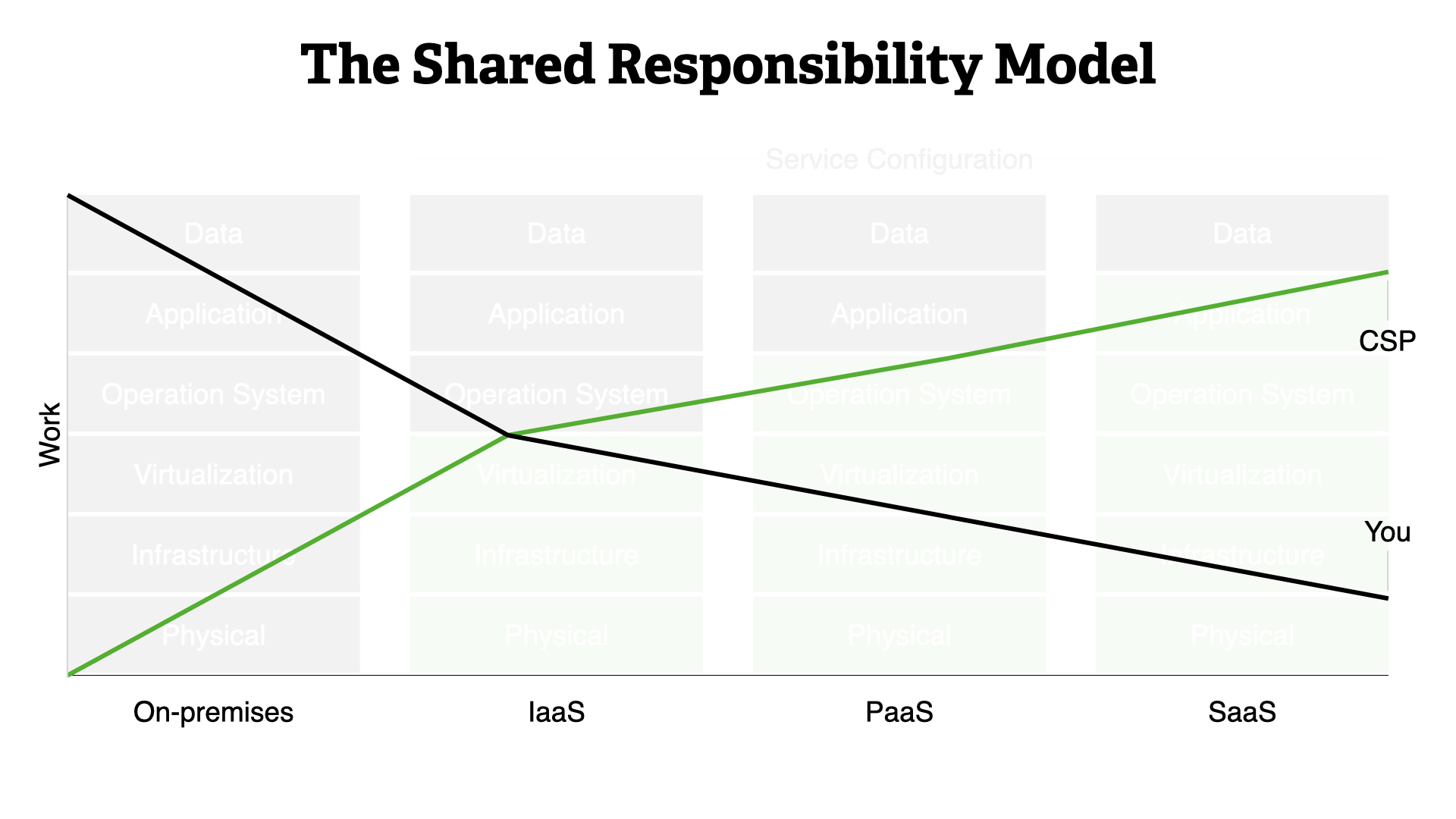

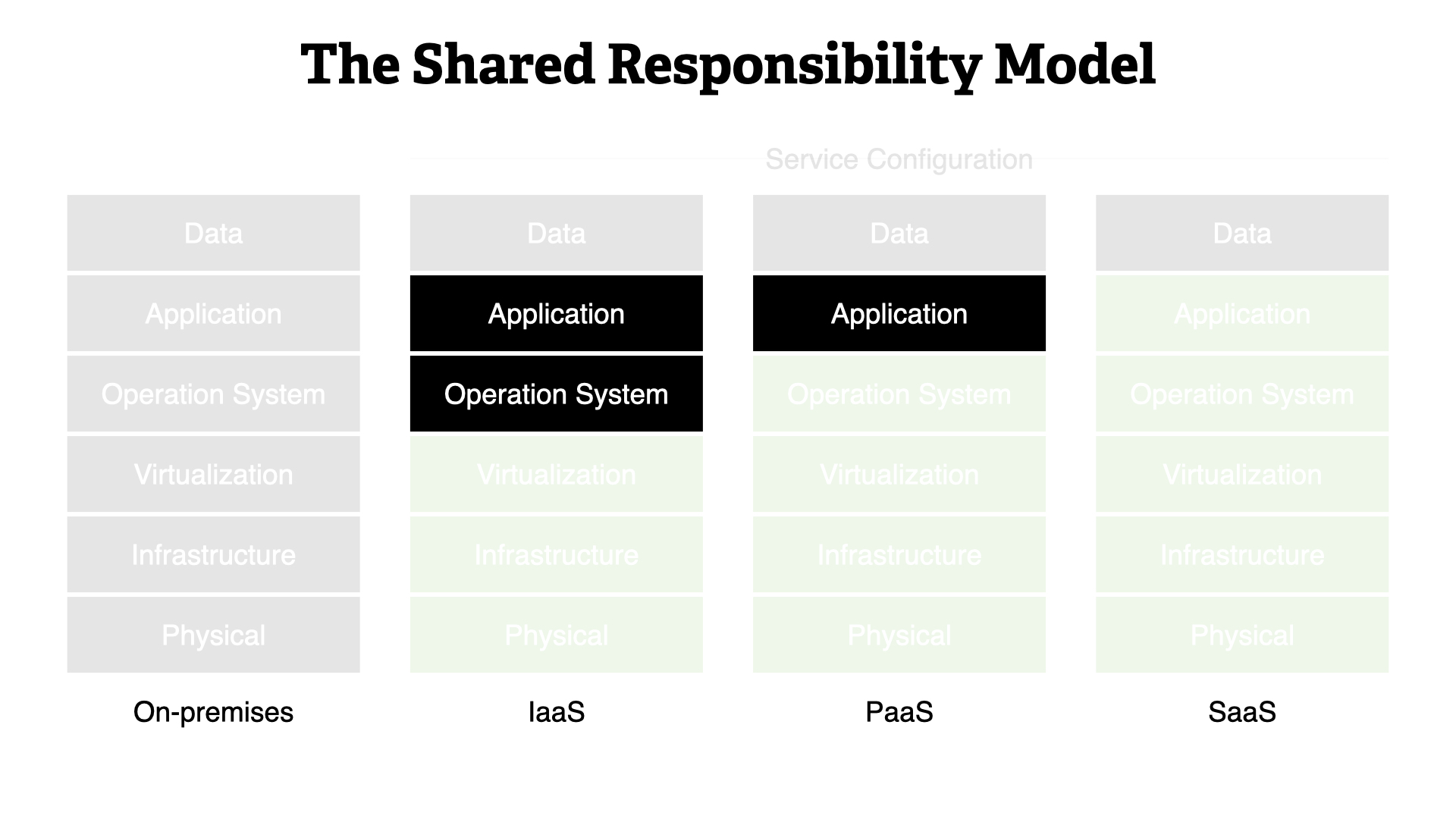

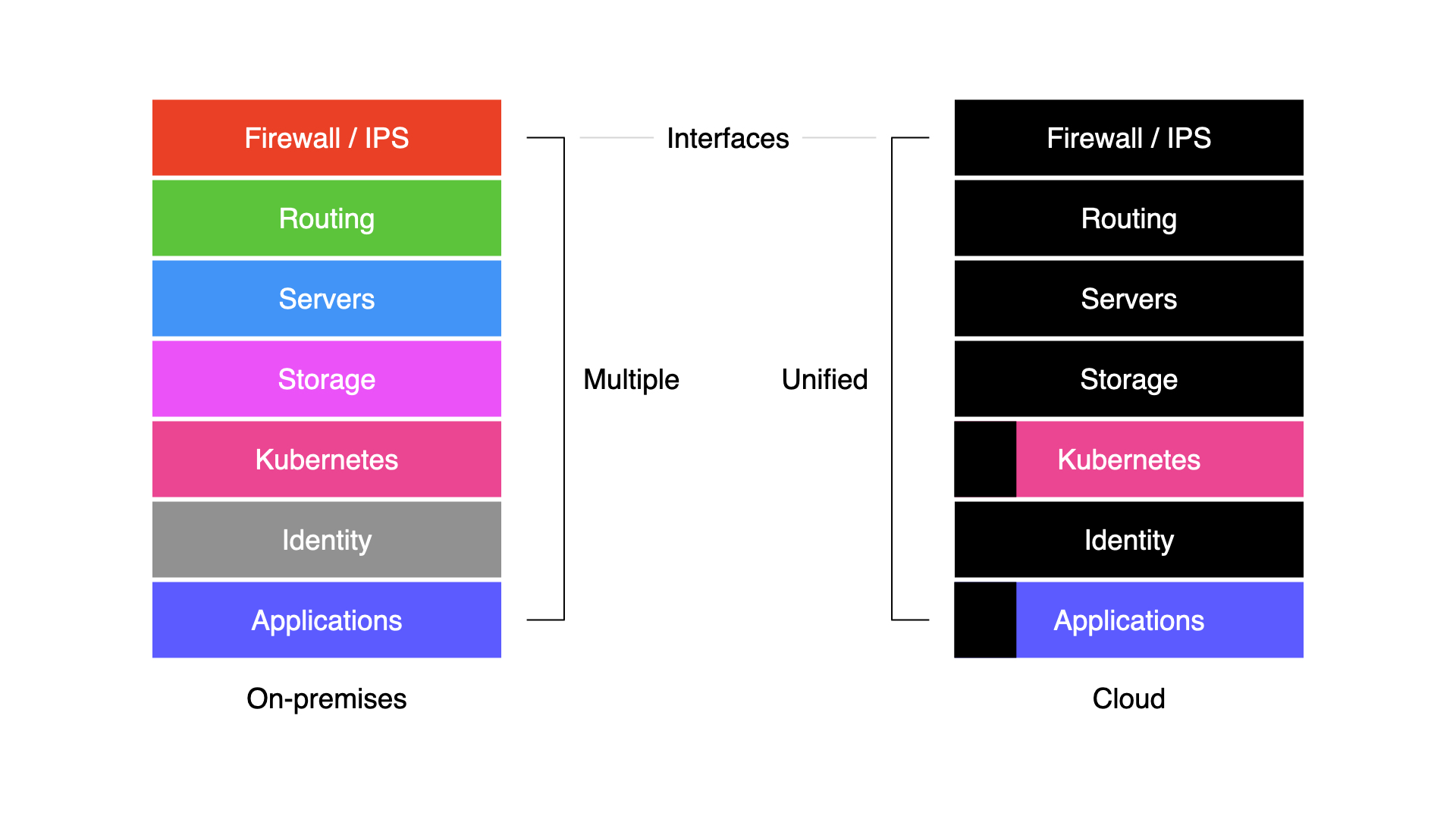

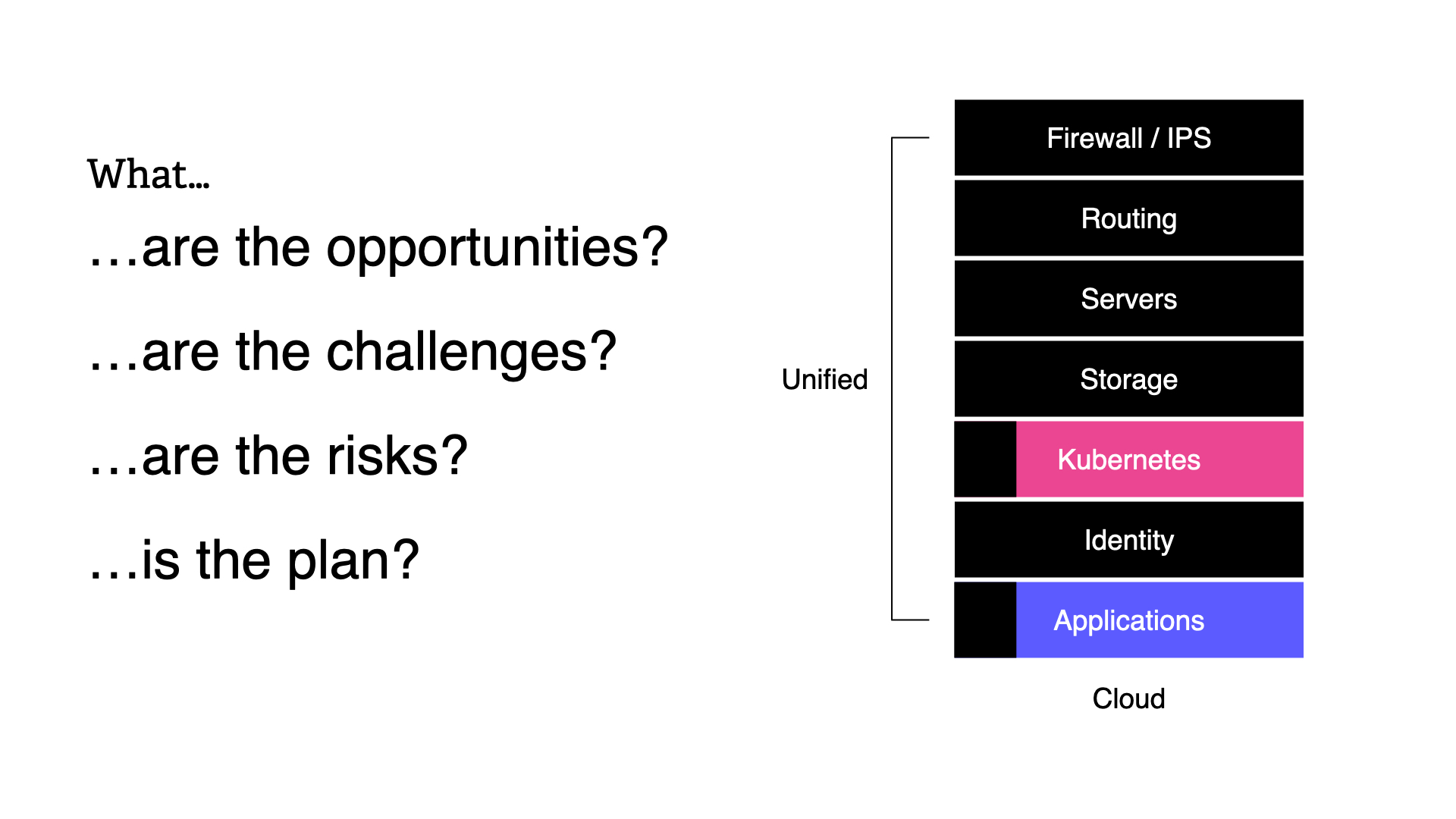

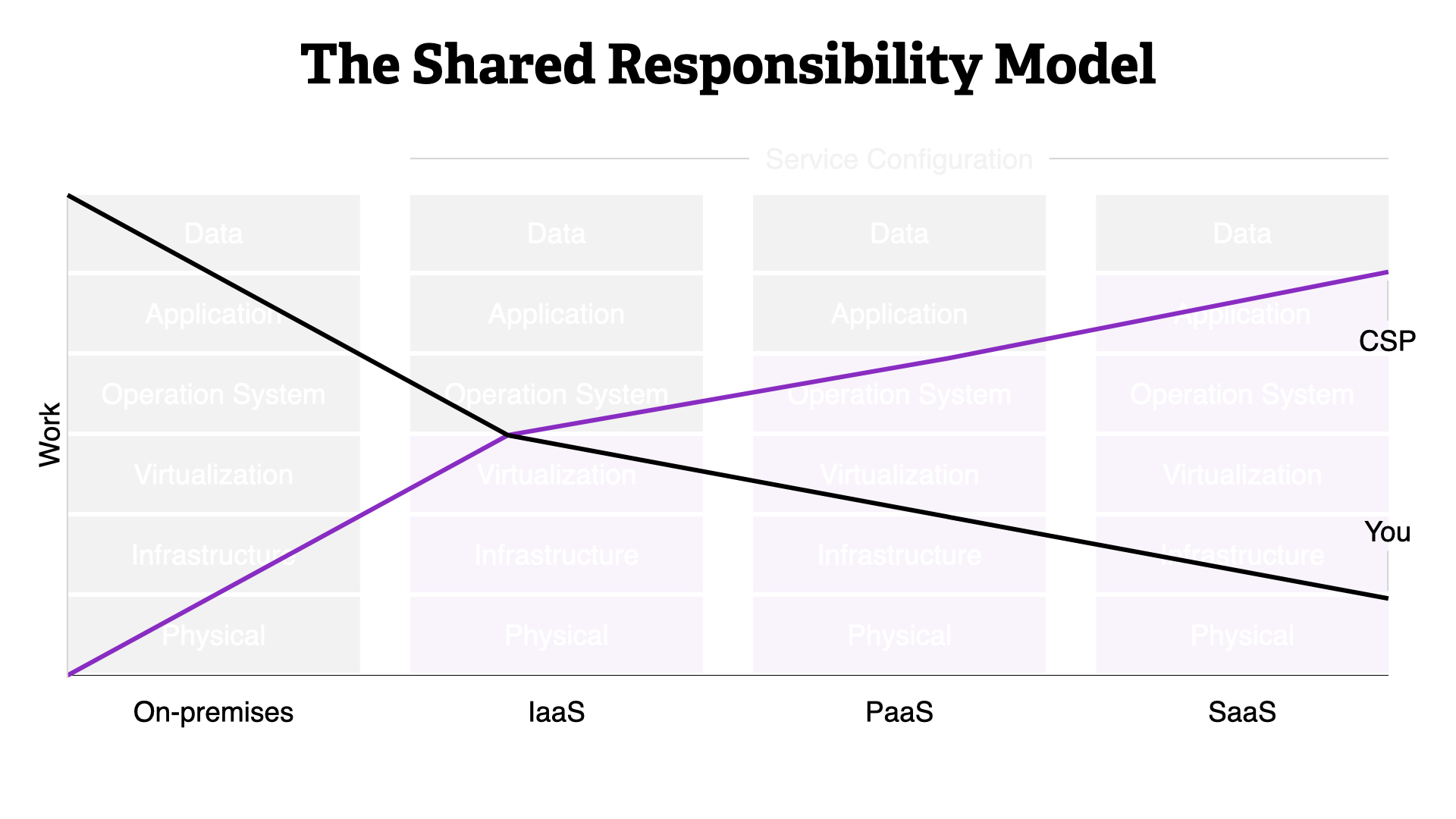

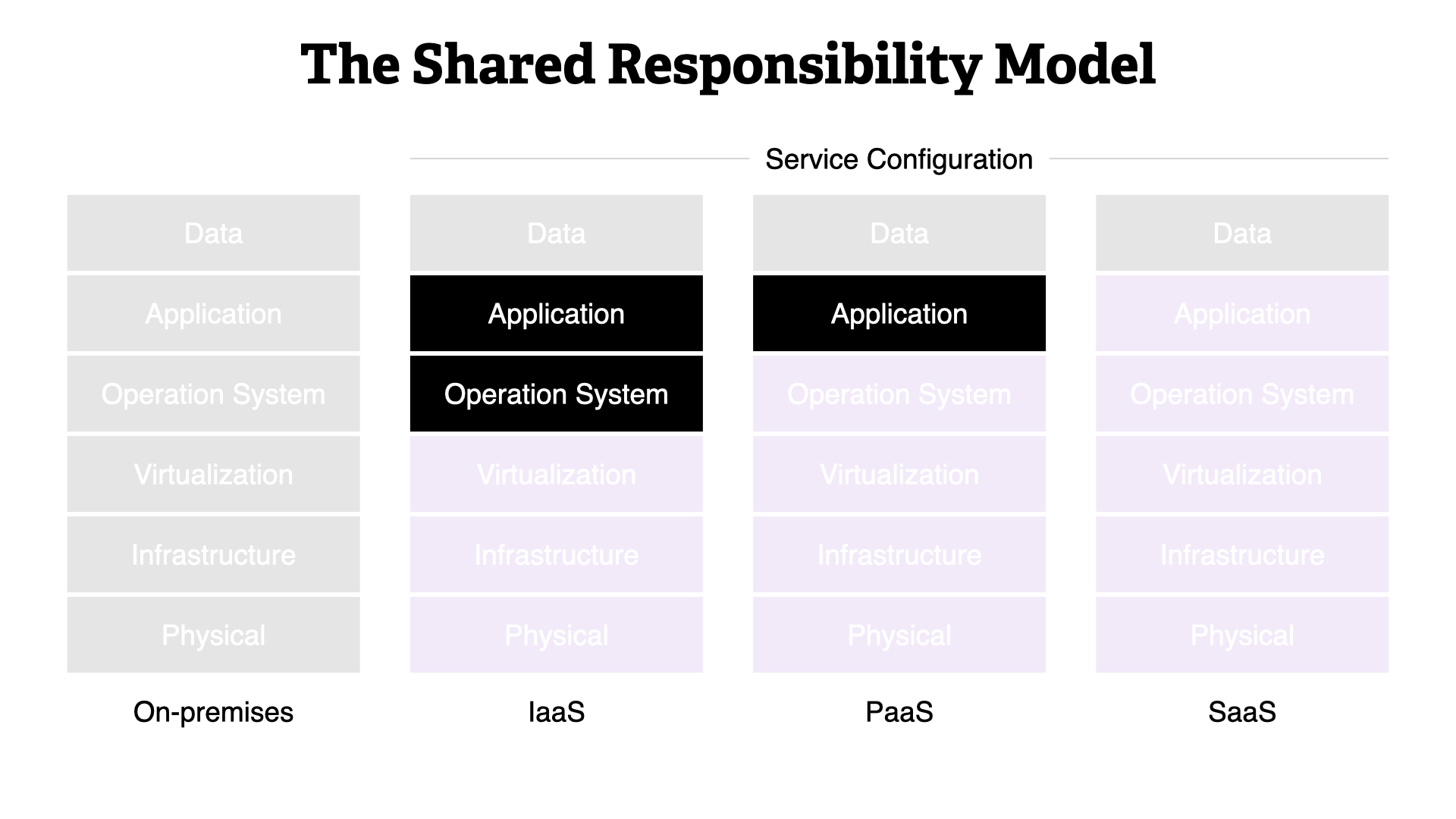

As connectivity expanded, we got closer to today. Teams are dealing with endpoint, network, and cloud controls.

While each of these areas contributes to defence in depth, we also approach them based on the security team's level of responsibility or influence.

Endpoint controls are still very much in the "OK, if it doesn't impact anything" bucket. Security teams tread lightly here, so as not to lose trust with the rest of the business.

Network controls are easier to roll out because they are typically entirely within the security team's purview, or at most involve a small handful of infrastructure teams.

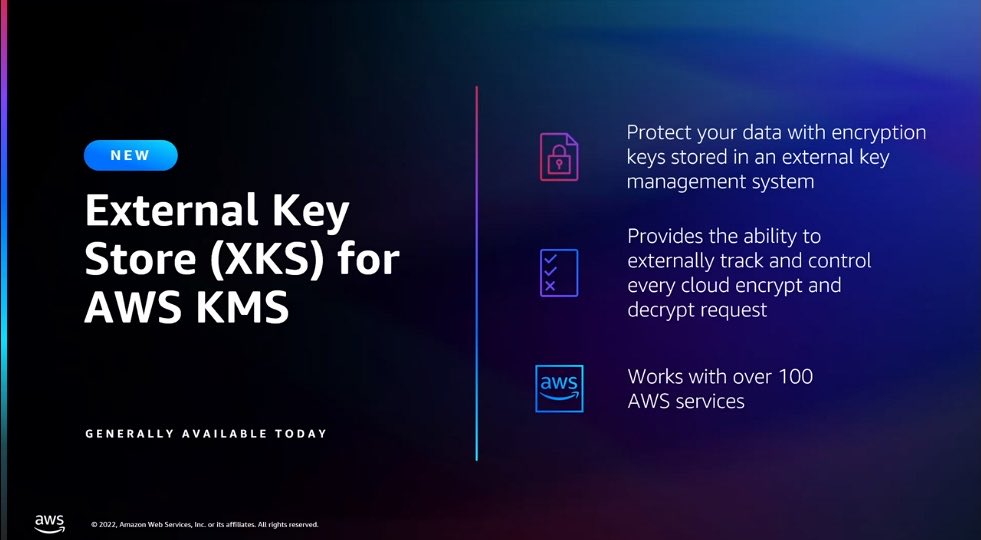

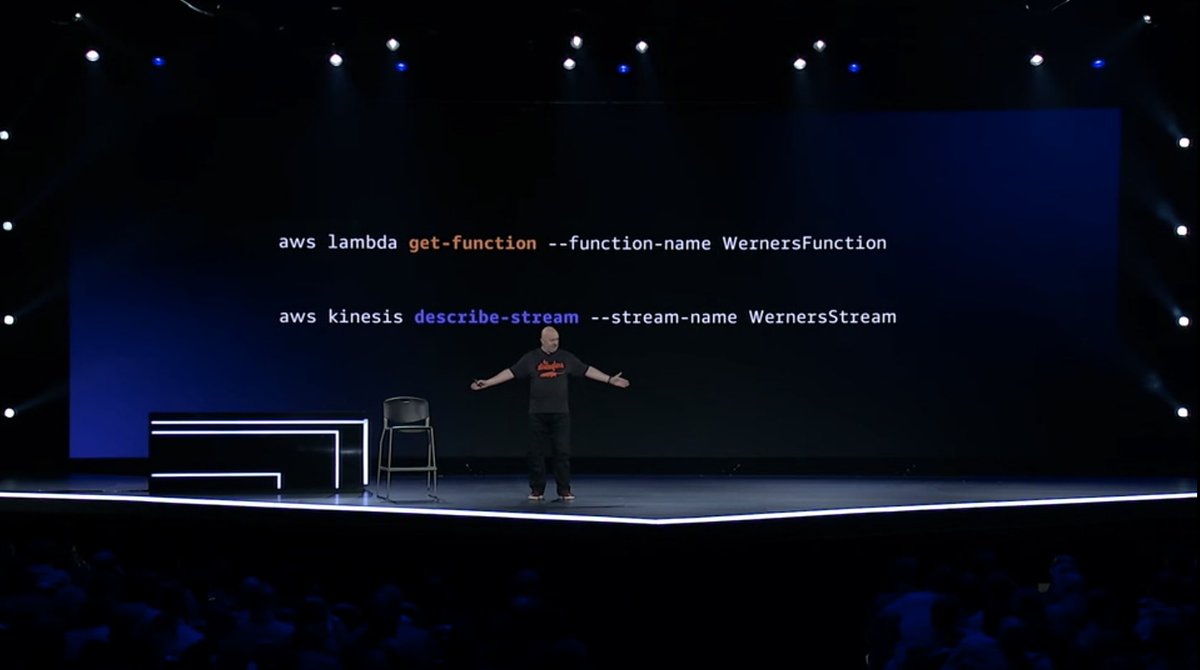

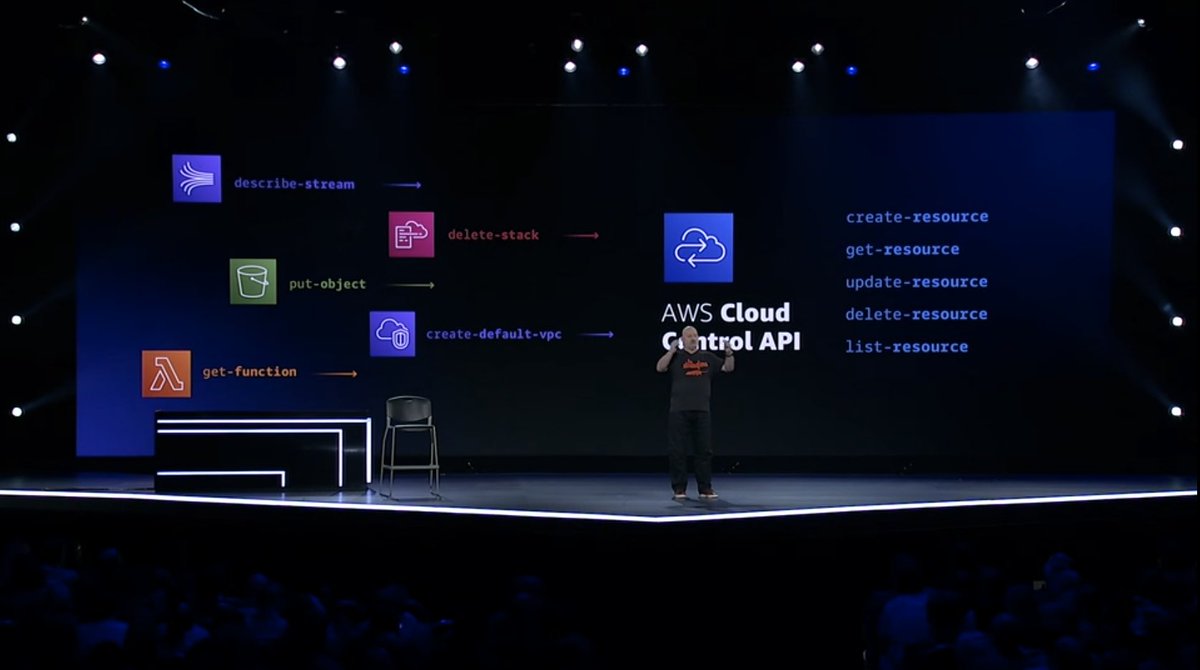

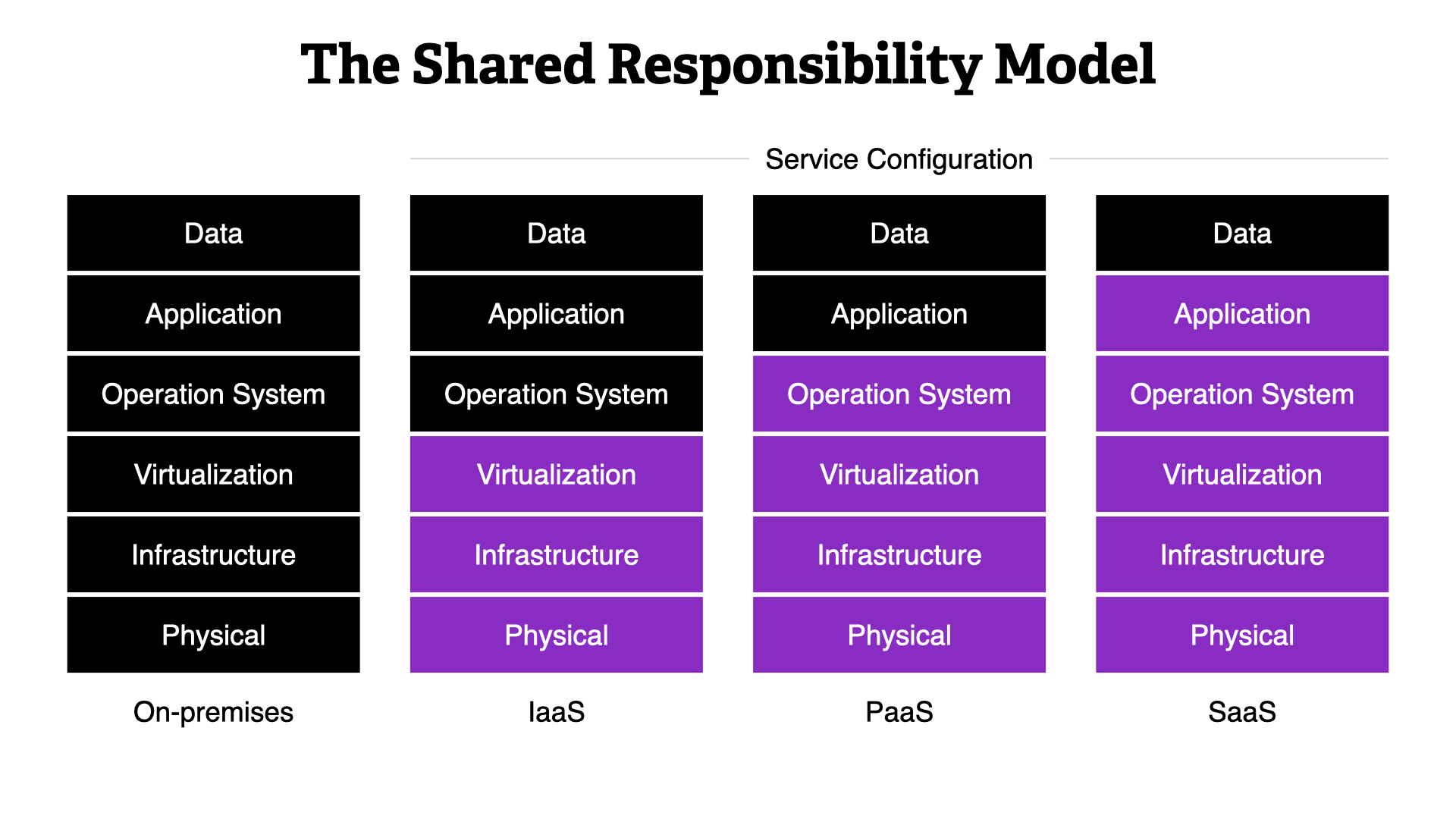

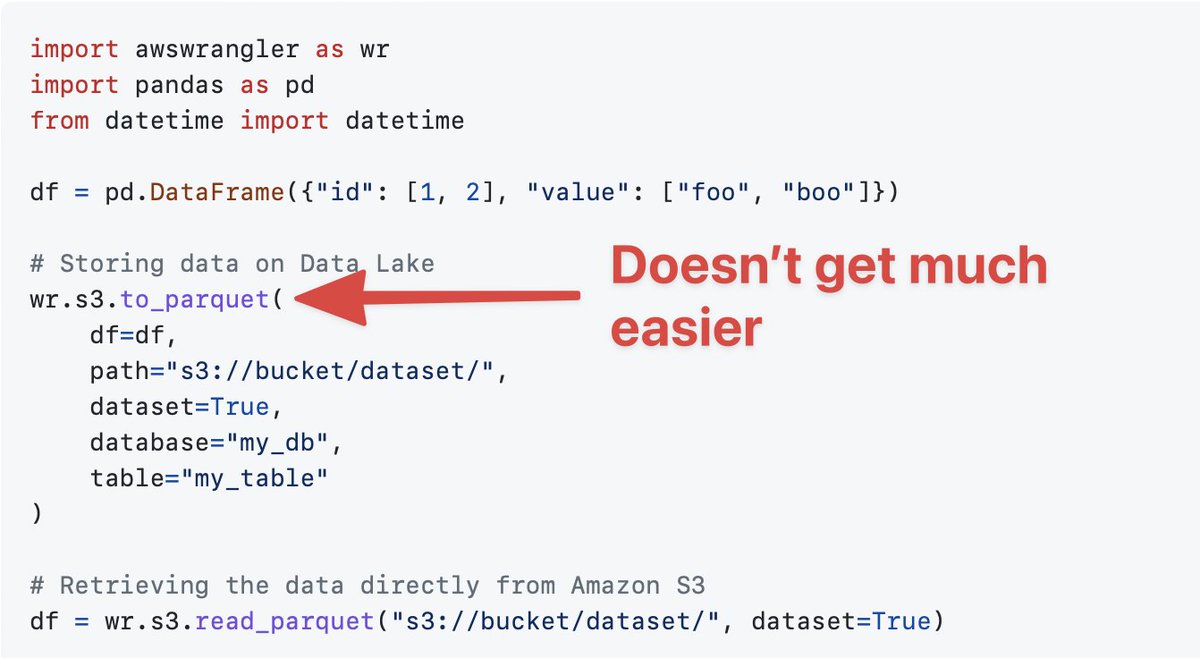

Deploying security controls in the cloud can be more direct. With all resources available via an API, connecting to systems, monitoring them, and gaining visibility are more straightforward than ever.

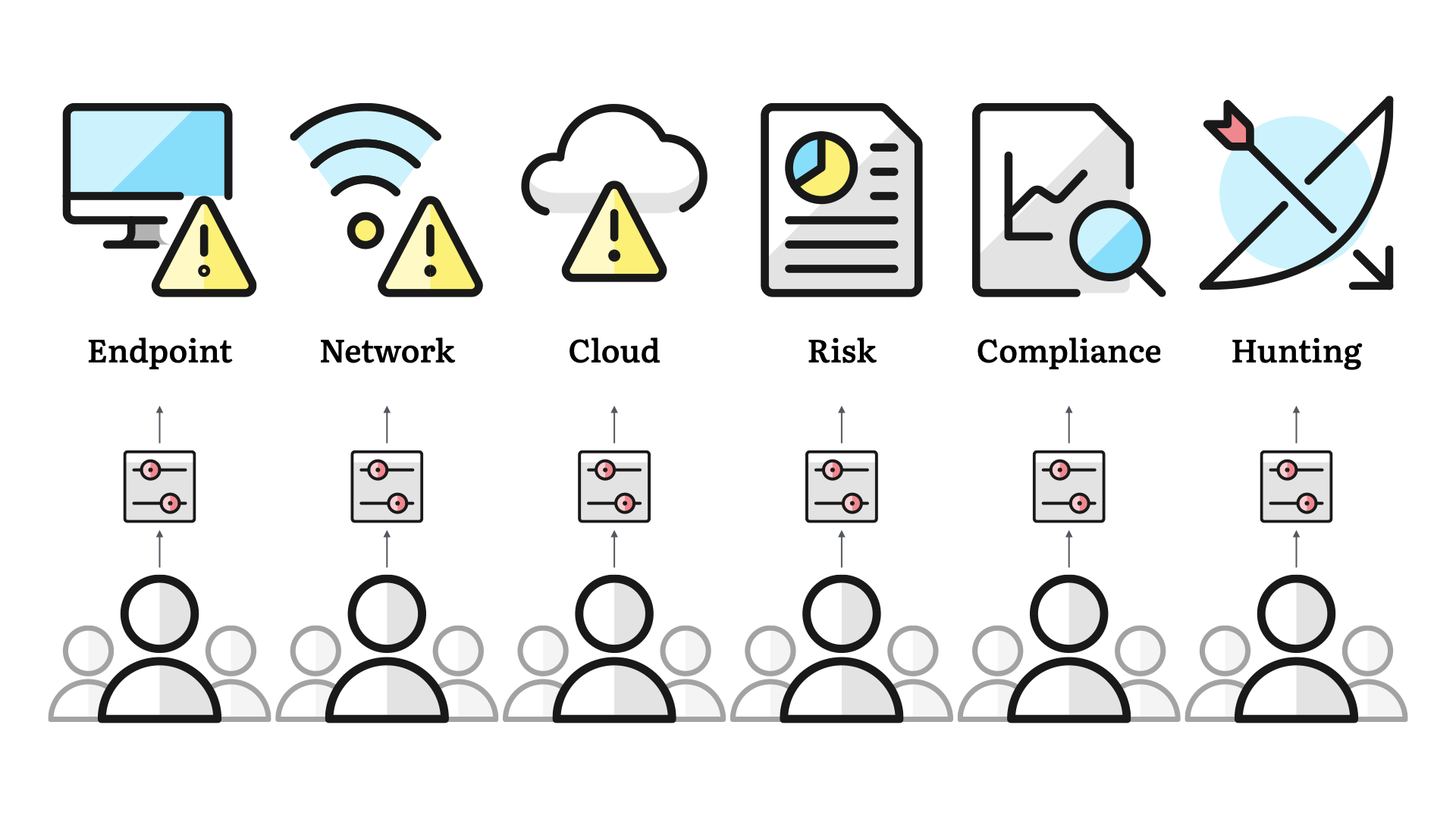

But there's more to security than just these three areas. We've expanded to risk practices, compliance activities, and proactive work like threat hunting.

Security teams in medium-sized enterprises are likely to scale to have one or two, or more, dedicated resources for each of these areas. Larger organizations can even get to the point where they have dedicated teams for each of these areas.

But one thing that tends to hold true—even for the smallest of teams—is that we organize our teams based on function.

This is Francine, she is responsible for our risk practice. Jo takes care of compliance. Etc.

Functional structure

Functional structures tend to exhibit these properties:

- They allocate resources based on their functions

- Information flows up and down easily (or at least by default)

- Decisions tend to stay within each of the functions

- Individuals in each function will develop deep expertise in that area over time

- Explicit workflows are required to break silos

And it's this last point that is the source of most of our challenges.

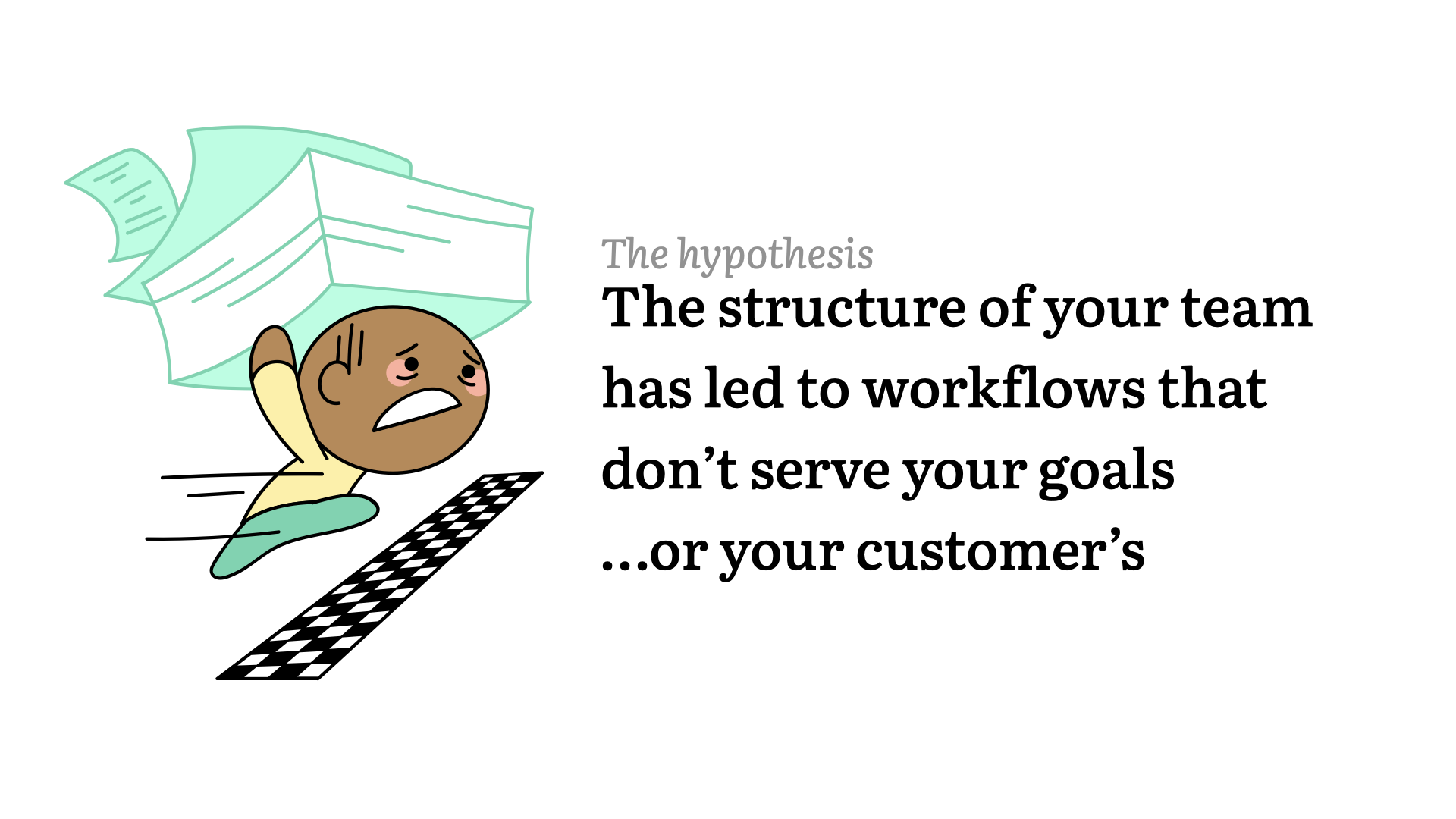

I don't think this structure is conducive to workflows that will meet your goals. Or the goals of your customers.

Worse, I don't think that we have the time/energy/awareness to step back and examine the link between our team structure and our workflows.

Simply put, we are too busy doing the work to understand how our approach to the work is making it harder for everyone.

A short activity

In this section, the audience is asked to—and politely does—participate in a group activity. They say each of the letters as they appear on screen.

A B C D

E F G

H I J K

Stop.

I'm not sure why y'all are doing it this way. Let's restart.

(In person, the audience almost always nails this part. They are saying each of the letters in English at the same time and nailing the beginnings of the song as well.)

A B C D

Stop.

I ask the audience, "Why are you saying it that way?"

They are confused. I then repeat the beginning of the alphabet in Dutch. The letter sounds are very different than the English ones.

The point of this callout is that I had very different expectations for the activity. Expectations that didn't line up with the audience's assumptions.

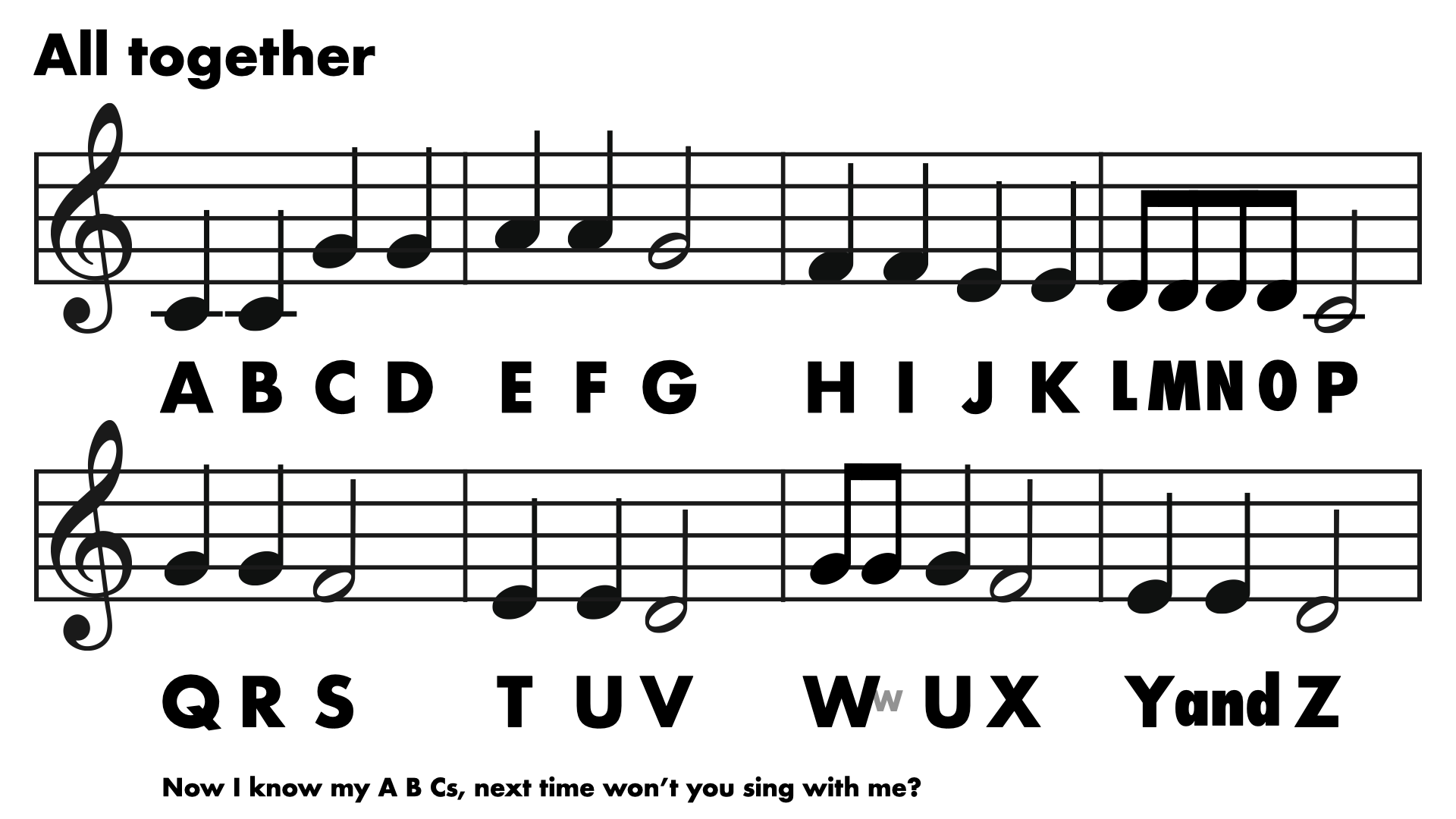

On the same page & language, we restart.

A B C D

E F G

I ask the audience, "How many vowels have we said so far?"

This breaks the flow of the recitation and song. It's an unexpected question, even though it's a simple one to answer.

We restart for the 3rd time.

A B C D

E F G

H I J K

LMNO P

Inevitably, a North American audience will say L, M, N, and O as "elemenopee".

It's a fun call out and it runs counter to the previous pacing, but it aligns with the song.

The point of this is that it's an unspoken change that everyone just gets. They go along with it because of the ingrained cultural elements, not because they talked about it beforehand.

Everyone in the audience (or enough that the point is made) recites the alphabet using "The ABC Song" instead of just saying each letter in turn.

There wasn't a discussion or agreement to do this. Outside of the subtle hinting in the visuals, it's just what everyone defaults to.

It's a cultural expectation. It's "the way we've always done it".

It's a direct parallel to a lot of activities in organizations and frequently the security team (me as the speaker in this case) is unaware of that expectation!

For fun, the audience gets to repeat the whole song without interruption.

A fantastic number will also (always) add the bonus line, "Now I know my A B Cs, next time won't you sing with me?"

For a bonus, unreleased tangent, it's pointed out that most folks also can't repeat a segment of the alphabet without starting at A and ending up in a close approximation of the song as well. Human brains are weird!

What happened?

Everyone knew the song. You default to it, because you learned it and practiced it a lot as a child.

It's a shared experience that reinforces the original experience and understanding.

I restarted the group 4 times. Each time to clarify something for me or to force the group to conform to my expectations and requirements.

That's a generally frustrating experience. While trying to fulfill my needs, I cost the group time and enjoyment.

...pausing to let that sink in...

Teams generally work well (enough) together.

Don't be the one who disrupts that.

Don't be the one who disrupts that to serve your own needs...even if those needs will help serve the group!

Self-checkouts

Let's pivot to an even more frustrating topic. But it's a topic that we can actually learn a lot from and relate to as a group.

In the beginning...

When they first rolled out, self-checkouts were hailed as a technological advancement, a time saver, and an overall benefit to both the business and the customer.

There were some discussions about the balance of those benefits, but outside of the "old man yells at cloud" segment, there wasn't a lot of negativity...at first.

I bring up self-checkouts because I'd like to share a story to help illustrate my overall point of the importance of explicit service design. To help understand how we can all be more effective security practitioners, I'd like to talk to you about my local pharmacy...

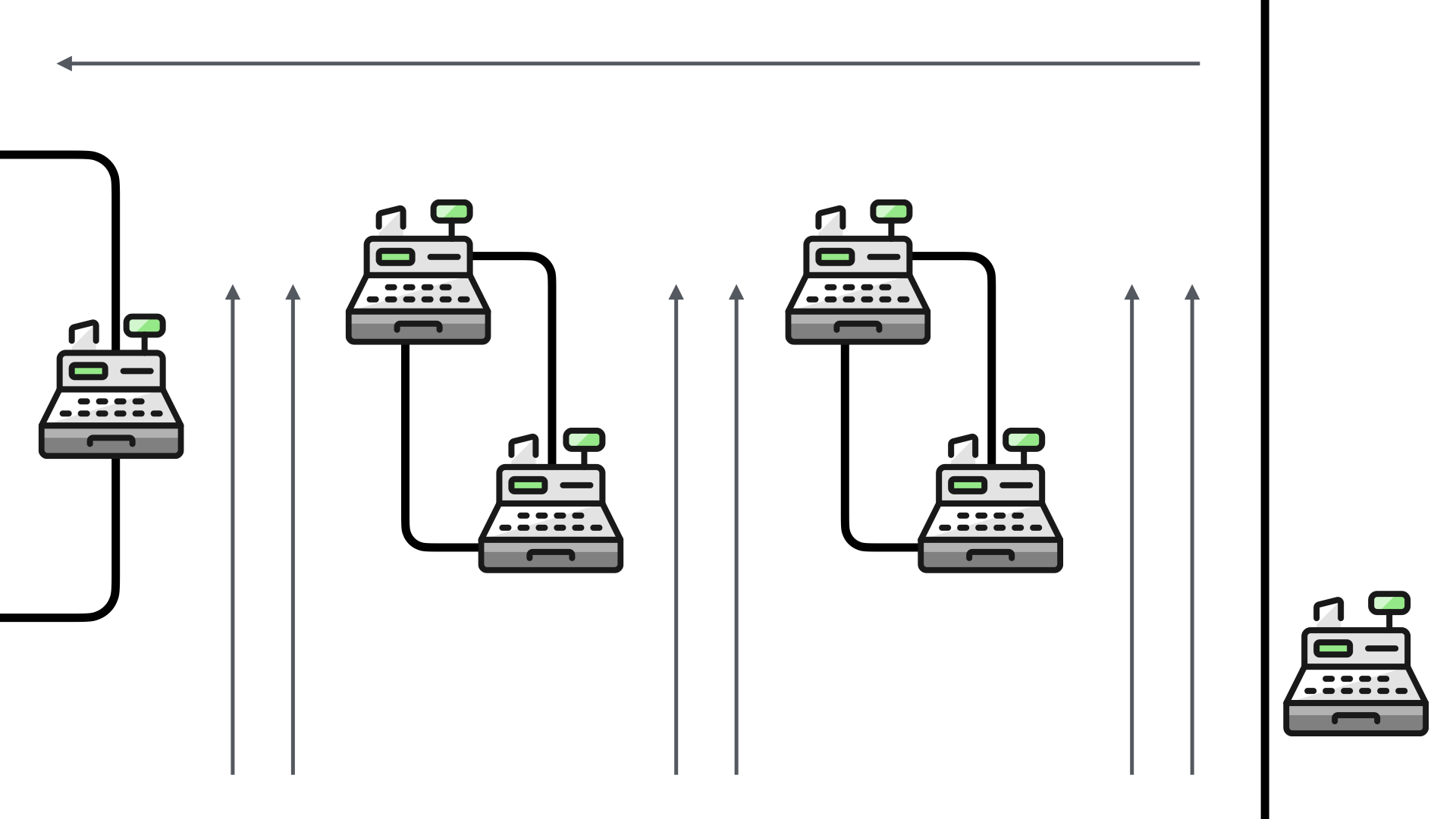

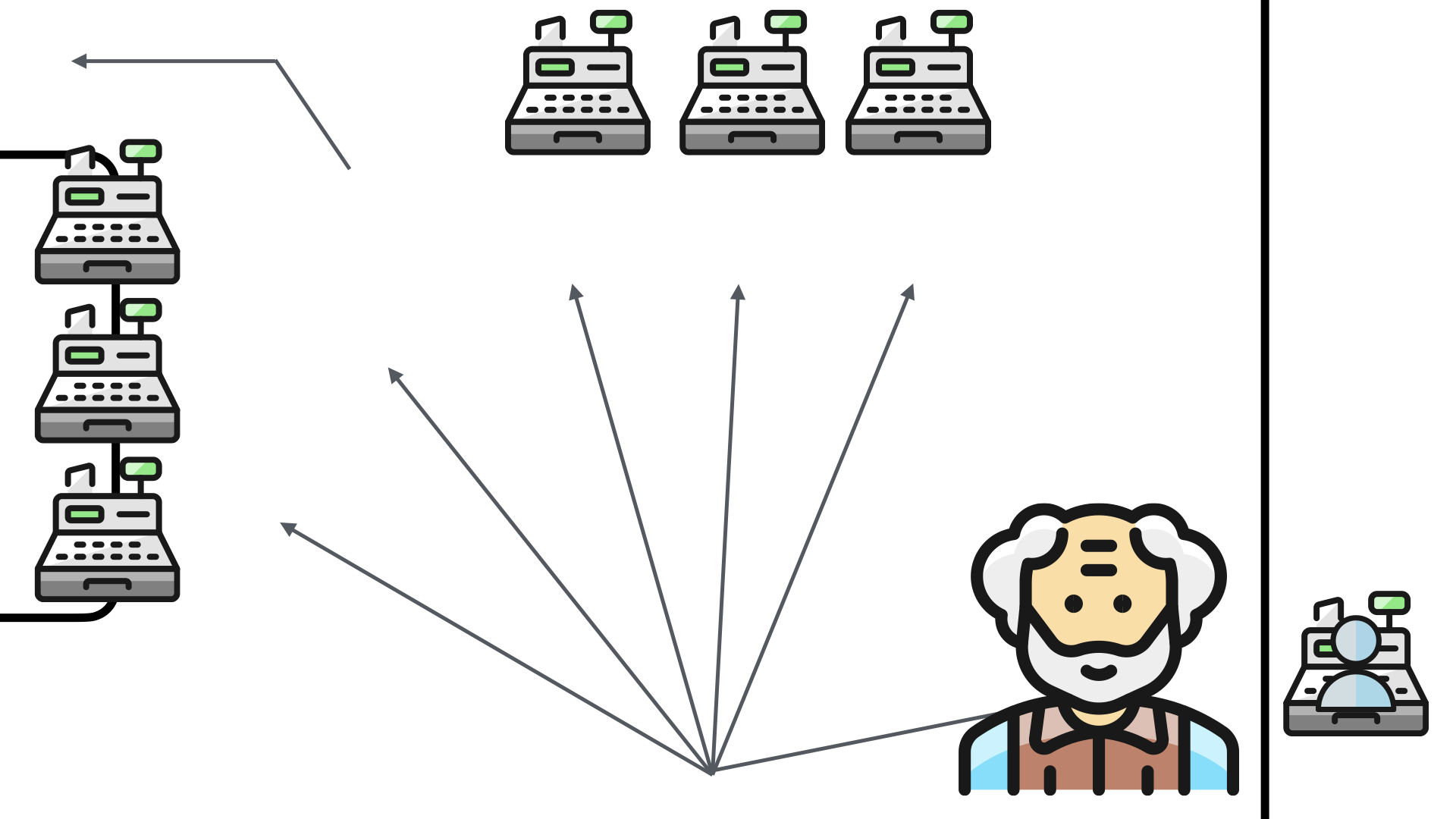

Before rolling out self-checkouts about 18 months ago, my pharmacy had six checkout lines.

Each one of the checkouts was staffed. In peak times, they had six employees running the six checkout lines.

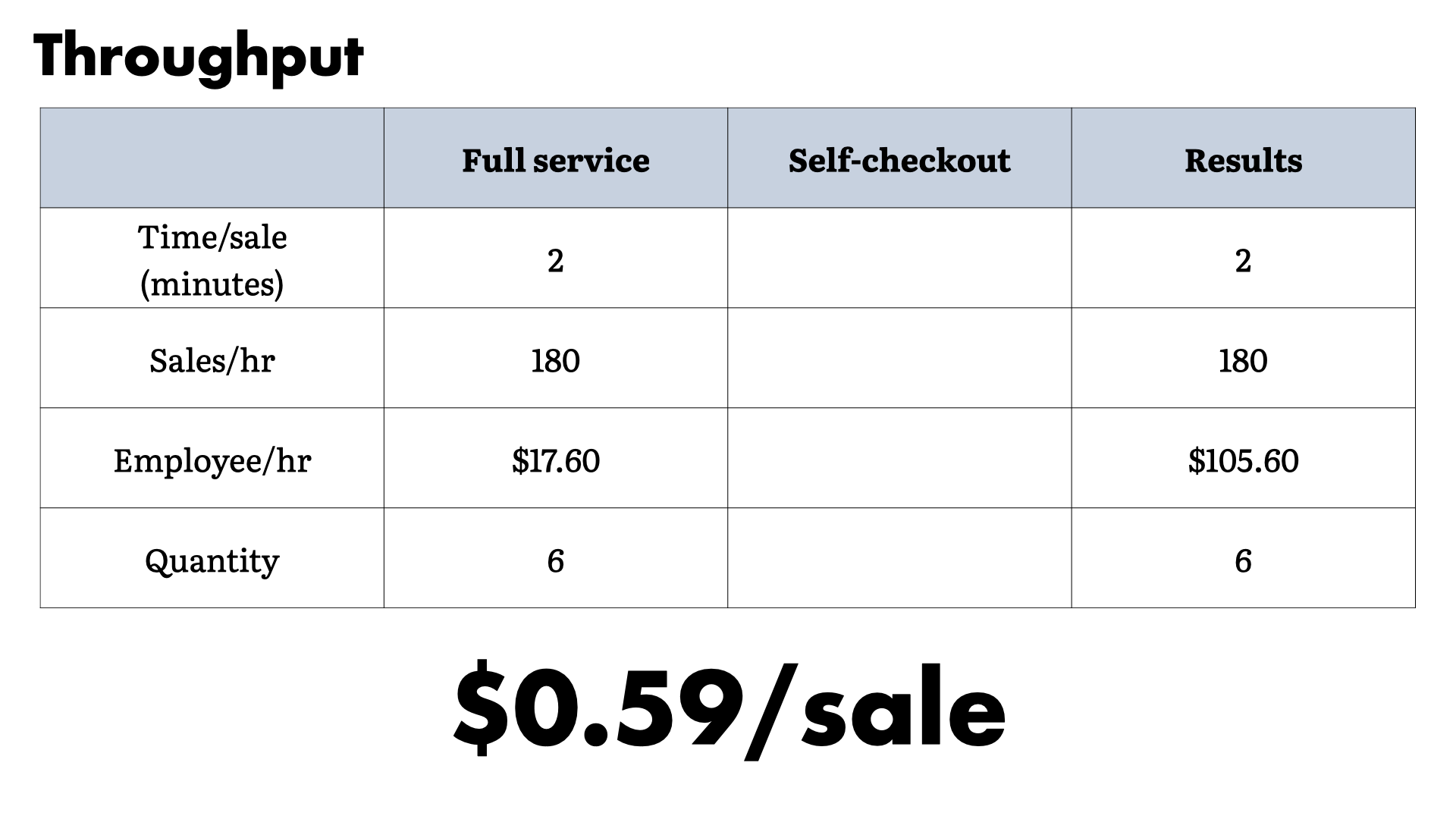

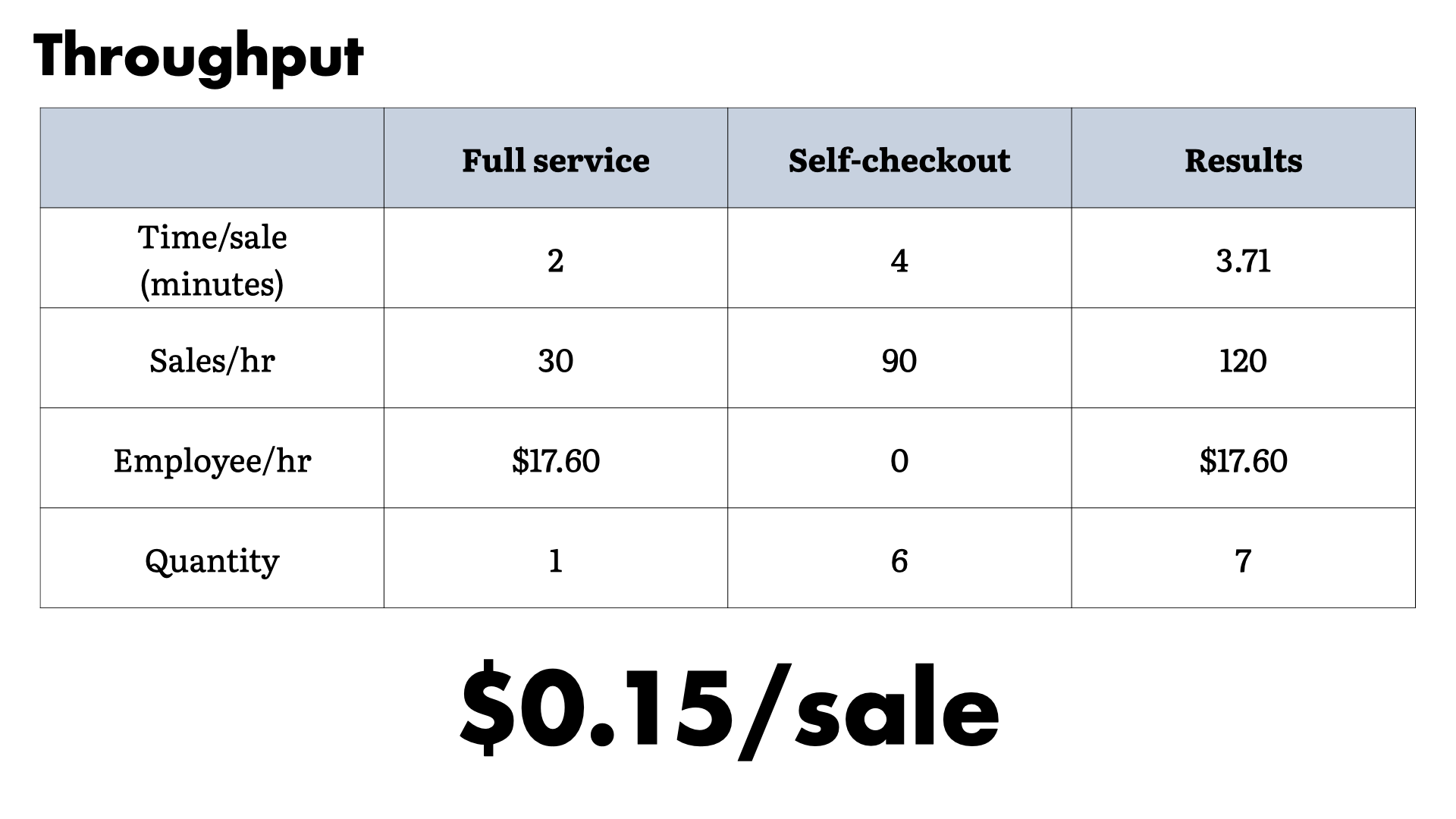

If we put ourselves in the owner's shoes, the six checkouts, running at a theoretical maximum, would require about $0.59/sale in overhead.

We get to that number by looking at the number of sales each line can process during an hour and the cost to serve that line.
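The inputs behind the $0.59 figure aren't given here, so the following is only a sketch of that arithmetic. The hourly cost per staffed line ($17.70) and the per-line rate (30 sales per hour) are assumptions, chosen because they reproduce the quoted number; the real inputs may differ.

```python
def overhead_per_sale(staffed_lines, hourly_cost_per_line, sales_per_line_per_hour):
    """Staffing overhead per sale: hourly staffing cost divided by hourly sales."""
    hourly_cost = staffed_lines * hourly_cost_per_line
    hourly_sales = staffed_lines * sales_per_line_per_hour
    return hourly_cost / hourly_sales


# Assumed inputs (not from the talk), picked to match the quoted $0.59/sale.
HOURLY_COST_PER_LINE = 17.70
SALES_PER_LINE_PER_HOUR = 30

full_service = overhead_per_sale(6, HOURLY_COST_PER_LINE, SALES_PER_LINE_PER_HOUR)
print(f"Full service overhead: ${full_service:.2f} per sale")
```

Note that with every line staffed, the number of lines cancels out: the overhead per sale is simply the cost of one line divided by what that line can process in an hour.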

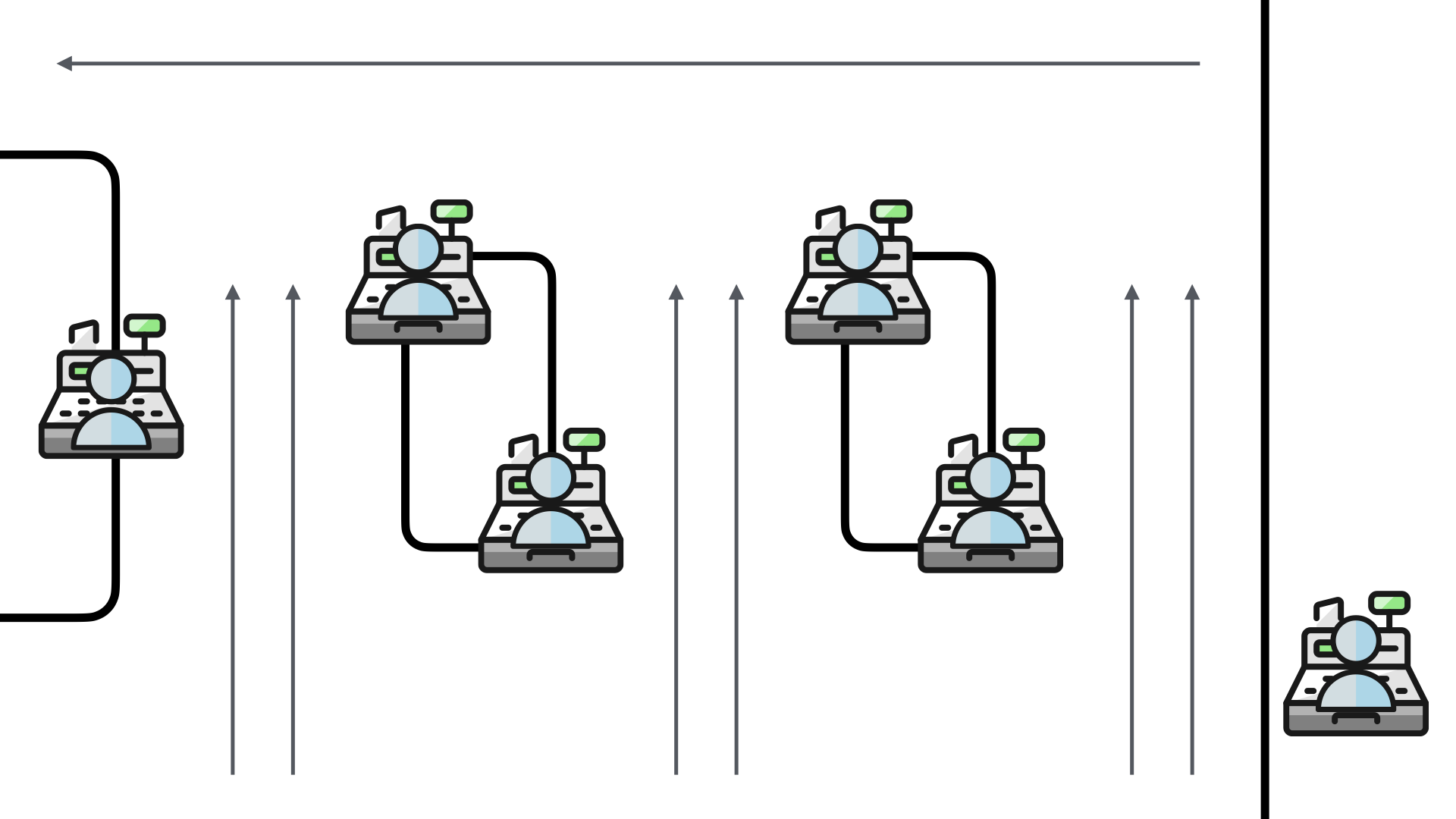

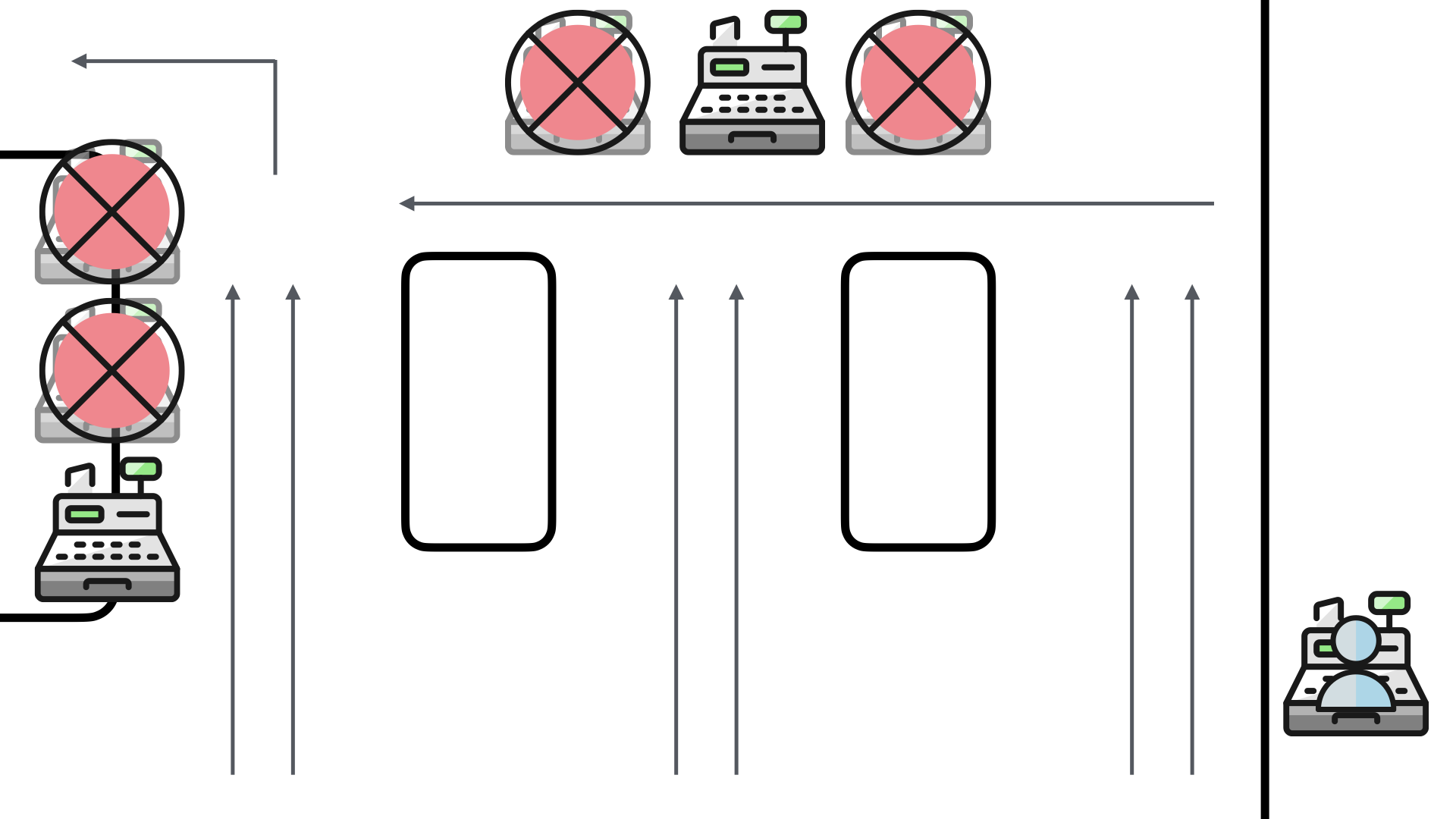

When the pharmacy deployed their self-checkouts, they made a couple of slight adjustments to the traffic flow.

The two middle lanes now were product shelves for those impulse buys. The back wall now housed 3 self-checkouts and so did the left-most checkout line.

The right-most checkout line was kept as a staffed line to help address any customer issues. This employee was also responsible for helping any self-service customers who encountered issues.

Now, when we adjust for the extra time it takes for self-service, the overhead drops significantly for the store.

They are pushing through fewer sales (120 vs 180), but at 25% of the overhead.

Given the average sale at a pharmacy these days, this probably isn't a great business move. However, the back end costs for employees are going to be significantly higher than maintaining the self-checkout systems.

The self-checkouts also don't have scheduling issues. They are always available and you don't need to try and predict demand. There's a consistency there that simplifies operations.

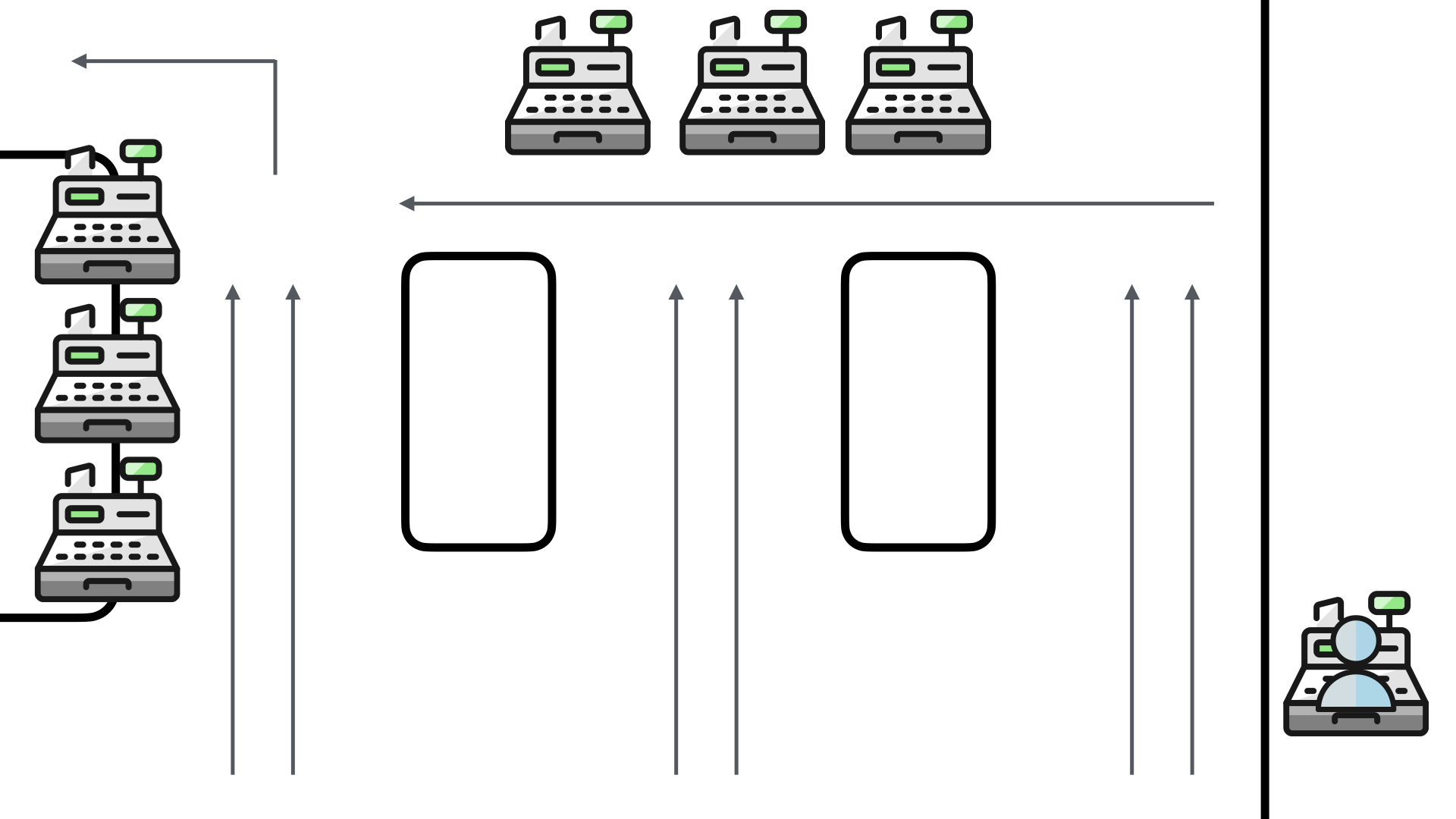

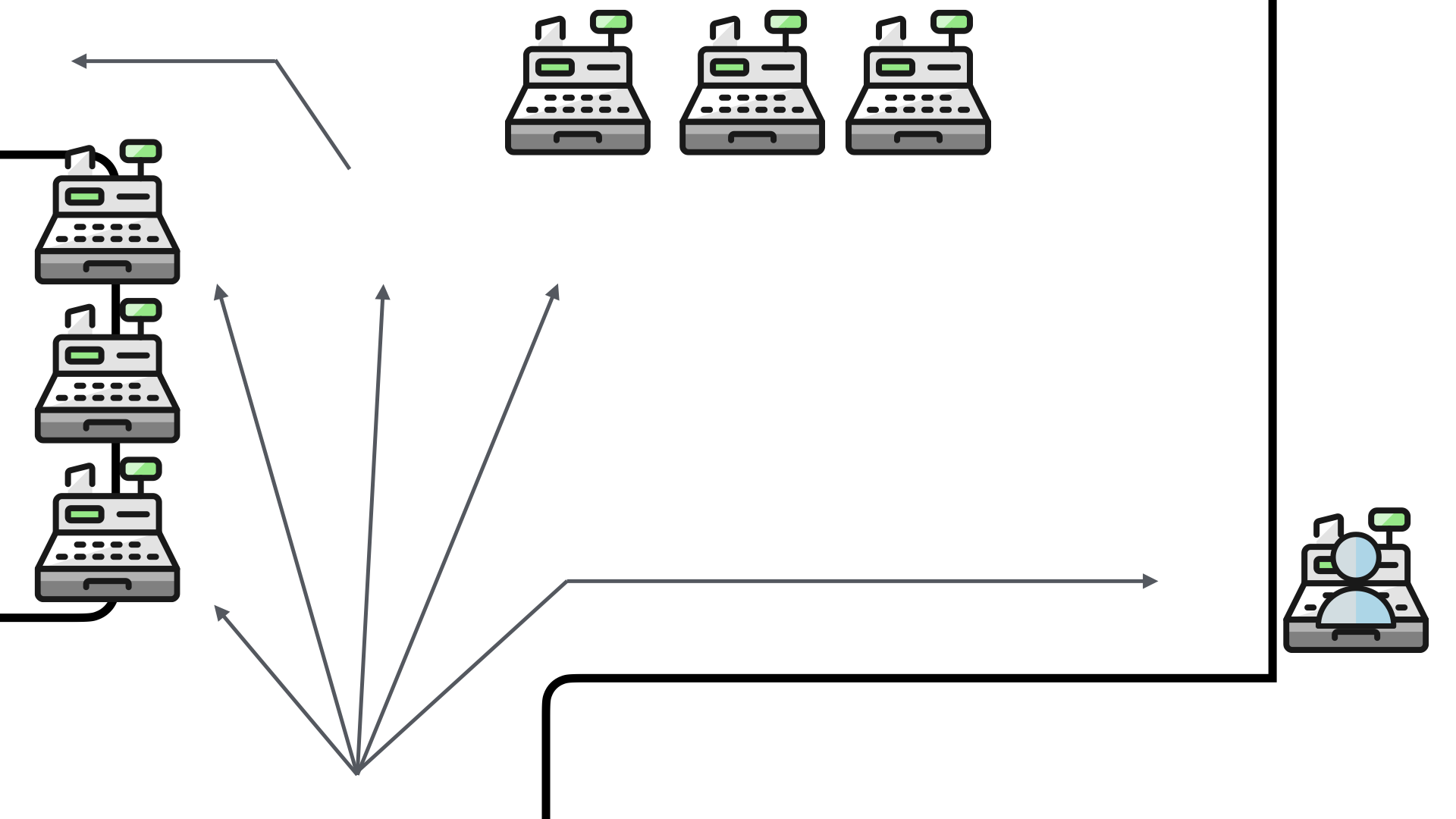

The problem—ok, a problem—the store encountered quickly was that four of the six self-checkouts weren't seeing much use.

The reason was simple: customers weren't seeing them!

The product displays, which were thought to be a clever way to re-purpose the previously staffed checkouts, were interfering with the view of the self-checkouts.

Customers were queueing up like they used to for the staffed checkouts and not taking advantage of the additional self-checkout capacity.

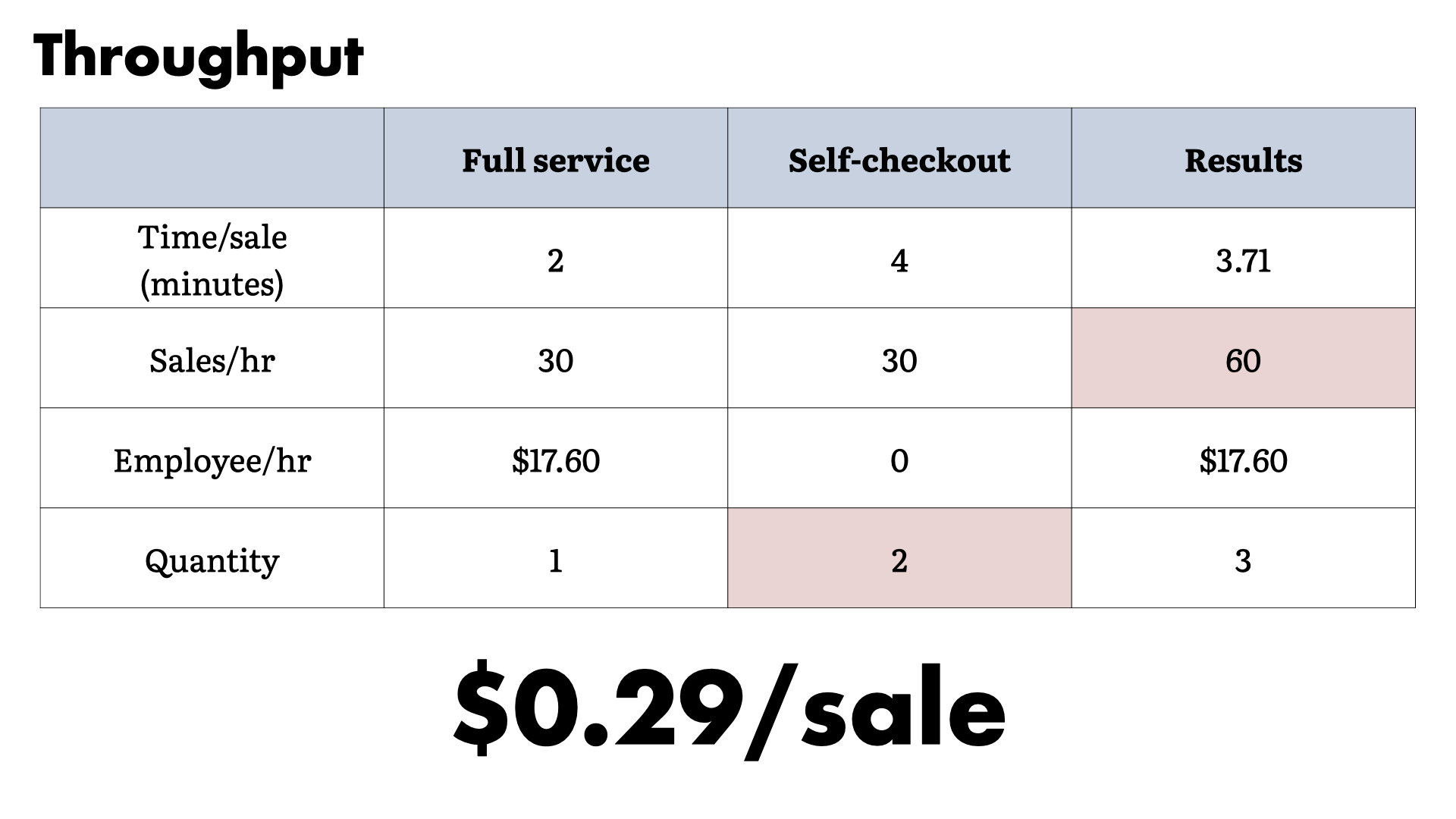

When we look at the throughput from this challenge, the overhead is half of the full service approach, not a quarter of it.

That's a huge impact to the expected savings. This is a problem that needs to be solved.
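To make the quarter-versus-half comparison concrete, here is a rough model of the three layouts. The rates and cost are assumptions rather than figures from the talk: 30 sales per hour for a staffed line, 15 for a self-checkout, and $17.70 per hour per employee. They were chosen because they reproduce the 180 and 120 sales figures and the $0.59 overhead quoted earlier. The model also assumes the four overlooked self-checkouts process nothing at all, which is a simplification.

```python
# Assumed values (not from the talk), chosen to match the quoted figures.
HOURLY_STAFF_COST = 17.70   # cost of one employee for one hour
STAFFED_RATE = 30           # sales per hour on a staffed line
SELF_RATE = 15              # sales per hour on a self-checkout


def checkout_model(staff, staffed_lines, self_checkouts_in_use):
    """Return (overhead per sale, sales per hour) for a checkout layout."""
    sales = staffed_lines * STAFFED_RATE + self_checkouts_in_use * SELF_RATE
    return staff * HOURLY_STAFF_COST / sales, sales


scenarios = {
    "six staffed lines": checkout_model(6, 6, 0),
    "self-checkout as planned": checkout_model(1, 1, 6),
    "self-checkout as observed": checkout_model(1, 1, 2),
}

baseline = scenarios["six staffed lines"][0]
for name, (cost, sales) in scenarios.items():
    share = cost / baseline
    print(f"{name}: {sales} sales/hour, ${cost:.4f}/sale, {share:.0%} of baseline")
```

Under these assumptions the planned layout costs a quarter of the full service overhead per sale, while the layout customers actually used costs half, because the same single employee is now spread over half as many sales.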

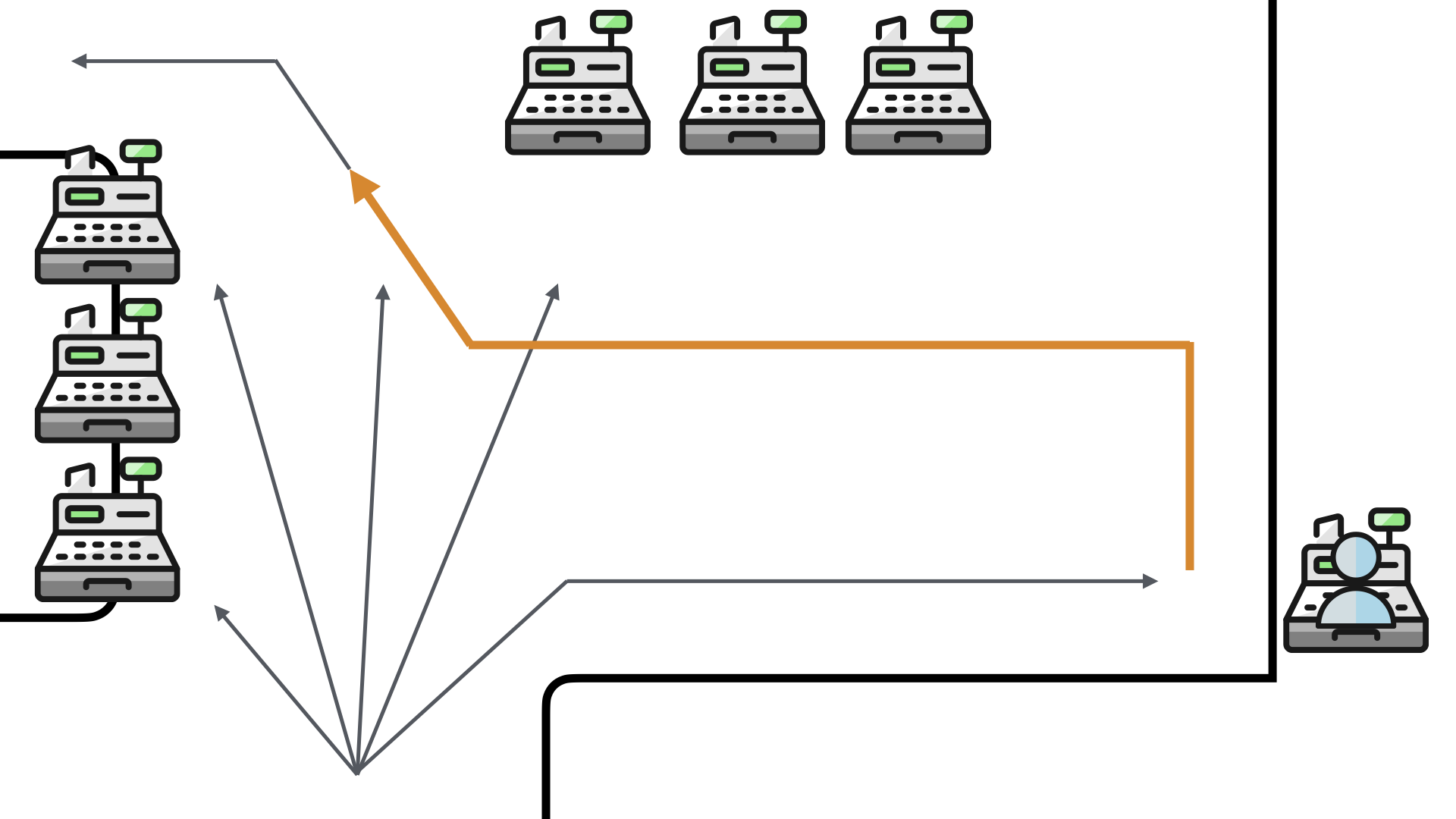

The solution the pharmacy came up with was to remove the obstructions. This makes perfect sense and really opened up the area.

While it removed the ability to convert the impulse buyers, it made it a lot easier to see the entire set of checkout options.

But there was a problem...

A significant percentage of the customers for the pharmacy are seniors. Seniors who were not having anything to do with the self-checkouts.

When presented with the suite of options, the seniors overwhelmingly selected the full service option. To the point where they were queueing up when almost all—if not all—of the self-checkouts were open.

This reduced the checkout throughput of the store dramatically.

Any guesses on how the store "solved" this challenge?

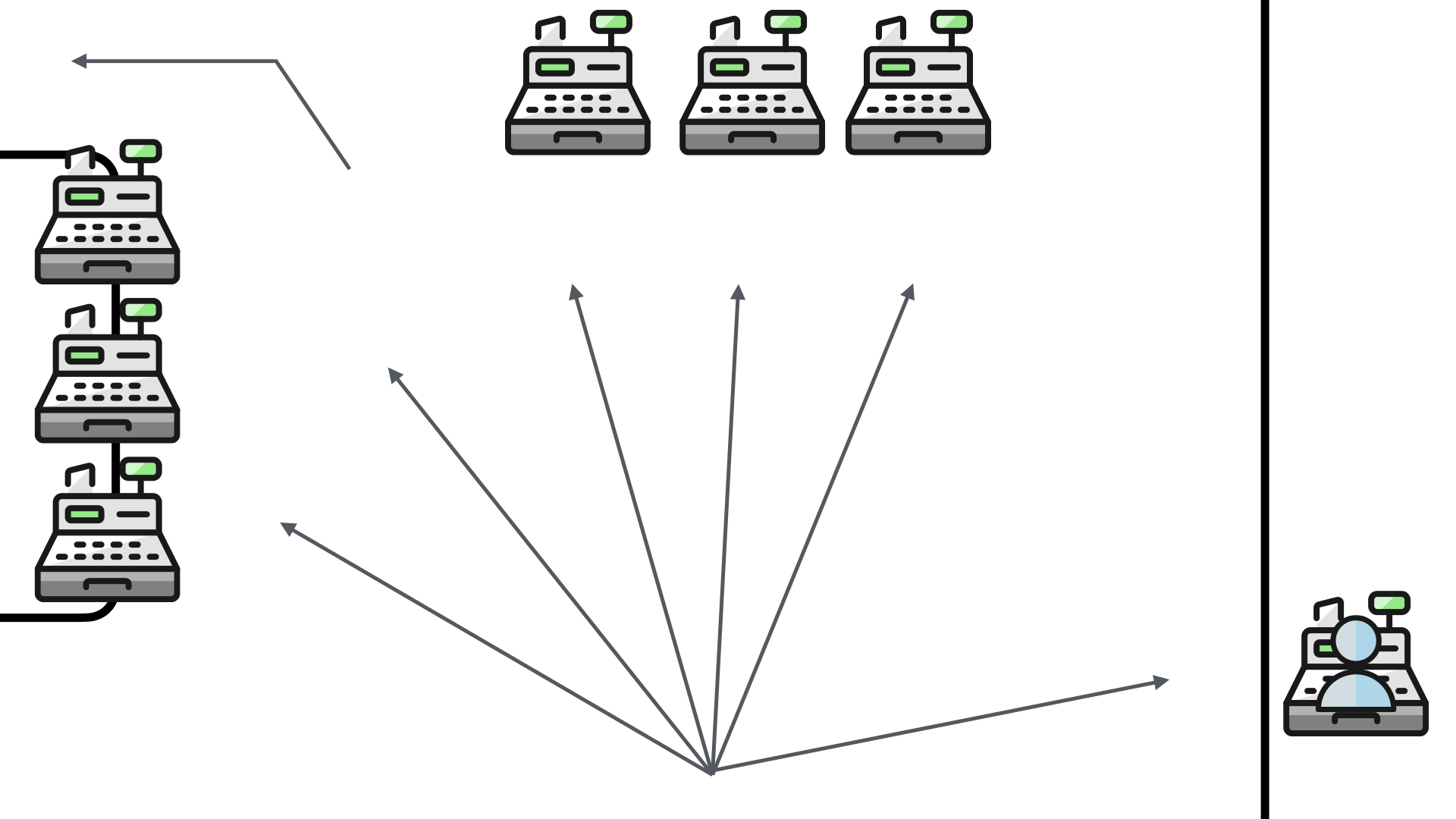

To address this issue, the store put up a new half wall. They physically blocked the direct access to the full service checkout.

The positive (?) aspect to this solution is that it helped to shape the queue. Instead of blocking traffic to the main shopping aisles, the queue now formed in the checkout area.

However, this block reduced the visibility of the full service checkout. The customers who wanted to use it had to now go out of their way to queue up for it...if they saw their preferred option at all.

This also doubled their walk for the workflow. They now had to walk to the queue, move to the full service checkout and then walk past all of the self-checkouts (again) to leave the store.

This is not a good solution and customers complained. To help address this, the store added an additional staff member to help guide more people to the self-checkouts.

In isolation, each of these decisions makes sense. Given problem X, solution Y is a reasonable approach. But, when you examine the overall workflow, the entire problem space, you see how ridiculous these steps are.

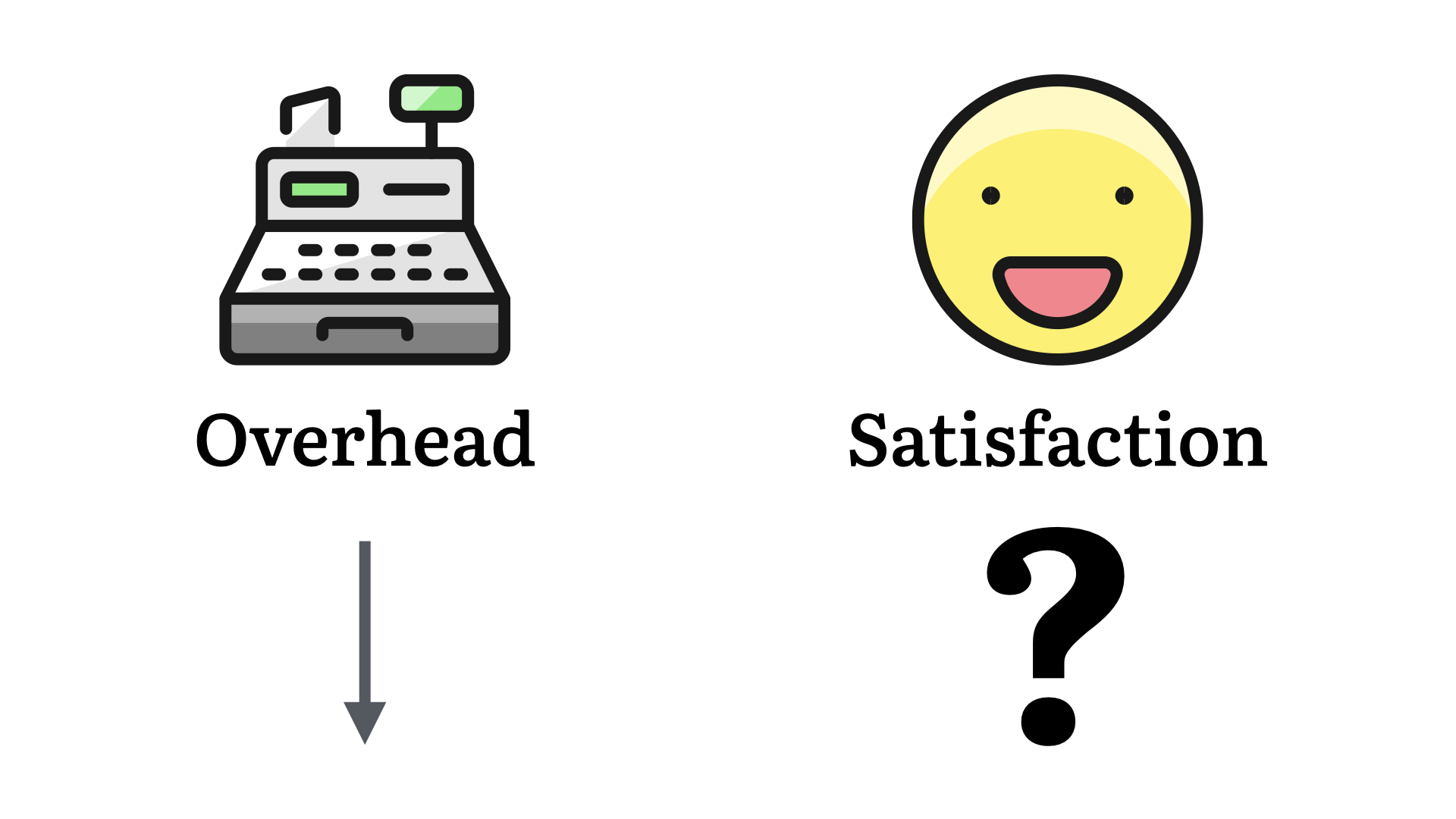

From the business perspective, the numbers are better. Overhead is down.

But what about customer satisfaction? This is much harder to measure. Anecdotally, as a customer, I can tell you it's down. How much will that impact their bottom line? I'm not sure.

For our purposes, the key takeaway is that even though the steps taken to address each issue were logical and moved towards the stated goal, the result isn't what was intended.

And now...

It's not just my experience or this pharmacy: self-checkout has not been an amazing solution.

Through multiple iterations of the various platforms, a positive and smooth self-checkout is a very rare experience. This is now one more thing that we just put up with...despite the general feeling.

Again, this is a result of a series of logical decisions. The problem is that the context window for those decisions got smaller at each and every step.

The end result is a lot of effort and an outcome that may—or may not—align with the actual business goals.

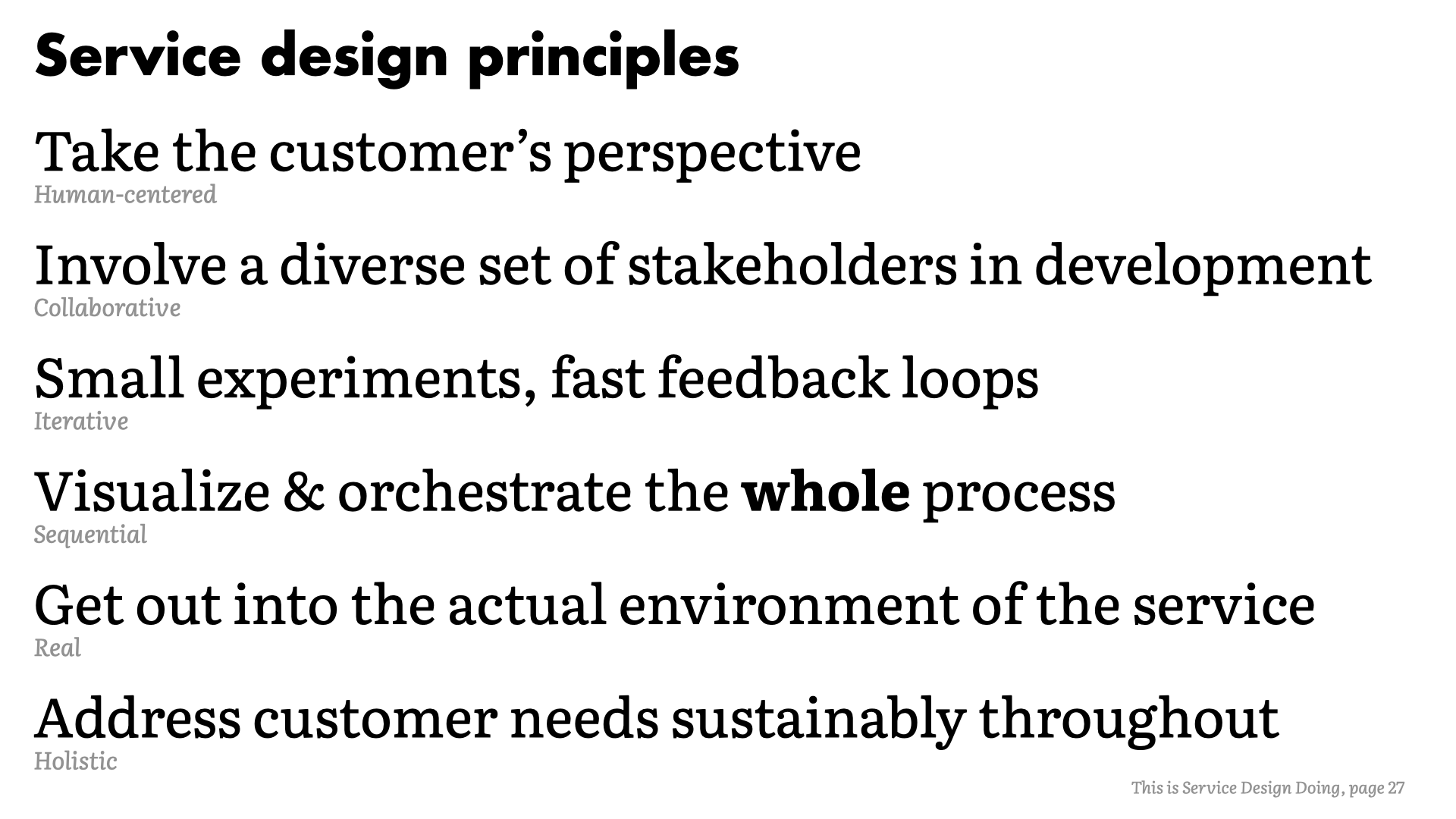

Service design principles

While there are formal methods of doing service design, at its core, simply asking questions and listening to the feedback will improve your team's workflow significantly.

However, the principles proposed in "This is Service Design Doing" are a great way to establish a shared understanding of what you're setting out to do.

In the simplest terms, those principles are:

- Take the customer's perspective (human-centered)

- Involve a diverse set of stakeholders in development (collaborative)

- Small experiments, fast feedback loops (iterative)

- Visualize and orchestrate the whole process (sequential)

- Get out into the actual environment of the service (real)

- Address customer needs sustainably throughout (holistic)

"This is Service Design Doing" is an excellent starting point. It's not the only reference out there, but it's very approachable and the Methods book is a great playbook to help you implement changes in your team.

Risk assessments

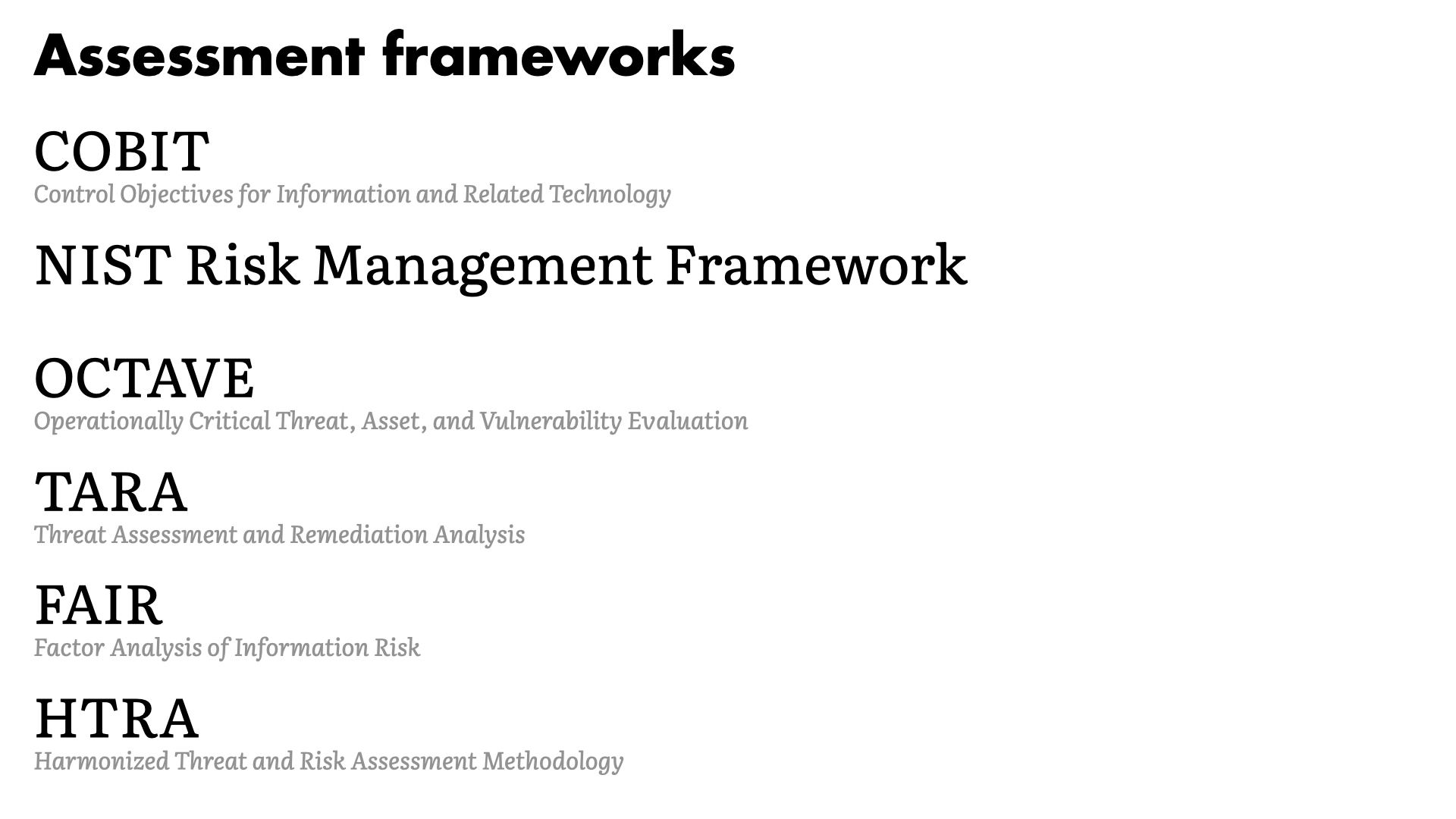

Assessment frameworks

There are a lot of different frameworks for doing risk and threat assessments. There are advantages and disadvantages to each, though really any will do.

The fact that you're conducting assessments (and regularly updating them!?!) is the most important thing.

How many folks use one of these frameworks? Or something similar?

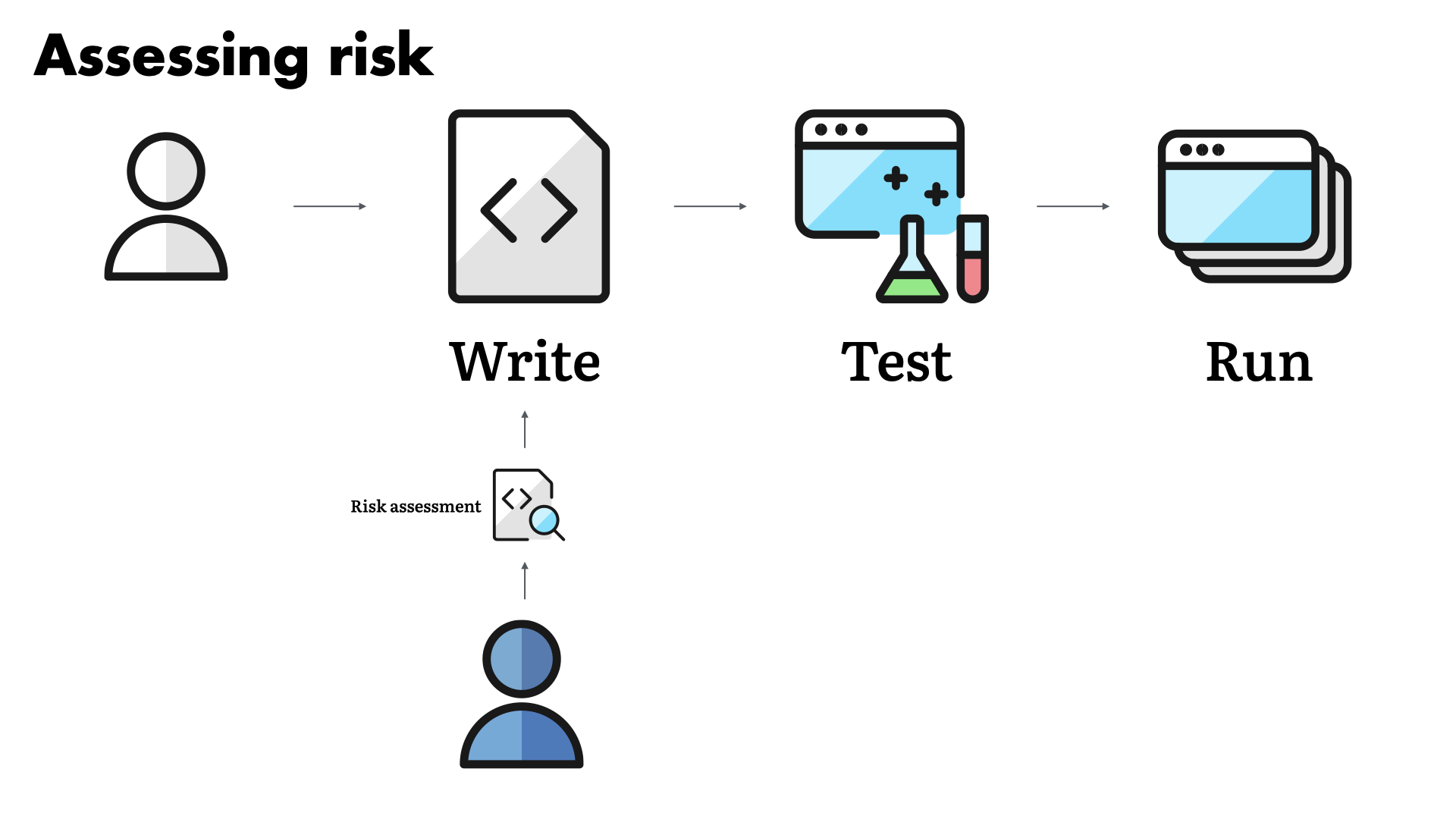

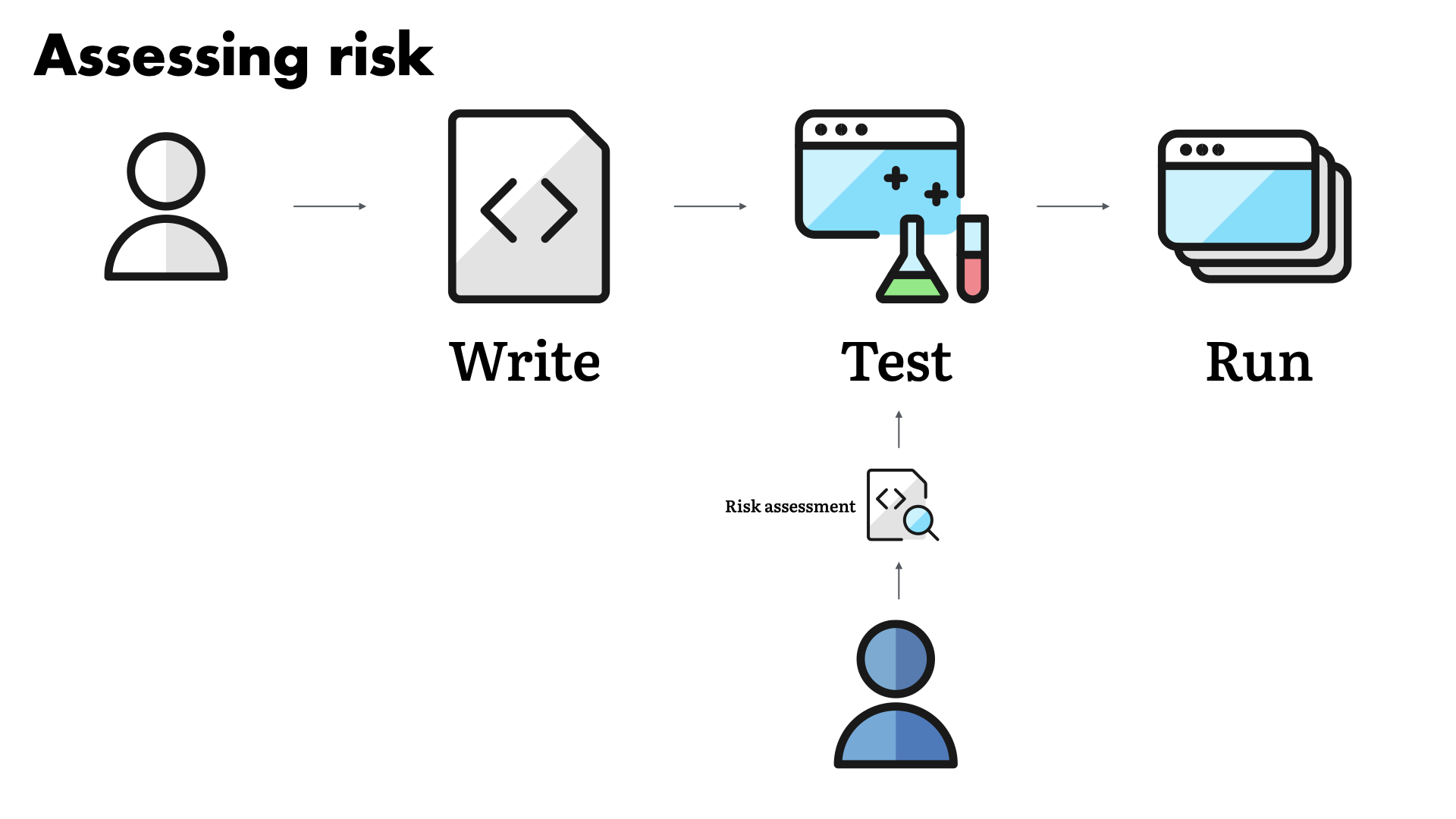

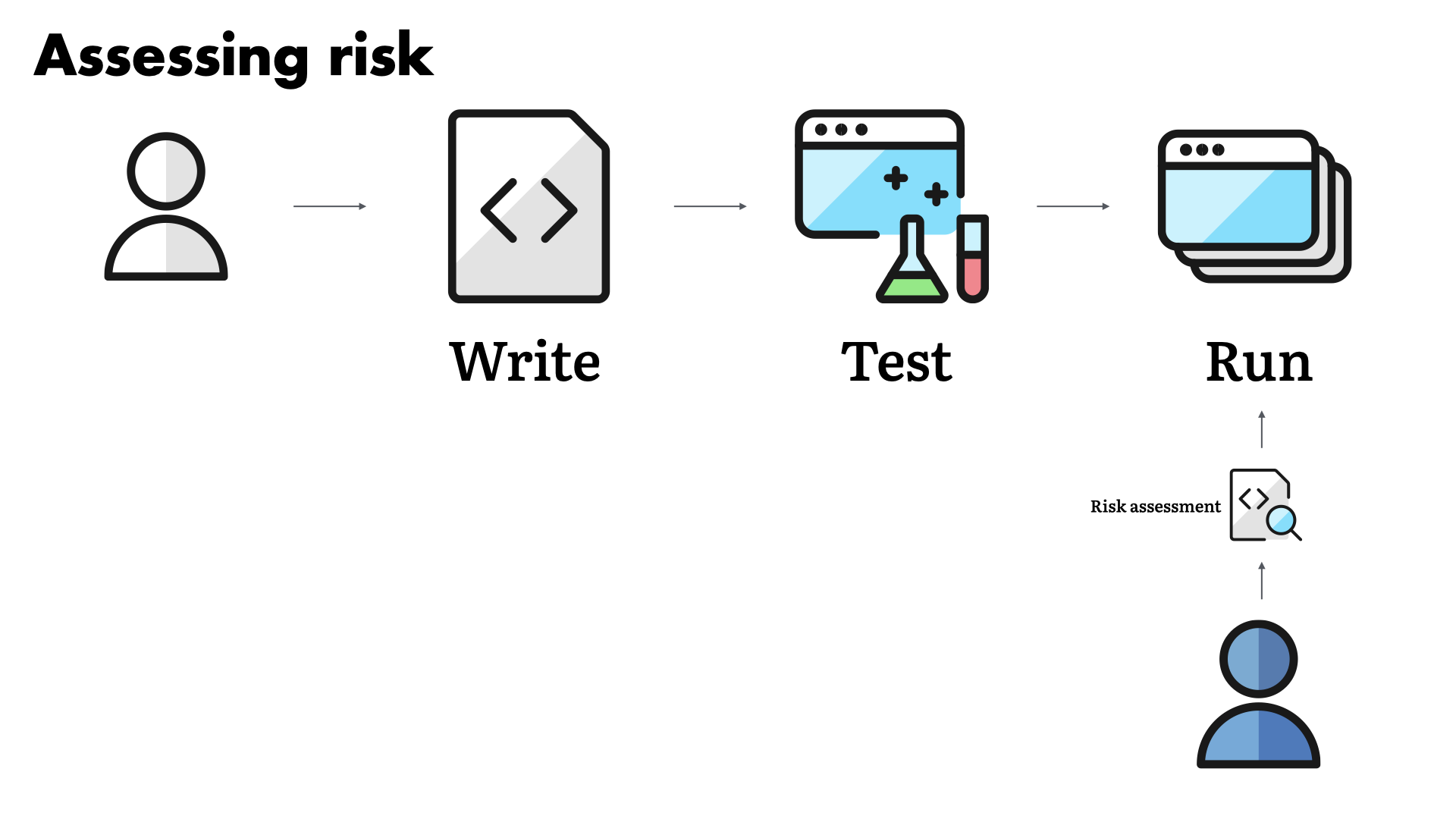

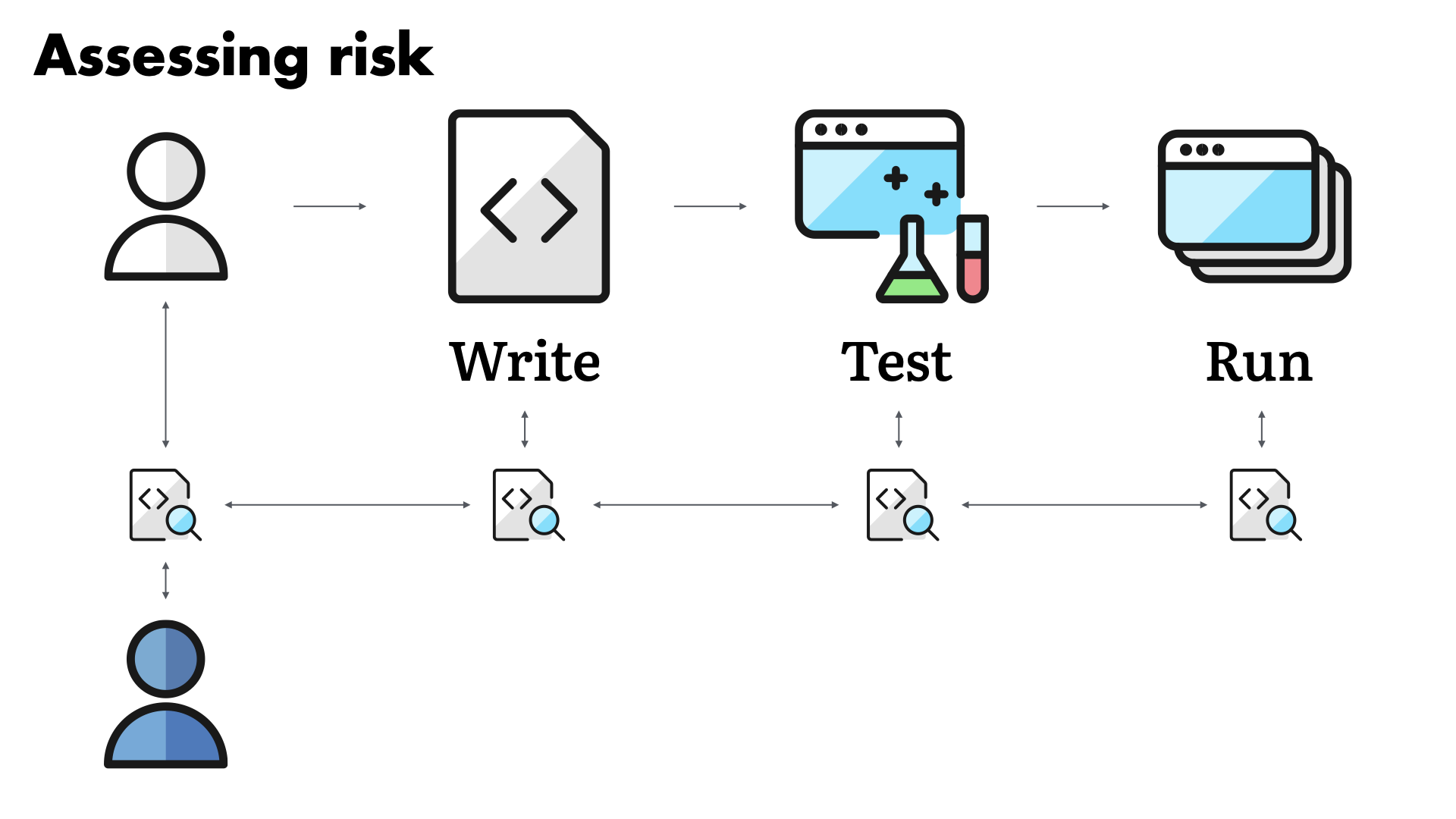

Assessing risk

Do you conduct the assessment when the team is writing the code and building the solution?

...when they are testing the solution out?

...or maybe when it comes time to run the solution?

Trying to start and then finish an assessment just as things are going to production is far too common. We—the security team—end up in this position often because of some of the service challenges we're talking about here.

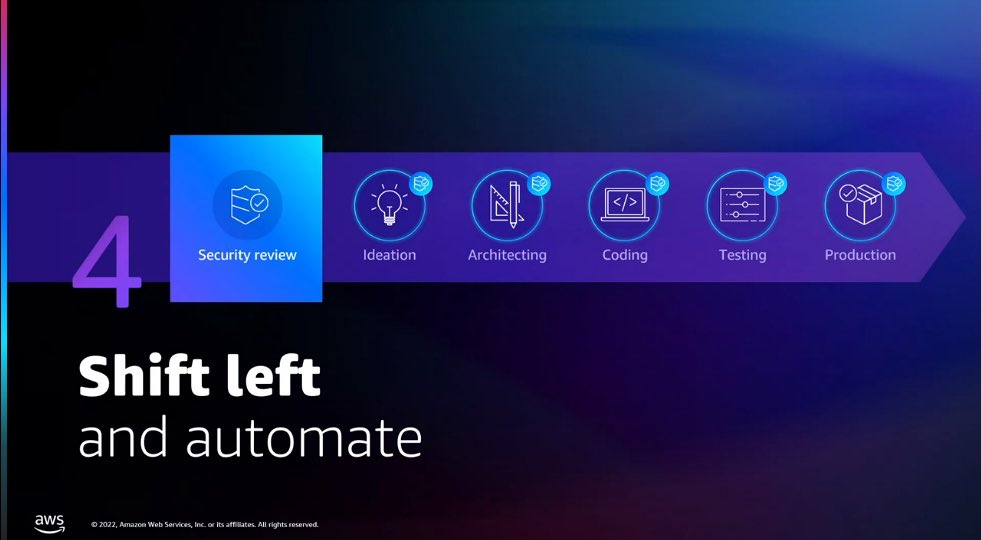

Of course the answer is that you should be doing risk assessments as a continuous process. There is assessment activity at all stages of solution development.

But, this only works if you're collaborating with the builder team. If you have the trust of other groups in the business. You have to work together and towards a common—and commonly understood—goal for this to actually work.

Getting there...

How do you end up in this utopia? This fictitious, "it's easy to put on PowerPoint" world?

The honest, open answer is, "Slowly, patiently, with a series of small steps that each get you closer to your shared goals."

Let's start by looking at the service design principles and the questions we can ask ourselves in order to start to find the path forward.

If we take the customer's perspective, we should have answers to the following questions:

- Why are we doing this?

- What do I get from it?

- How can I make this easier?

When it comes to risk assessments (and other security work), often the answers are:

- Not sure.

- No idea.

- Just not do it?

Those are not great answers and they are strong indicators that we—the security team—need to be doing a much better job of communicating.

When addressing a good representation of your stakeholders, ask the following of your own team (security):

- Will the same process work for everyone?

- What are the key outcomes?

- Are we removing waste from this process?

Making small changes and getting feedback as quickly as possible is one of the most important things you can do for your work.

- When was the last time we asked if this worked?

- Do we gather data on our process?

- What adjustments have we made?

These are all questions that will help you build your feedback loops and help you to create a truly iterative process.

In the examples we worked through today, we saw the value of taking the big picture view. Understanding the entire process is the only way to avoid the shrinking context path like we saw with the self-checkout example.

- Does our work start and stop at our team "borders"?

- How much do we know about our customers?

- What happens after the assessment is done?

Visualizing and orchestrating the whole process is key to breaking out of your silo. It's how you counter the limitations of the functional team structure.

Too many teams lay out their workflows based on their understanding and expectations of the customer. While it's possible that this might be accurate, it's unlikely.

Getting out and experiencing your customer's reality will help you understand their perspective. That understanding will lead you to better solutions.

My pharmacy didn't understand the majority of its customers. They missed the fundamental frustration that self-checkouts bring up with their older customers. No one wants to feel like they don't understand or that they are the problem and "don't get" the technology.

- Have you sat with your customers? With theirs?

- How often do you connect with the business?

- Do you know how other teams work?

- Have you tried their work, their tools?

- Are you working to make things simpler?

- Can you help the customer do more on their own?

- Is there something working really well? Can you do more of that?

Sustainability in processes is tied to complexity. Do not attempt to design a process that covers 100% of the edge cases. A workflow that solves 80–85% of the most common cases and has an allowance for the remaining 15–20% will be far more effective.

When making a decision, the simpler path is where you should be aiming.

This is bad

If your customers are unhappy, you have work to do. Frustrated teams work around security workflows. Not because they don't want to be secure, but because they want to get their work done.

Security is in their way. You have to avoid that at all costs.

So, do we think that the structure of our teams is influencing our workflows? And that these workflows are not serving our needs or our customers?

I do. And I think we need to change. I'm confident we can change and that those changes don't need to be all-encompassing to start.

We start by choosing to address these gaps.

We build a network of support within the business. Build understanding of how other teams work, how they communicate, and how our shared goals align.

You cannot succeed as a security team without the support of other teams in the business. The numbers simply don't add up. You need to succeed together.

The good news is that you have the same goals. You just may be speaking different languages right now or failing to share each other's perspective.

You can address these challenges and improve your security by working together. And that starts with you taking a small step towards that goal.

References

- This is Service Design Doing

- This is Service Design Methods

- Reset, by Dan Heath

- This is Service Design Thinking, by Marc Stickdorn and Jakob Schneider

- Orchestrating Experiences, by Chris Risdon and Patrick Quattlebaum

- Mapping Experiences, by Jim Kalbach

- Switch, by Chip and Dan Heath

- Value Proposition Design: How to Create Products and Services Customers Want, by Osterwalder, Pigneur, et al

- Upstream, by Dan Heath

- Unreasonable Hospitality, by Will Guidara

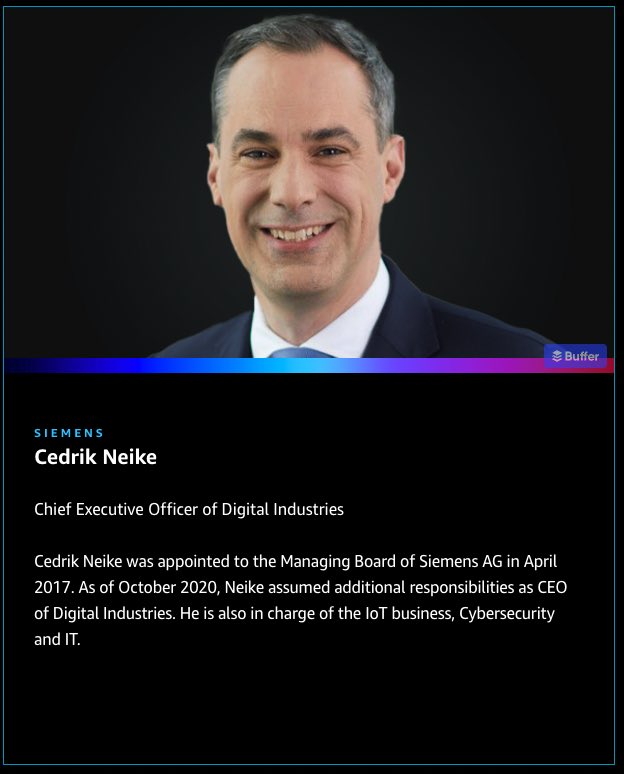

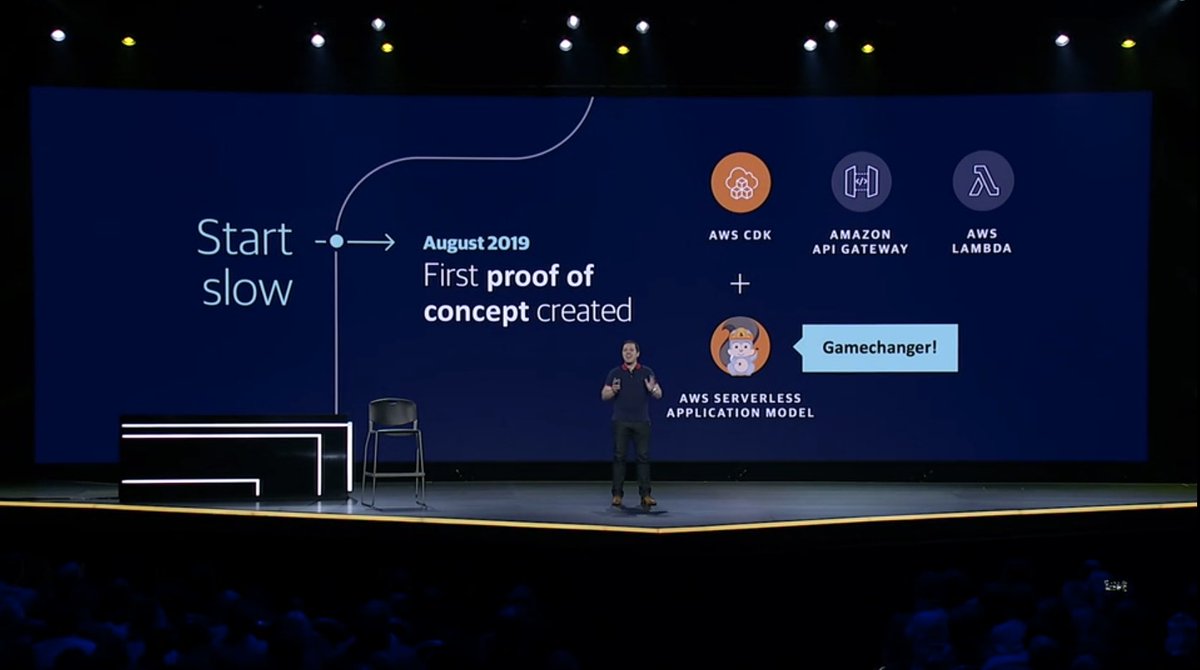

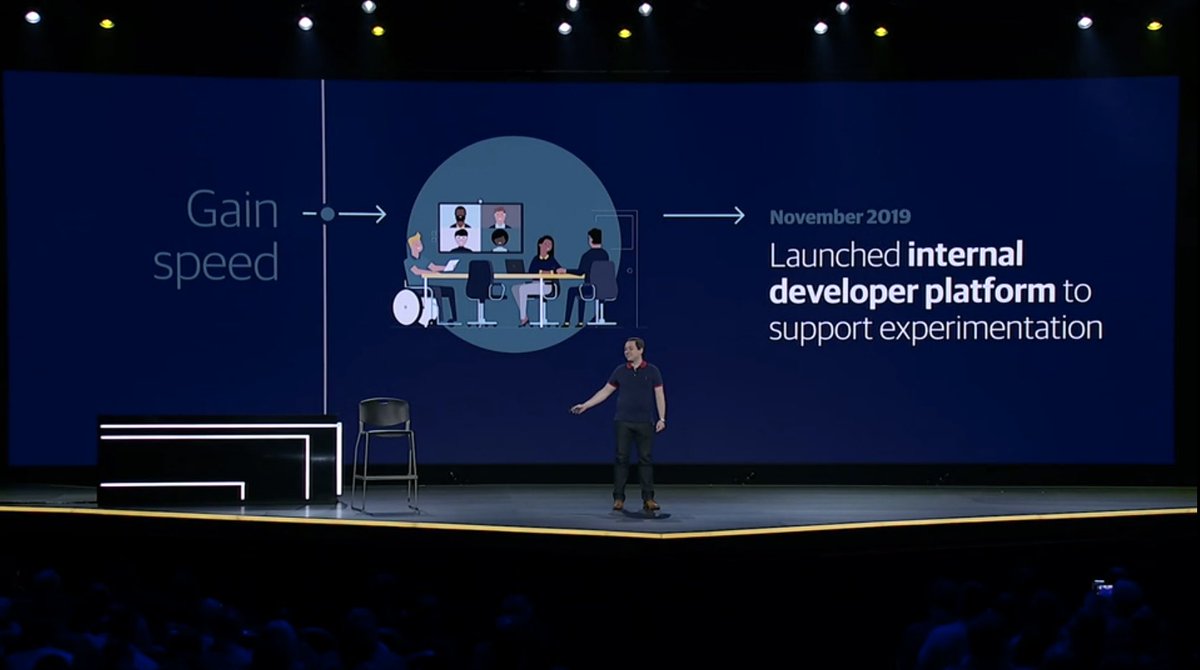

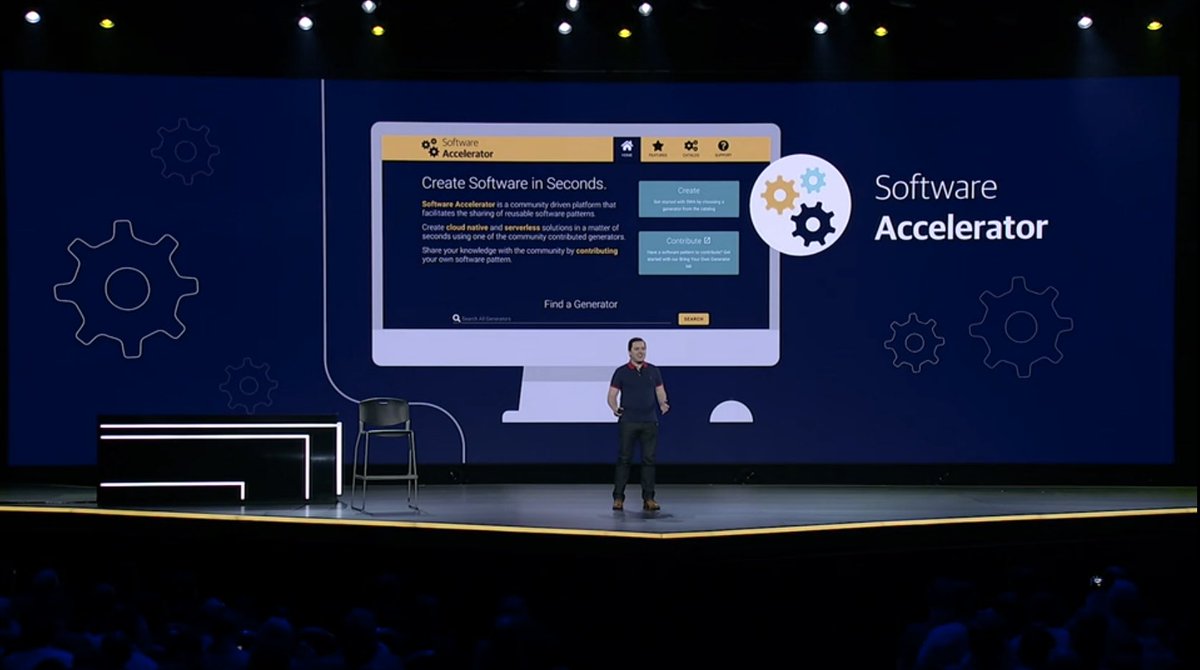

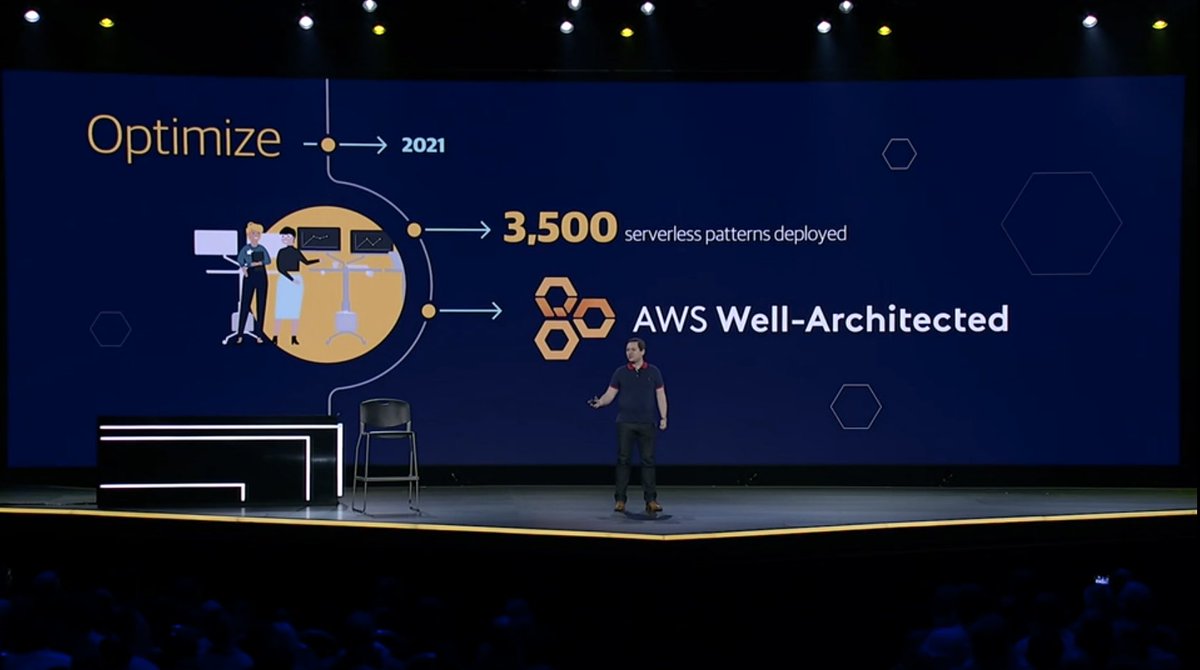

As the Vice President, Cloud Research at Trend Micro, I had a mandate to educate others about cloud security and enough leeway to experiment with how I went about it.

We had a fantastic communications team who were eager to try out new platforms and new approaches. With streaming and podcasting really starting to take off, we launched a new episodic show, "Let's Talk Cloud".

Right out of the gate, we knew this was going to be a learning experience for us. We kept the show simple to start with. The first show was a discussion between myself and two of our technical leaders in the field, Jeff Westphal and Fernando Cardoso.

Jeff called in from an event where he was presenting and Fernando was in one of the Trend offices. It was a very scrappy setup, but it worked. The conversation flowed well and we were able to draw in a modest live audience.

For the remaining 5 episodes in the first season, we stayed within the Trend Micro family when recruiting guests. This made it a bit easier to justify the rough edges that we were still smoothing down.

By the end of the first season, we had a reasonably smooth-running show that was gaining a lot of traction. The view numbers were nice, but what was more important was how often someone (a customer, a colleague, or a random stranger) would tell me how they had watched an episode and it got them thinking.

For the next season, we were a lot more ambitious in going after guests. We had high profile guests like Forrest Brazeal, Patrick Debois, and Tanya Janca.

Sadly, I moved on from Trend Micro before I was able to film another season. However, our work on this show kicked off an ongoing series for the company. Next up was Let's Talk Security hosted by Rik Ferguson and then #TrendTalksBizSec and #TrendTalksThreats.

All episodes

Season 1

- 04-Nov-2019 #LetsTalkCloud: Real World Problems

- 12-Nov-2019 #LetsTalkCloud: Misconfigurations & Scale

- 19-Nov-2019 #LetsTalkCloud: Containers v1.1 ;-)

- 26-Nov-2019 #LetsTalkCloud: Open Source Risks

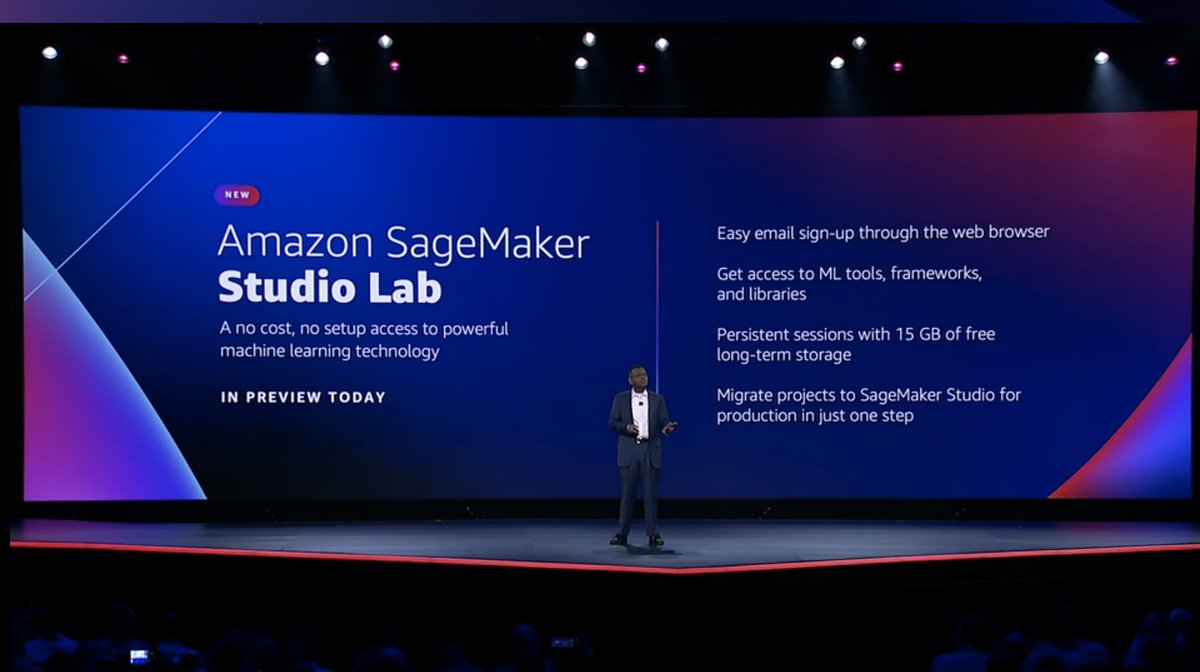

- 02-Dec-2019 #LetsTalkCloud: AWS re:Invent 2019 Kick-Off

- 10-Dec-2019 #LetsTalkCloud: AWS re:Invent 2019 re:Cap

- 10-Dec-2019 Let's Talk Cloud - Season 1

Season 2

- 24-Mar-2020 #LetsTalkCloud: We're Back

- 31-Mar-2020 #LetsTalkCloud: Transformations In The Cloud

- 07-Apr-2020 #LetsTalkCloud: Finding Security

- 14-Apr-2020 #LetsTalkCloud: The Security of Software

- 21-Apr-2020 #LetsTalkCloud: Executives vs. Engineers

- 28-Apr-2020 #LetsTalkCloud: The Unicorn Project Principles

- 28-Apr-2020 Let's Talk Cloud - Season 2

Going back through the archives of "Mornings with Mark" has been quite the experience. I've been both fascinated and a little horrified (the hair, the look, the production…yikes) re-watching some of those nearly 200 episodes.

It's interesting to remember that back then (2018–2019), a regular, dedicated vlog focused on cybersecurity and privacy on social media was pretty rare.

"Mornings with Mark" was really a space for me to explore my thoughts on these crucial topics and share some of what I was learning while traveling and teaching cybersecurity. It was also a bit of an experiment with social media and video platforms.

I ended up regularly multi-streaming to LinkedIn (where I was part of the streaming beta program), Twitter, and YouTube. Social media was very different in 2018 and the consistency of the vlog helped grow the audience over time.

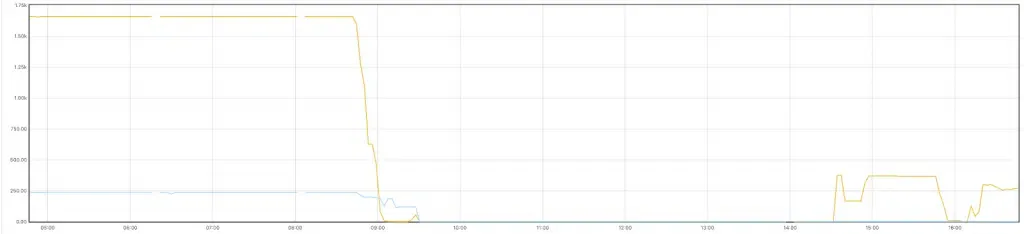

Over its run, the show averaged 250 live viewers and then another 1,000 on-demand within that week. Those numbers may seem modest, but to have that type of reach for such a simple and raw vlog was really touching.

It’s nice to know that I was able to help that many people understand security and privacy just a little bit better.

All episodes

Feb/2018—12 episodes

- 12-Feb-2018 Perspectives

- 13-Feb-2018 Pyeongchang 2018 Olympic Games Hack

- 14-Feb-2018 Risk Assessments & The Risk Of No Data

- 15-Feb-2018 Blockchain For Identities

- 16-Feb-2018 Apple iOS Messenger App Crash

- 20-Feb-2018 Voice Interfaces

- 21-Feb-2018 DevOps Overload

- 22-Feb-2018 Workflow, Passwords, and More

- 23-Feb-2018 Passwords, Educating Users, and the Communal Good

- 26-Feb-2018 Cybersecurity In & Of Canada

- 27-Feb-2018 Apple iOS 11 Security

- 28-Feb-2018 New Website

Mar/2018—15 episodes

- 01-Mar-2018 Secure Systems Thinking

- 02-Mar-2018 DDoS Attacks & Community Responsibility

- 13-Mar-2018 SXSW Audience Level

- 15-Mar-2018 Nervous For SXSW

- 15-Mar-2018 Rizenfall And Needless Hype

- 16-Mar-2018 CPUs, ICOs, and Blockchains

- 19-Mar-2018 Facebook Data Misuse And Social Network Responsibility

- 20-Mar-2018 Organizational Design and OT Risk

- 22-Mar-2018 Privacy At Scale

- 23-Mar-2018 One Billion Attacks Per Day

- 26-Mar-2018 Facebook Data Downloads

- 27-Mar-2018 Working With Data

- 28-Mar-2018 Changing Perspectives & The Unraveling Of Online Tracking

- 28-Mar-2018 Facebook Data Mining & The Long Weekend Round-up

- 29-Mar-2018 Ubiquitous Digital Tracking

Apr/2018—13 episodes

- 05-Apr-2018 AWS San Francisco Summit 2018 Recap

- 06-Apr-2018 Video Streaming Options

- 11-Apr-2018 Privacy And Security vs. Usability

- 12-Apr-2018 Splitting Hairs With Facebook Testimony

- 13-Apr-2018 iOS Graykey And Going Dark

- 16-Apr-2018 Fear Uncertainty And Doubt

- 18-Apr-2018 Blocking IP Addresses

- 20-Apr-2018 The Security Team's Role In Your Org

- 23-Apr-2018 The Canadian Criminal Code on Hacking

- 24-Apr-2018 Live Streaming and Needless Complexity

- 25-Apr-2018 Poor Naming Choice For Gmail Redesign

- 26-Apr-2018 Your Role as a Security Educator

- 27-Apr-2018 The Hallway Track

May/2018—18 episodes

- 01-May-2018 F8 & The Future Of Facebook

- 03-May-2018 The F8 Fallout

- 04-May-2018 F**king Passwords

- 07-May-2018 Getting Started In Cybersecurity In A Positive Direction

- 08-May-2018 A.I.'s Security & Privacy Impact

- 09-May-2018 A.I. Amok

- 10-May-2018 What You Need To Get Started In Cybersecurity

- 11-May-2018 Making A Break To Start Your Cybersecurity Career

- 15-May-2018 Ethics In Technology & Security

- 18-May-2018 Being Transparent With User Data

- 18-May-2018 Listening To Customers

- 22-May-2018 3, 2, 1, GDPR

- 23-May-2018 Encryption Law Enforcement And Transparency

- 25-May-2018 🇪🇺 GDPR Day!

- 26-May-2018 Data Management & GDPR

- 28-May-2018 OpSec, Soft Skills, And People

- 28-May-2018 University for Cybersecurity

- 30-May-2018 Information Security vs. Cybersecurity

Jun/2018—17 episodes

- 01-Jun-2018 What's In A Name?

- 01-Jun-2018 Why Can't Security Play Nice With Others?

- 04-Jun-2018 Transparency & Backpedaling

- 05-Jun-2018 Developer Workflow 101

- 07-Jun-2018 Apple, WWDC, and Your Privacy

- 11-Jun-2018 Net Neutrality

- 12-Jun-2018 Cryptocurrency & High Value Targets

- 13-Jun-2018 Google In Schools

- 14-Jun-2018 Apple, Graylock, And Context

- 15-Jun-2018 Getting Started In Cybersecurity & Perspective

- 18-Jun-2018 Ethics In Technology And Cybersecurity

- 19-Jun-2018 Ethics And Action In Technology

- 21-Jun-2018 Culture Change Is Hard

- 25-Jun-2018 Tanacon, Security, and Lack of a Threat Model

- 26-Jun-2018 Don't Trust The Network

- 27-Jun-2018 Security Thinking Is Service Design Thinking

- 28-Jun-2018 Working Together To Improve Security

Jul/2018—14 episodes

- 09-Jul-2018 Fortnite, UI Patterns, and Desired Behaviours

- 10-Jul-2018 🧠 A.I. In Context

- 11-Jul-2018 Cybersecurity: Getting Past HR

- 12-Jul-2018 Document, Automate, Repeat

- 16-Jul-2018 Ignorance & Risk

- 19-Jul-2018 Balance & Burnout

- 20-Jul-2018 Remote Work, Cubes, & Everything In Between

- 23-Jul-2018 Getting Started In Security: Post Certification

- 24-Jul-2018 Assumptions & Outdated Mental Models

- 25-Jul-2018 Constant Negative Pressure

- 26-Jul-2018 Security Keys, UX, & Reasonable Choices

- 27-Jul-2018 HR Challenges & Getting Your First Security Role

- 30-Jul-2018 Discussions At Scale

- 31-Jul-2018 Toxicity & Security's Responsibility

Aug/2018—11 episodes

- 01-Aug-2018 Learning From Failure

- 02-Aug-2018 Easy To Use Tools

- 08-Aug-2018 Operational Security

- 10-Aug-2018 The Basics

- 20-Aug-2018 Recharged, Reset, & Rocking

- 21-Aug-2018 Cybersecurity Basics #1 - The Goal

- 22-Aug-2018 Cybersecurity Basics #2 - Vulnerabilities, Exploits, and Threats

- 23-Aug-2018 Cybersecurity Basics #3 - Passwords

- 27-Aug-2018 Cybersecurity Basics #4 - Perspective

- 28-Aug-2018 Cybersecurity Basics #5 - Encryption

- 29-Aug-2018 Cybersecurity Basics #6 - Malware

Sep/2018—13 episodes

- 04-Sep-2018 Cybersecurity Basics #7 - Hackers & Cybercriminals

- 05-Sep-2018 Cybersecurity Basics #8 - Authentication, Authorization, & Need To Know

- 06-Sep-2018 Cybersecurity Basics #9 - Attack Attribution

- 07-Sep-2018 Cybersecurity Basics #10 - Personally Identifiable Information

- 12-Sep-2018 Cybersecurity Basics #11 - Risk Assessments & Pen Tests

- 13-Sep-2018 Cybersecurity Basics #11a - Risk Assessments Redux

- 14-Sep-2018 Cybersecurity Basics #12 - Bolt-on vs Built-in

- 17-Sep-2018 The Basic Basics

- 18-Sep-2018 Security Is A Quality Issue

- 21-Sep-2018 What Do You Look To Get Out Of Conferences?

- 26-Sep-2018 Amazon Alexa Everywhere

- 27-Sep-2018 End-to-end Encryption & WhatsApp

- 28-Sep-2018 Facebook, Shadow Profiles, & Data Brokers

Oct/2018—19 episodes

- 01-Oct-2018 50 Million Facebook Accounts Hacked?!?

- 02-Oct-2018 National Cybersecurity Awareness Month

- 03-Oct-2018 How To Deliver Tough News

- 04-Oct-2018 Following Up On Tough News

- 05-Oct-2018 Bloomberg, Supermicro, and Hardware Supply Chain Attacks

- 09-Oct-2018 Evidence, Accusations, and Motivation

- 11-Oct-2018 Google+ & Infrastructure Monitoring

- 12-Oct-2018 Facebook...ugh...%$&#ing, Facebook

- 15-Oct-2018 Communicating FOR Your Audience

- 16-Oct-2018 Virtual Experiences & Content Delivery

- 17-Oct-2018 DRUGS!!! and IT Risk and Graphs

- 18-Oct-2018 Being An Educated Social Media User

- 19-Oct-2018 The War Room

- 22-Oct-2018 User Experience Is Critical

- 23-Oct-2018 Keep Decisions Up To Date

- 25-Oct-2018 Building On Fragile Layers

- 26-Oct-2018 Building On Trust

- 30-Oct-2018 Refreshing Your Perspective

- 31-Oct-2018 Automating Your Job

Nov/2018—9 episodes

- 01-Nov-2018 Know Your Audience

- 02-Nov-2018 Master Your Tools

- 05-Nov-2018 Politics & Attack Attribution

- 06-Nov-2018 The Internet Is Forever

- 07-Nov-2018 Optimize Your Tools

- 08-Nov-2018 You Can't Blame 'Em

- 09-Nov-2018 Signals And The Data Explosion

- 19-Nov-2018 Preparation Is Key

- 20-Nov-2018 Communication At Scale

Dec/2018—8 episodes

- 05-Dec-2018 Delivering Information With Context

- 06-Dec-2018 Australia, Huawei, Apple, and the Government of Canada

- 07-Dec-2018 Fortnite, A Service Delivery Example

- 10-Dec-2018 Security Metrics 🗑🔥

- 11-Dec-2018 Law and The Internet

- 14-Dec-2018 Unexpected Lessons

- 17-Dec-2018 On The Importance Of Names

- 19-Dec-2018 Squad Goals

Jan/2019—8 episodes

- 08-Jan-2019 Setting Up 2019

- 10-Jan-2019 Tracking Smartphone Data

- 15-Jan-2019 Konmari Your Data

- 17-Jan-2019 773M Credentials

- 22-Jan-2019 Zero vs. Lean Trust

- 24-Jan-2019 Facebook's 10 Year Challenge

- 29-Jan-2019 GDPR Intentions

- 31-Jan-2019 Facebook & The Value of Privacy

Feb/2019—8 episodes

- 05-Feb-2019 Cryptocurrencies & Cybercrime

- 07-Feb-2019 Cybersecurity Research Consequences

- 12-Feb-2019 Canadian Election Cybersecurity

- 14-Feb-2019 Terms of Service

- 19-Feb-2019 DNS Hijacking

- 21-Feb-2019 Your Child's Digital Identity

- 26-Feb-2019 Secret App Telemetry

- 28-Feb-2019 Warrant Canaries

Mar/2019—5 episodes

- 07-Mar-2019 The Cybersecurity Industry

- 12-Mar-2019 Services & Privacy Perceptions

- 14-Mar-2019 Cloud Costs & Security

- 19-Mar-2019 Cybersecurity Needs Coders

- 21-Mar-2019 Stadia & Secure Access Design

Apr/2019—9 episodes

- 02-Apr-2019 Exposing Secrets In Code

- 04-Apr-2019 Cybersecurity & Technical Debt

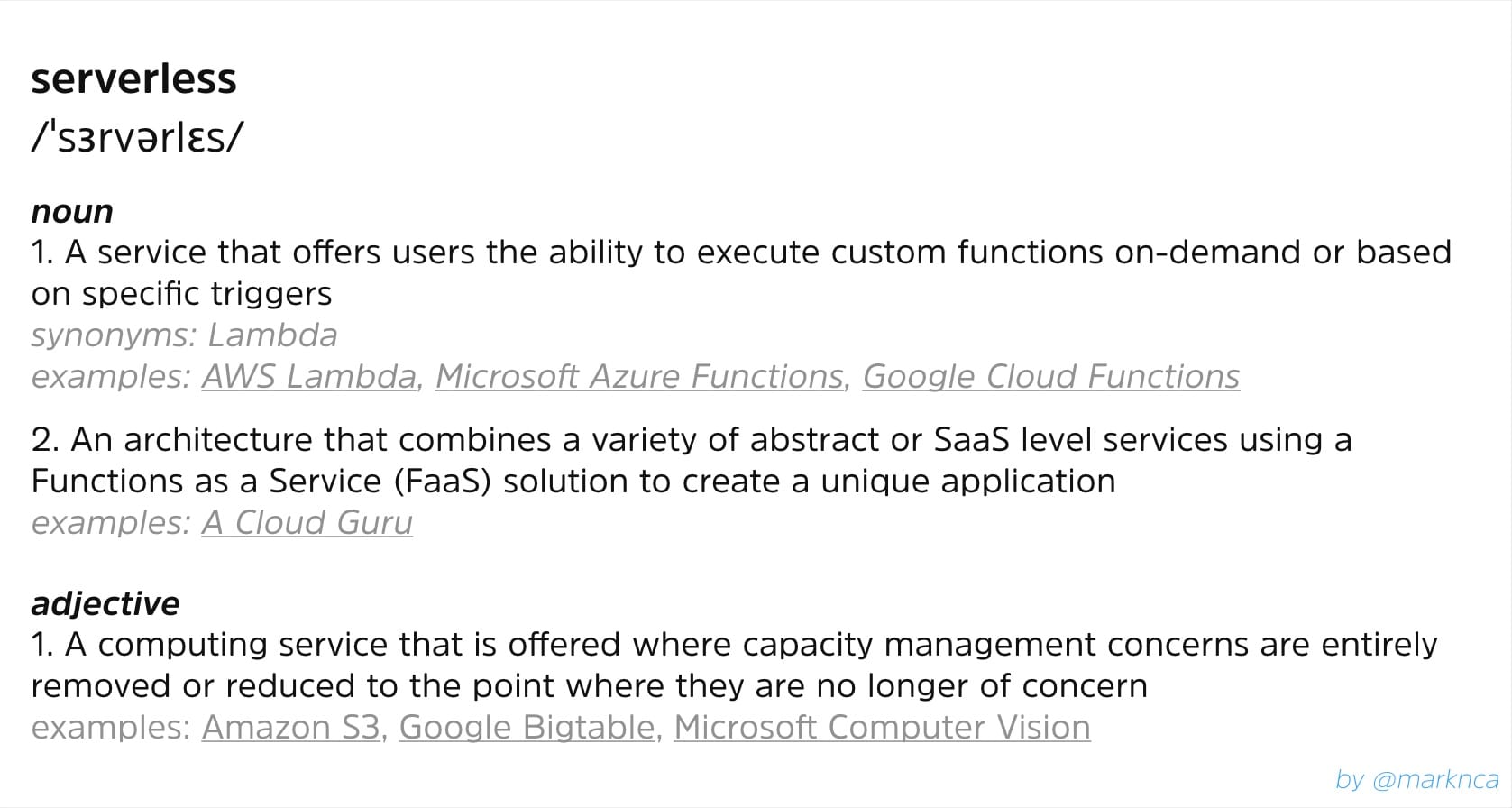

- 09-Apr-2019 Serverless Is An Ops Model

- 11-Apr-2019 Perfectionism In Tech

- 16-Apr-2019 Metadata Trails

- 18-Apr-2019 Facebook's Security Fail

- 23-Apr-2019 Facial Recognition Consent

- 26-Apr-2019 Cybersecurity Time Crunch

- 30-Apr-2019 James Harden & Cybersecurity Policy

May/2019—6 episodes

- 01-May-2019 Facebook's F8 & Information Management

- 07-May-2019 Borders & Cybersecurity

- 09-May-2019 Porn & Digital Identity

- 21-May-2019 Huawei, Android, and Cybersecurity

- 23-May-2019 Nest, IoT, and Your Privacy

- 28-May-2019 Web Browser Privacy

Jun–Oct/2019—15 episodes

- 04-Jun-2019 Apple WWDC Privacy Update

- 05-Jun-2019 Cybersecurity Motivations

- 08-Jul-2019 Update On Mornings With Mark

- 09-Jul-2019 NBA Free Agency vs Security Policies

- 11-Jul-2019 Zoom.us & The Real Cybersecurity Problem

- 16-Jul-2019 10x Engineers

- 19-Jul-2019 FaceApp: Relax You're Just Old (Now)

- 12-Aug-2019 AppSec Is Dead

- 16-Aug-2019 NULL & Input Validation

- 21-Aug-2019 Privacy Expectations

- 26-Aug-2019 Business Email Compromise

- 05-Sep-2019 Cybersecurity Patching in Context

- 11-Sep-2019 Retargeting In Online Politics

- 26-Sep-2019 E-transfer Security

- 18-Oct-2019 Biometrics and Bugs

Walking past the display of Leafs memorabilia, I turned the corner, opened the door, and took a seat in the conference room tucked away in the Air Canada lounge. I chuckled at the framed magazine covers adorning the walls with a who’s-who of Canada. I set my scorchingly hot Tim Hortons tea on the desk and waited to join the province-wide broadcast on CBC Radio.

In that pause, I took a moment of self-reflection and giggled quietly, half expecting a Mountie on a moose or a Québécois lumberjack with a mountain of syrup-drenched pancakes to pass by.

It felt deeply Canadian. Yes, an unbelievable, absurd, comical amount of Canadian-ness compressed into one moment, but that didn’t diminish my enjoyment. The smile that spread across my face stayed with me the rest of the day.

First steps with the network

My first appearance on the network was specifically Canadian as well. In 2014, the CBC was looking for expert commentary on how the Canada Revenue Agency (CRA) was responding to Heartbleed, a serious, widespread software vulnerability.

Having already started to appear in the media semi-regularly the previous year, I was a good fit for the article with my decade of experience in the Canadian public service. My commentary appeared alongside the director of the Canadian Internet Policy and Public Interest Clinic (CIPPIC) and Dr. Christopher Parsons from—at that time—the Citizen Lab.

I was humbled that my commentary was featured alongside such prominent experts in the field, experts whose work I read regularly then and still do!

That piece really sparked a passion in me. I enjoyed doing the analysis and offering a pragmatic voice on technology issues. A voice that I hoped—and still hope—helps to balance out other voices in the field.

Even then, I knew that my opinions often run counter to the louder voices that can grab the headlines. I’m ok with that. I’d rather go on the record saying something I believe in, something that I can stand behind even a decade later.

I’m also ok being that pragmatic voice. It’s not as flashy, but I believe that it can deliver more nuance and help make complex issues accessible to everyone.

Off and running

Over the next 8 years, I would appear more frequently on various CBC properties. From St. John's to Victoria, I always tried to make time to support CBC journalists and hosts who were looking to help Canadians understand what was going on in the world of technology.

I was thrilled when things started to snowball as my comments were published more frequently. This led to a regular spot on TV, appearing on The Exchange with Peter Armstrong. I also covered issues for the CBC News at 6 in cities across the country and was featured in segments on the CBC News Network channel.

Easier—logistically at least—were the radio segments. I've always been an early bird, so when I delivered a couple morning drive-time segments, I started to get called more frequently. I get it, there's not a lot of folks willing to try and distill complicated issues into something easily understandable before 8 am.

CBC Ottawa Morning

Those early morning segments led to a regular radio column on CBC Ottawa Morning. Once every couple of weeks, I would chat with the host for 6-8 minutes and summarize the news of the moment and try to contextualize it for the audience of 100,000+.

I absolutely loved the challenge of it and got a lot of joy out of helping folks in the region to better understand specific issues.

The process was pretty straightforward. Sometimes the show would reach out the day before and ask if I could talk about a news story. Other times, I would reach out and suggest a topic flying a bit under the radar.

We'd agree on a topic and I would do an initial brief to help the show's researchers start to dig in to prepare the host for the discussion. After that, I would conduct my own research and start to outline the key areas of the issue, its larger context, and try to highlight a few hooks that would help it all land.

I'd circle back to the show with a couple of bullet points to help point the conversation in a productive direction and that was really it for formal preparation. I'd make sure to study my notes and go over key points so that the conversation could flow smoothly while still being informative.

It was great practice for a workflow that continues to help me daily. Being able to identify a topic of interest and then quickly map the landscape around it has been a game changer for me.

This workflow not only satisfies my natural curiosity, but it helps me to consistently contribute to my team and my community.

Eight years of teaching and learning

From 2014 to 2022, I made over 100 appearances on air and in print for the CBC. Each and every time, I tried to help Canadians better understand how technology impacted their lives and communities.

Looking back, I can see how I’ve grown as a communicator. I started out with safer commentary, like a Timbits player taking the field for the first time. With practice, I’ve become more confident expressing my opinions and I’ve found my voice. I moved from just stating facts to crafting explanations that break down complicated issues into simpler, relatable analogies to help everyone understand.

I’ve learned the value of consistently coming back to a topic over and over again. Just because I may be a little tired of talking about security and privacy fundamentals doesn’t mean everyone is. It’s the patient repetition, the calm explanation of the key issues, that truly reaches people.

Technology is complicated. There’s no getting around that. People are hungry to understand the questions technology raises and the questions it helps to answer.

Like that Air Canada lounge steeped in Canadiana, sometimes you need to go above and beyond to get the point across. For me as a security communicator, that means finding the hook inside the story that builds a bridge for the wider audience.

I loved my time on the CBC. It helped me grow as a communicator and touched on a nostalgia I didn't fully appreciate.

Various appearances, 2014—2022

CBC regularly archives content from their site. Here are a few articles, videos, and radio segments that are still available to the public.

- Oct/2018 Are classroom apps good for your kids — or simply 'surveillance'? | CBC News

- Jan/2018 If you're going to blame a cyberattack on North Korea, you'd better show your work | CBC News

- Oct/2017 Shopify defence | CBC.ca

- Sep/2017 Equifax breach provokes frustration for Canadians | CBC News

- May/2017 Facebook hack stalls dog rescue work | CBC News

- Mar/2017 YouTube boycott over offensive content | CBC.ca

- Mar/2017 Ads on Google | CBC.ca

- Oct/2016 Samsung phones on fire | CBC.ca

- Jul/2016 Phoenix pay system to blame for twice breaching public servants' private data, says deputy minister | CBC News

- Jun/2016 CBC News: Ottawa June 08, 2016 | CBC.ca

- Jun/2016 Dog charity pays hackers ransom to retrieve computer files | CBC News

- Nov/2015 The Exchange | CBC.ca

- Sep/2014 Home Depot offers credit monitoring amid card breach worries | CBC News

- Jun/2014 Bitcoin has a future, but maybe not as a currency | CBC News

- May/2014 Watch Dogs: Ubisoft game spotlights hacking, privacy concerns | CBC News

- Apr/2014 Baloney Meter: Are there discrepancies in the CRA's Heartbleed timeline? | CBC News

Research notes

Here is a sampling of reference notes and materials that I prepared for various segments over the years. These focus on the last few years when I was active with the CBC.

I've archived them here on the site for my own memory, but also to show some of the behind the scenes process that goes into doing a regular technology column on a show.

- Dec/2022 ChatGPT Delivers Ideas and Answers on Demand, If You Know How To Ask

- Nov/2022 Mastodon's Promising Federated Approach Will Frustrate You More Than Twitter

- Oct/2022 Has the EU Finally Made the U in USB-C Actually Stand for Universal?

- Aug/2022 Why is it so hard to law enforcement to track down harassers?

- Aug/2022 Canadians Are Reliant on Rogers Whether We Like It or Not

- Jun/2022 Is Google LaMDA Sentient?

- Apr/2022 Twitter To Add Edit Button...Finally

- Jan/2022 Despite 5G’s Capabilities, Mobile Providers Can’t Connect With Airline Industry

- Oct/2021 Facebook Sets Out To Build The Multiverse...and Hopes To Hide There

- Oct/2021 Lessons in Designing Blast Radius The Hard Way; One Mistake Crashes Facebook For Hours

- Sep/2021 Instagram delays launch of app for kids

- Jun/2021 Apple vs. Facebook Battling For Your Privacy

- Feb/2021 Clubhouse's Entirely Predictable Privacy and Moderation Issues

- Jan/2021 Major Ransomware Services Busted

- Jan/2021 Parler Pas: Fringe Social Network Offline

- Dec/2020 Politicians Playing Among Us

- Sep/2020 How AI Could Help Ease Your Zoom Fatigue

- Aug/2020 Legacy Authentication Risks

- Jul/2020 Should I Worry About TikTok?

- May/2020 The New Office: Home?

- Apr/2020 Stop Drowning Online During Isolation

- Apr/2020 Contact Tracing via Smartphones

- Feb/2020 Smartphone Addiction

- Jan/2020 Privacy at CES 2020

- Jan/2020 New Rules for Youtube

- Dec/2019 Digital ID in Canada

- Nov/2019 Protecting Yourself Black Friday Scams Online

- Nov/2019 Data Retention in Canada

- Nov/2019 Catching Distracted Drivers With Technology

- Sep/2018 Family Locator Apps

- Aug/2018 VPNs

- Aug/2018 G Suite for Education

- Aug/2018 3d Printing

- Jul/2018 Deep Fakes Was That Real

- Jul/2018 Smartphone Addiction Intended Consequence

- Jul/2018 Facial Recognition Discussion Required

- Jul/2018 Fortnite a Good Example

- Jul/2018 Google Duplex Are We Ready

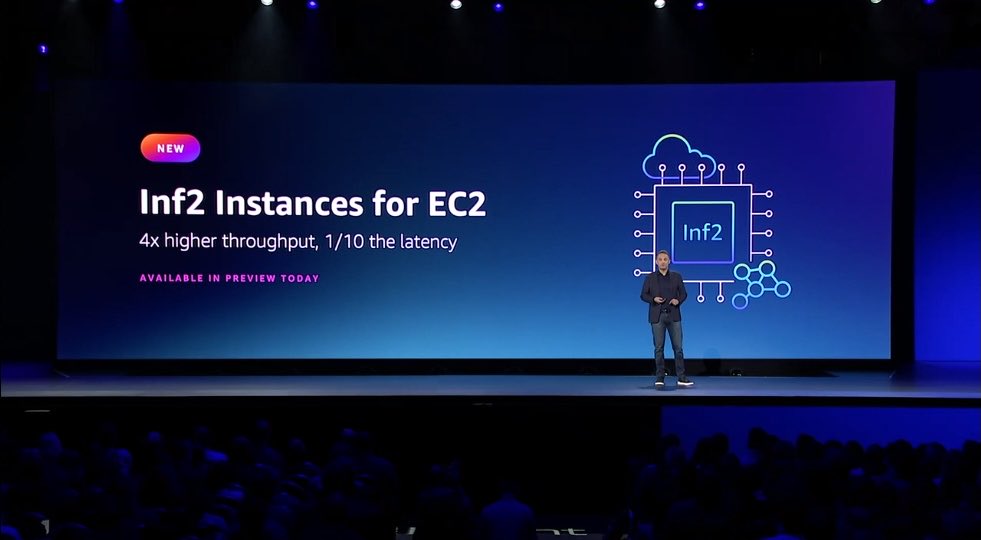

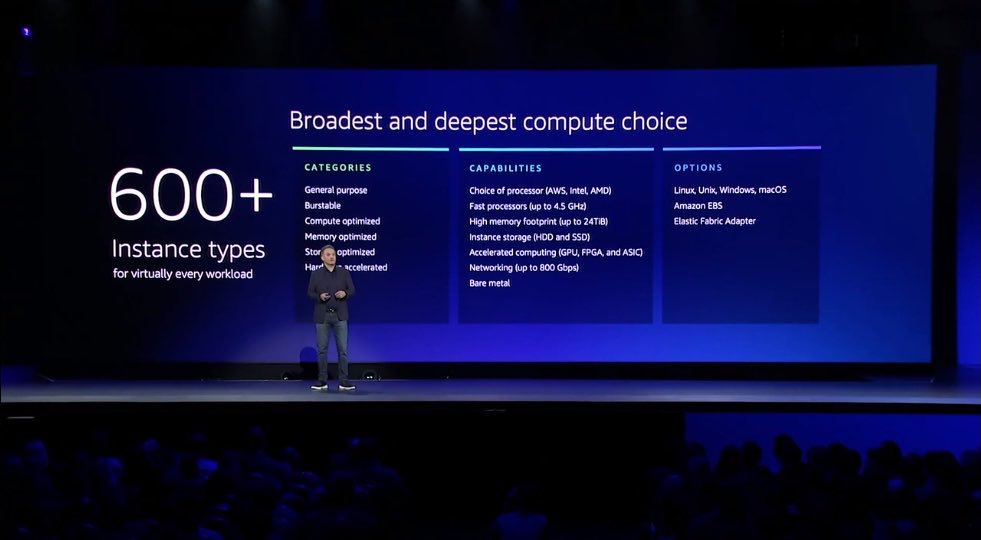

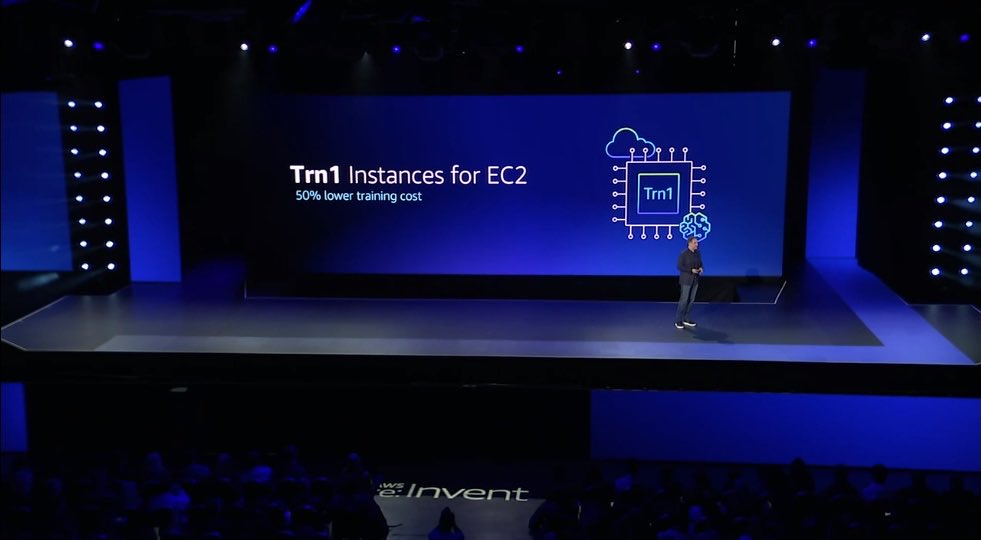

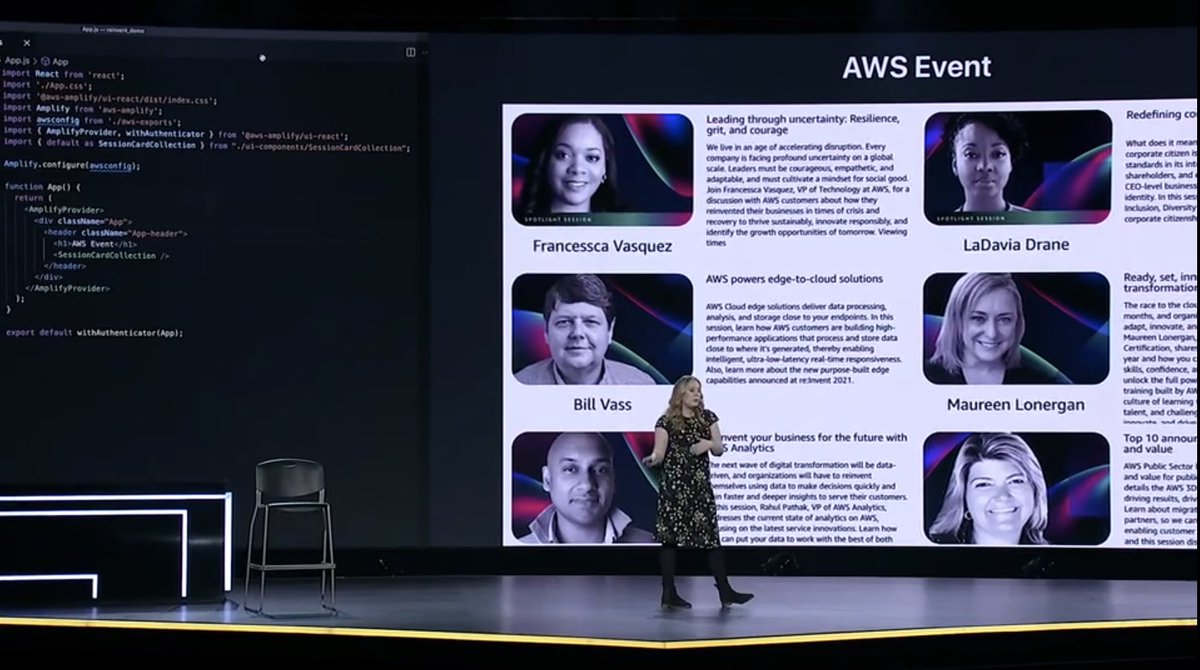

In the fall of 2012, 5,000 people gathered in Las Vegas for the first AWS re:Invent. I was there and spent almost all of my time with my laptop open, surrounded by other builders, working together to try out new techniques and tricks that we were sharing with each other.

That spirit of community was infectious. After the event, a lot of the connections we made shifted online. And year after year, I would see those friendly faces at various events around the world and we all did our best to make it back to Las Vegas in November for the biggest conference in cloud.

Monstrous growth

re:Invent grew almost too big. Every year it would expand to move venues and add more sessions. The event was scaled back in the pandemic, with the 2020 edition moving entirely online.

As the world has moved through the pandemic, the conference has grown back to its previous size and beyond. Almost 60,000 people attended the 2024 event.

It's at the point now where I don't think the hours in the day will permit anything more to be jammed into the week...though I'm sure I'll be surprised.

What should I do?

I've participated in every edition of the conference. As a builder from the start, an AWS Community Hero for ~6 years, and now as an Amazonian. During the period of rapid growth, I started writing an annual guide to the conference.

It started simply enough. I was trying to remind myself how to prepare for a physically and mentally exhausting week. I love attending this show, seeing my friends, making new ones, and learning a ton. But, it can take a lot out of you.

I started to experiment with how I approached the conference. I figured out little tricks that made my week easier. I genuinely wanted others to get the most out of the week too.

Eight times, I published my guide, starting in 2015:

- 5 Ways To Get The Most Out Of AWS re:Invent 2015

- 5 Ways To Get The Most Out Of AWS re:Invent 2016

- The Ultimate Guide to Your First AWS re:Invent (2017)

- The Ultimate Guide to AWS re:Invent 2018

- The Ultimate Guide to AWS re:Invent 2019

- The Ultimate Guide to AWS re:Invent 2020

- The Ultimate Guide to AWS re:Invent 2021

- The Ultimate Guide to AWS re:Invent 2022

Define 'ultimate'

You'll notice that the 3rd edition of the guide introduced the adjective, "ultimate". I debated whether or not to do this at the time.

It's a bold claim and I'm deeply uncomfortable drawing attention to myself.

However, that guide is also a 19-minute read. It's comprehensive to say the least. I think the "ultimate" description is accurate. The guides quickly became a months-long effort.

Not because they took that long to write, but information about the show changed in the lead up. AWS would announce the basics (where, when, etc.) and then add more details as they locked things in.

In addition to the level of details, the guides started to get a lot of attention. Each year the audience grew. People would reach out to me with great feedback and share how they had come across the guide and how it helped them.

All said, over the eight guides, more than 500,000 people read them. That's a crazy amount of people and in line with the majority of attendees.

Copycats?

While some companies did try to copy the guides, more simply wrote up their schedules and linked to my work. I really appreciated that and tried to keep things as neutral as possible.

The personal recommendation approach resonated with people. I'd like to think that it helped to seed the idea for the official AWS guides to the event. These guides were written by individuals in the community and helped a specific audience select sessions at re:Invent. I wrote the security guide for the first few times and I'm happy to see the effort continuing to this day.

Constant #protips

Looking back at the guides, there are a few tips that still hold up and probably always will:

- Wear a good pair of sneakers that you've already broken in

- Pack snacks

- Hydrate often

- Chap stick and hand cream—casinos are absurdly dry

- Plan ahead to eat at reasonable times

- Don't be shy–take advantage of being there in-person

- Have fun!

A fun show

The guides were a way for me to share my excitement for the show. I always feel an odd combination of exhaustion and exhilaration when I attend AWS re:Invent.

There is so much to learn. So many people to connect with. It's a great reminder of the unlimited possibilities that drew me into technology in the first place.

While I don't write the guides anymore, I'm happy I did. I'm even happier that I still get to attend re:Invent—and re:Inforce!—even if it's a little more stressful helping to deliver the show vs. trying to take it all in.

Most of all, I'm glad that I was able to contribute to the amazing cloud community in a meaningful way. I'm happy I still get to contribute and more than a little relieved that those contributions don't need 3+ months of work each year!

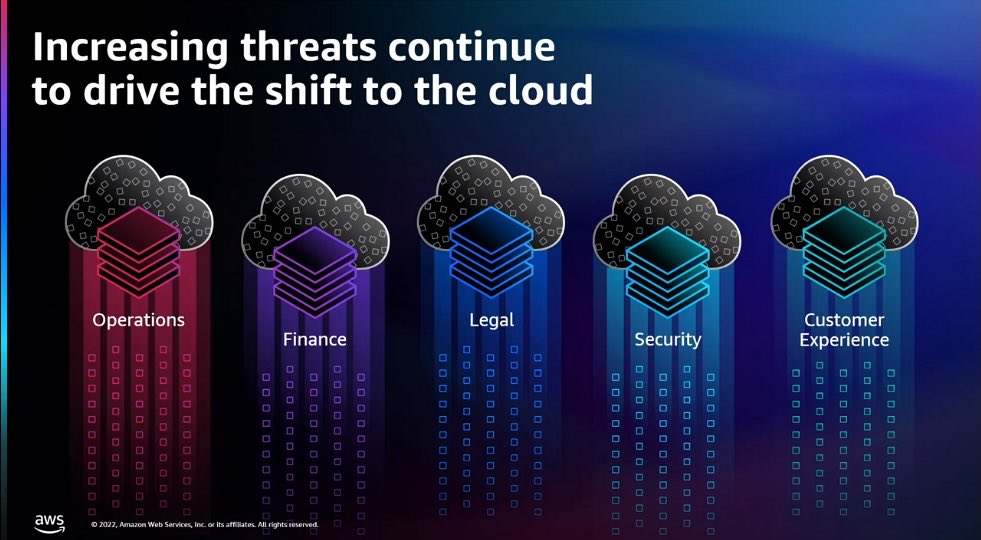

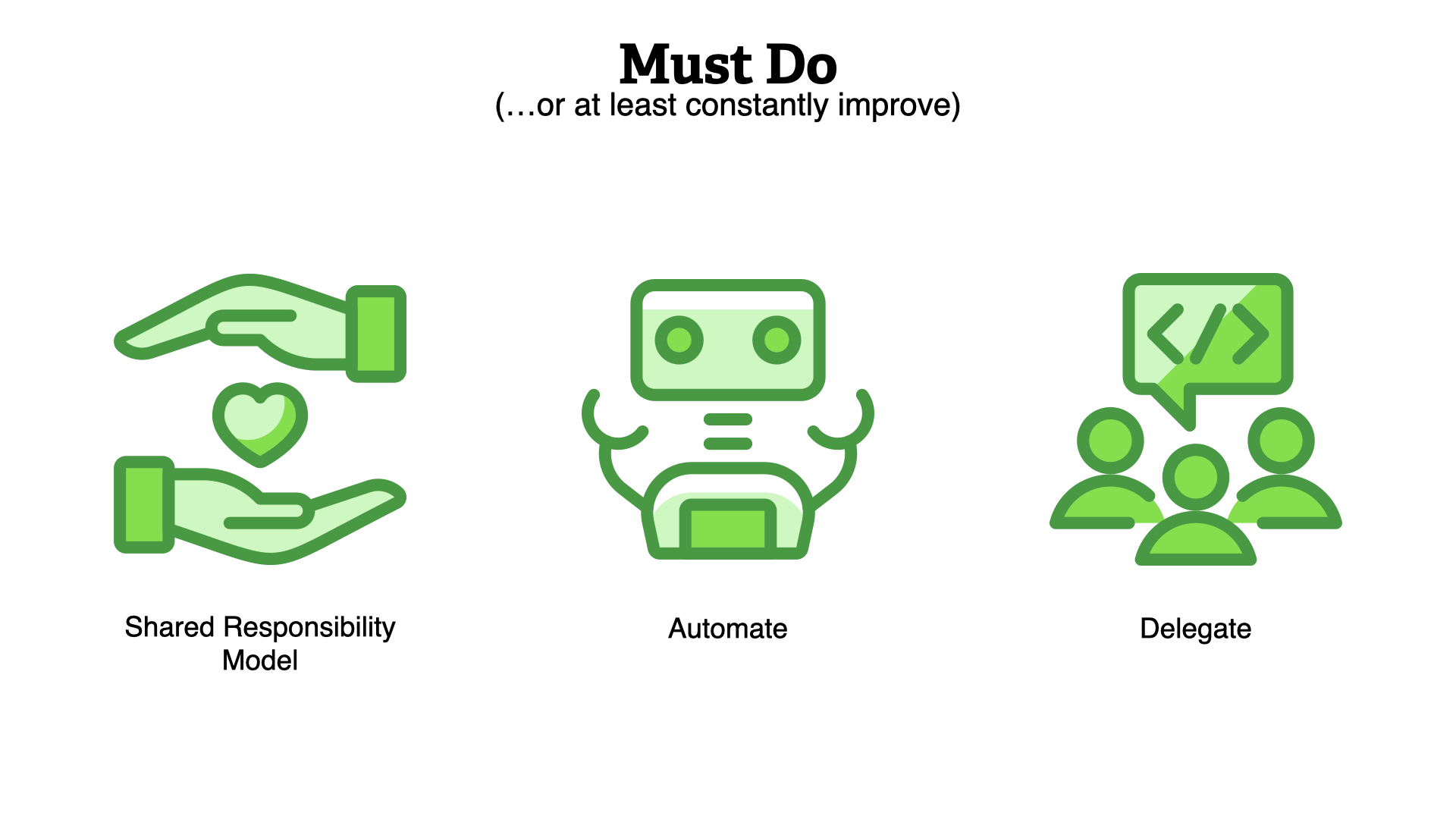

Security is everyone’s responsibility. How is that supposed to work? Our teams have struggled for a long time trying to move away from reactive work to planning and building for a more resilient future.

Is that shift even possible given our small teams and the never ending stream of issues to respond to? How can you scale your security practice in any meaningful way?

Security issues are often deeply technical and nuanced. Delegating work is a constant challenge and it feels like we’re explaining the same things over and over again. Security teams are stuck.

In this talk, we’ll dive deeper into the roles security teams play within most organizations. We’ll explore the common approaches to running a security practice, what works and what doesn’t.

Then, we’ll start to examine communication techniques that can have a positive impact. We’ll look at how you can shift your work from constant response to more impactful efforts by laying the groundwork for others to succeed.

You’ll walk away with a better understanding of the problem your team is facing and some small steps you can take now to enable other people within your organization to make better security decisions.

You are a dedicated security professional. You understand your area of expertise deeply and are working the best you can to help improve the security of your organization.

You're working on a team of like-minded individuals. While it can be challenging always facing threats and trying to help reduce risk, you generally work well together.

The challenge is that your team is accountable for the security of the organization.

But you work with a lot of teams in the rest of the business. Those teams are responsible for various business goals. They are working just as hard to meet those goals.

It can be hard to keep up.

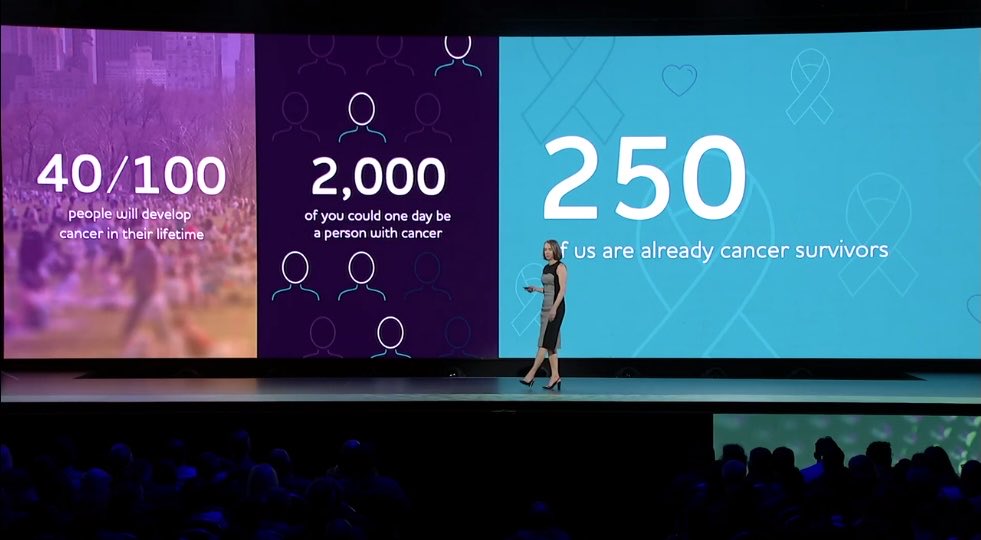

Why is it hard to keep up?

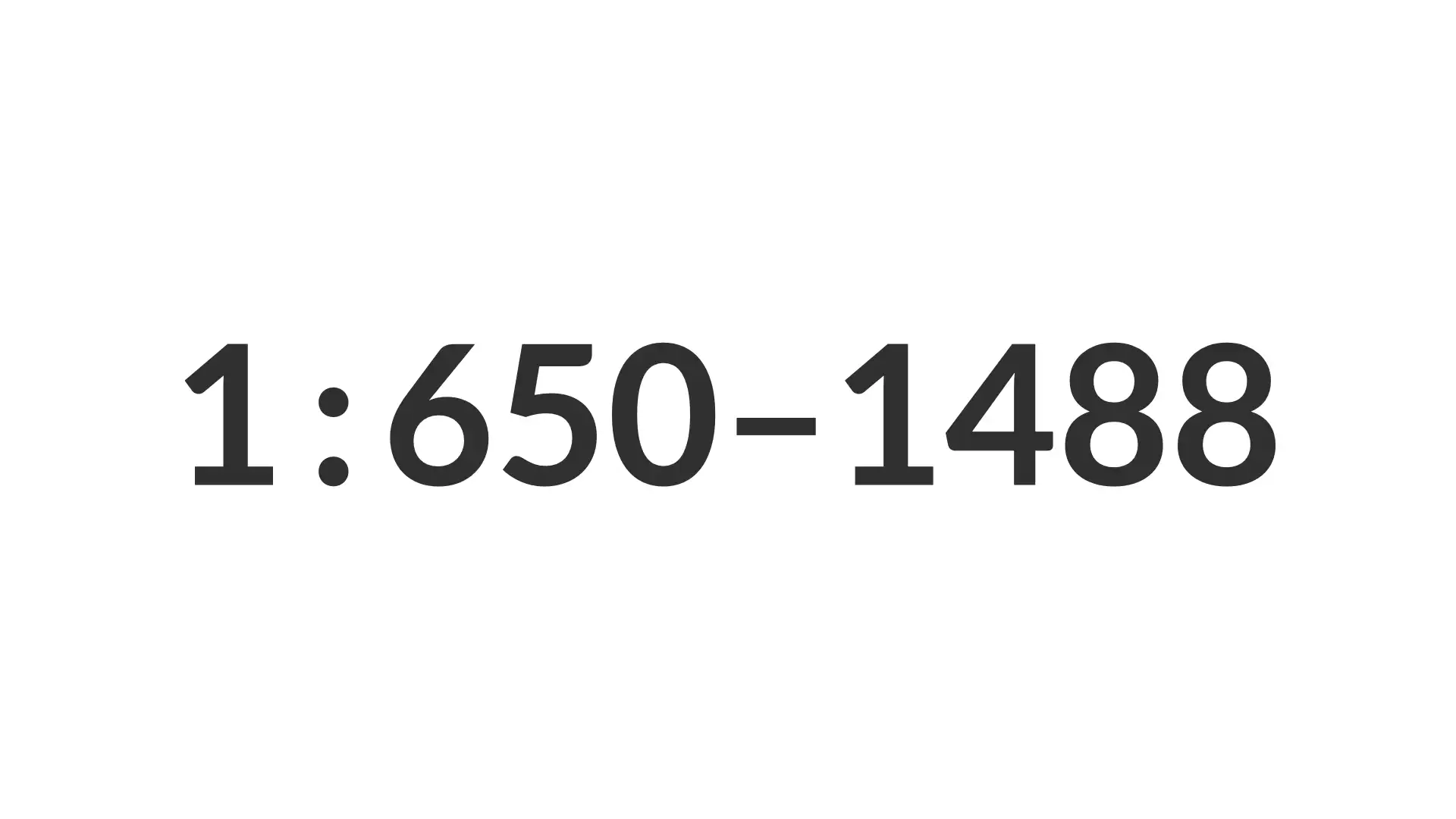

A few years ago, a couple of different analyst firms looked at the ratios of security professionals to the rest of the business.

They found that there was about one full-time security resource for anywhere from 650 to 1,488 other employees.

That's one person responsible for the tools, processes, and output of at least 650 others. Is that even possible?

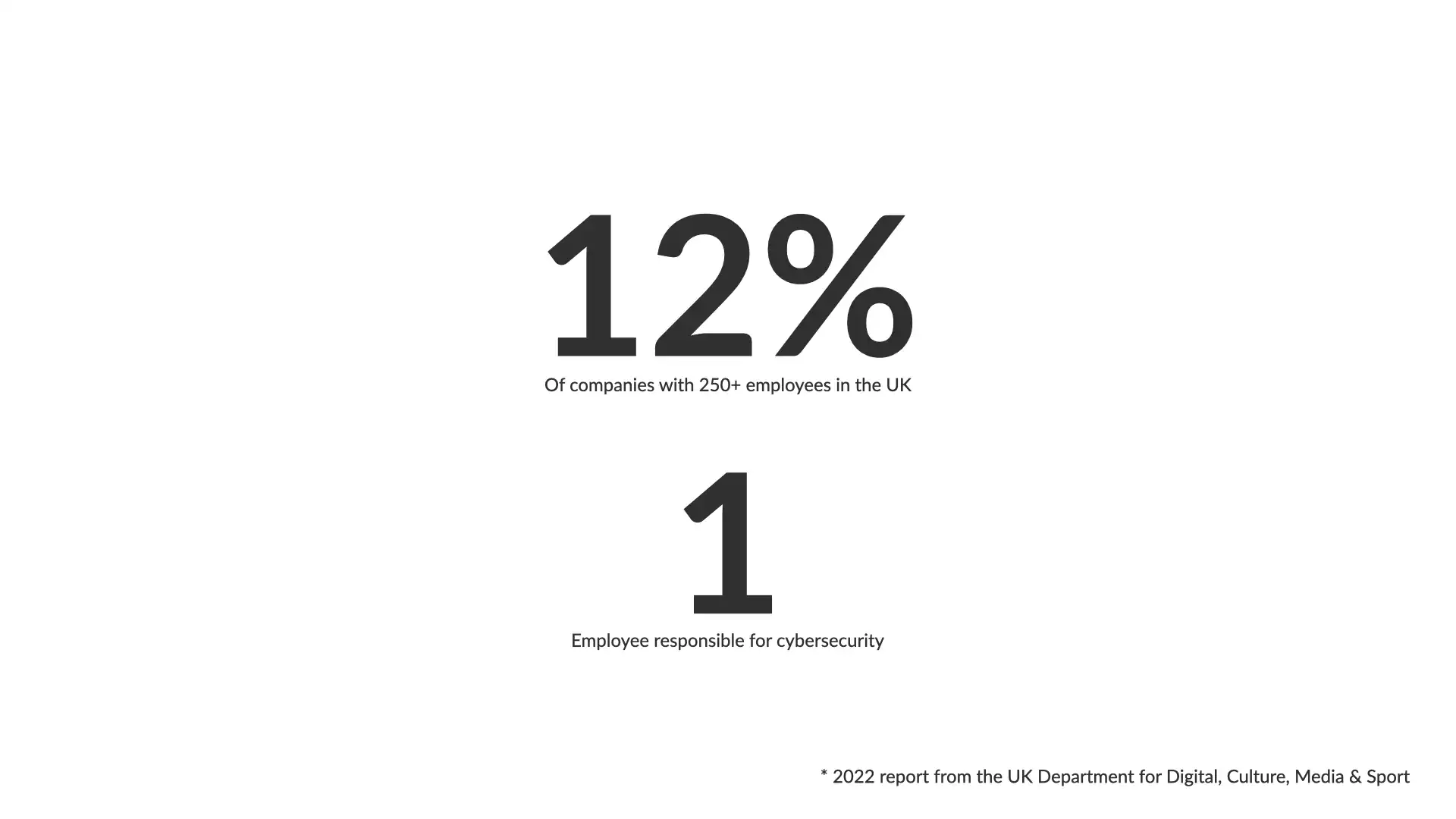

In 2022, a report from the UK Department for Digital, Culture, Media & Sport provided a similar metric.

They found that 12% of businesses with 250+ employees had 1 person responsible for cybersecurity...and that wasn't necessarily a full-time assignment.

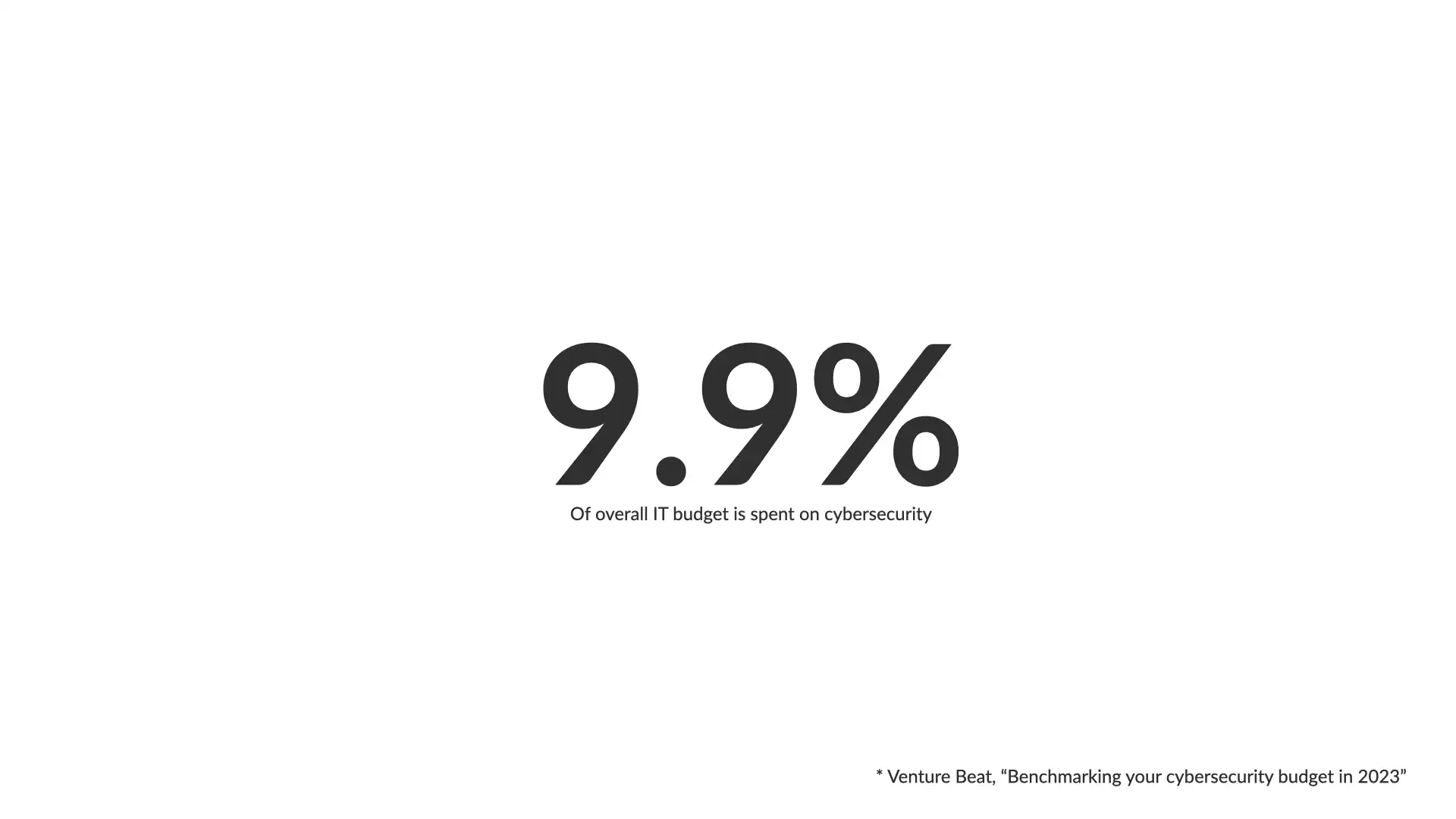

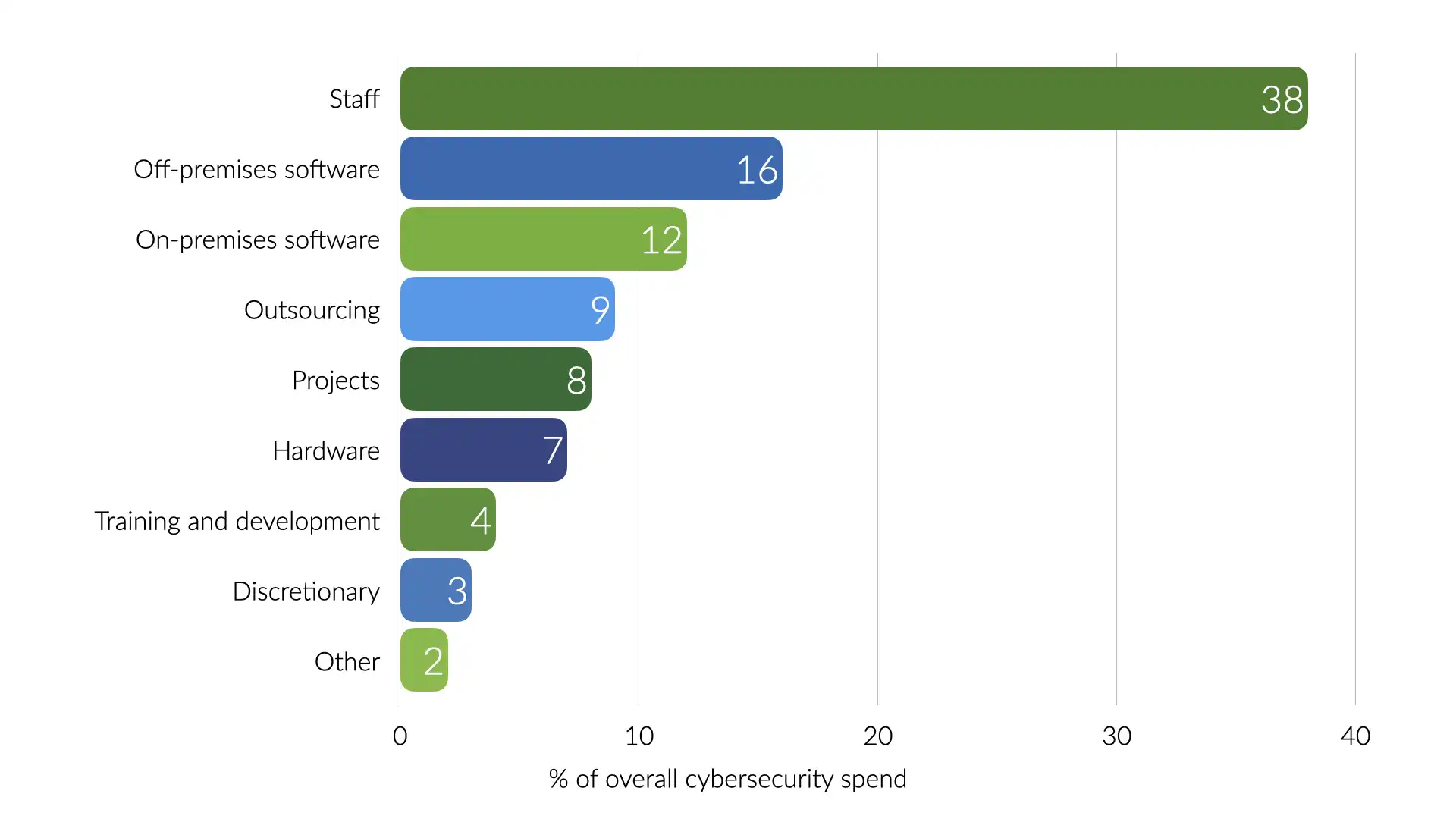

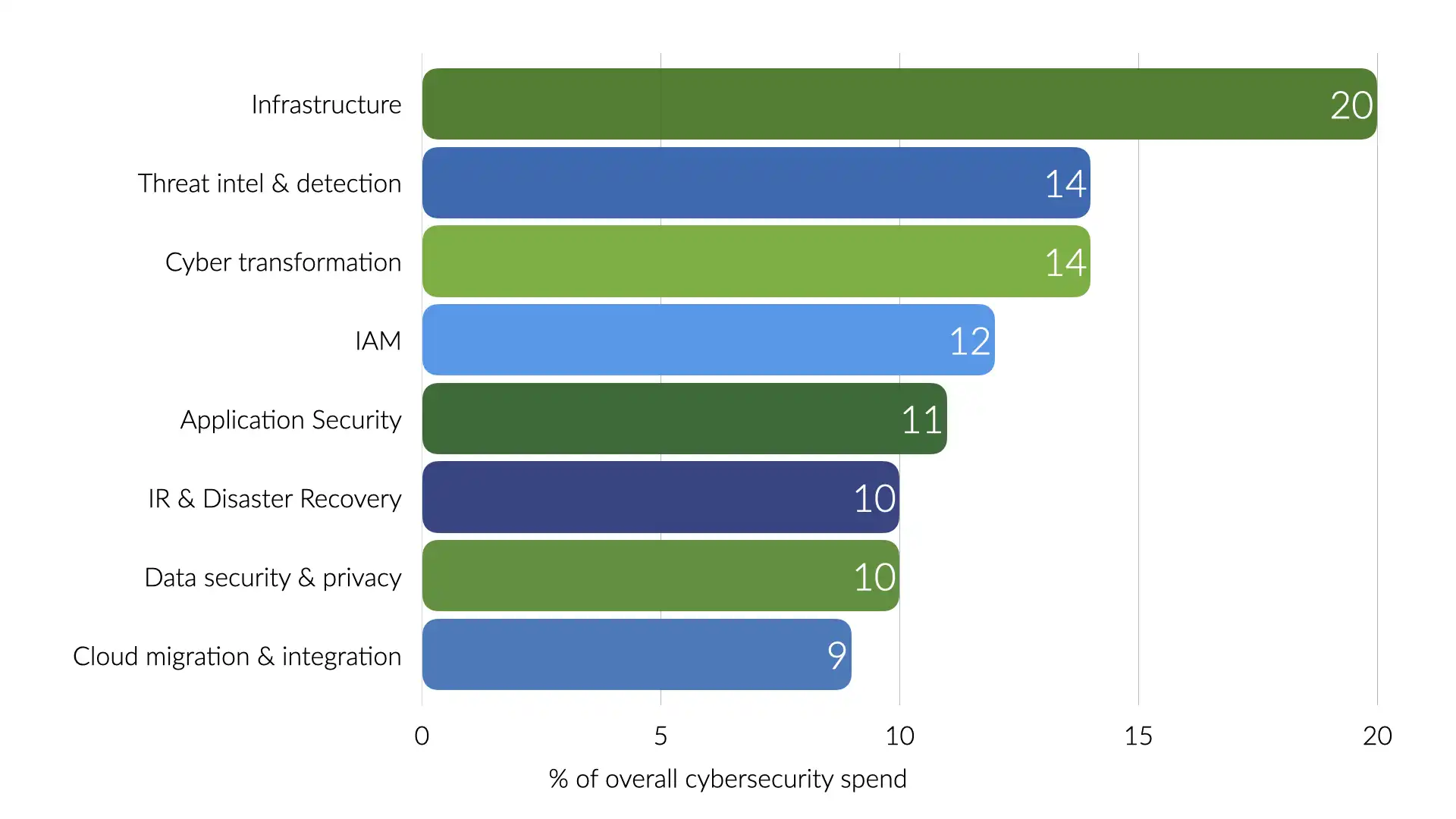

In 2023, VentureBeat conducted a survey and found that most organizations spend just shy of 10% of their IT budget on cybersecurity.

38% of that spend was on staff. That works out to 3.8% of the overall IT budget spent on security personnel.
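
The arithmetic behind that figure can be sketched in a few lines. This is only an illustration of the calculation: the two percentages come from the survey figures quoted above ("just shy of 10%" is rounded to 10% here), and the variable and function names are made up for this example.

```python
# Figures quoted from the survey above; names are illustrative only.
security_share_of_it_budget = 0.10    # "just shy of 10%", rounded for simplicity
staff_share_of_security_spend = 0.38  # portion of security spend going to staff


def staff_share_of_it_budget(security_share: float, staff_share: float) -> float:
    """Return the fraction of the overall IT budget spent on security staff."""
    return security_share * staff_share


share = staff_share_of_it_budget(
    security_share_of_it_budget, staff_share_of_security_spend
)
print(f"{share:.1%} of the overall IT budget goes to security personnel")
```

Swapping in your own organization's numbers is a quick way to see how your staffing spend compares.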

That sounds like a lot, but these are some of the most highly compensated individuals on staff. Good for those in the industry, still representative of a disproportionate ratio of security folks to the rest of the business.

The VentureBeat survey provides even more insights. Most of the security spending is going to infrastructure and threat intelligence and detection.

That loosely translates into outer perimeter controls and figuring out what's already causing issues within your systems. Very little directly into scaling up the security team or preventing security issues in the first place.

The result of all of this is a lot of security folks feeling burnt out. Security teams are overworked, constantly fighting fires and trying to answer why a significant chunk of the IT budget is being spent on simply not losing ground.

We should do better. Can we?

Organizational design

...or lack thereof

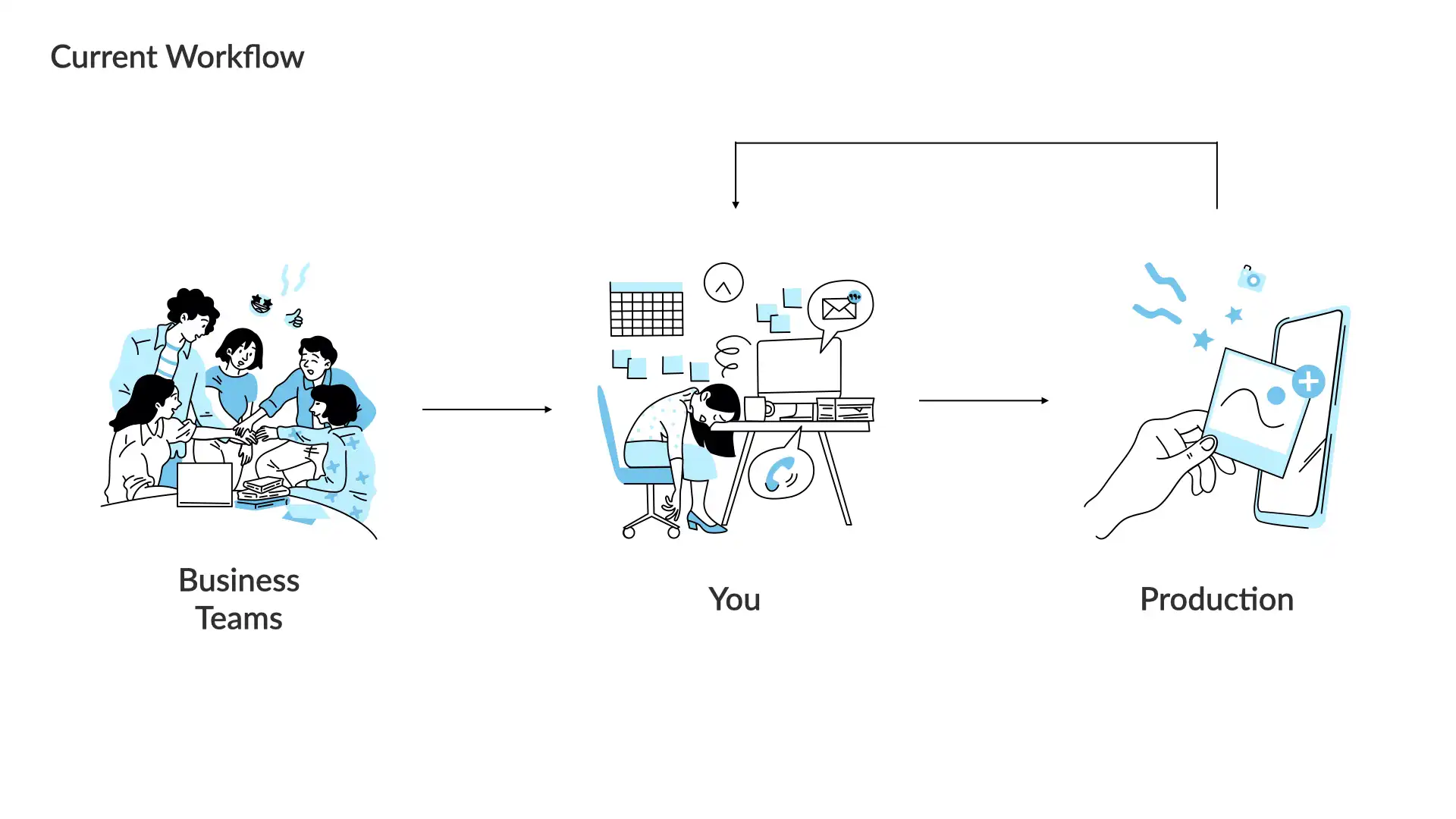

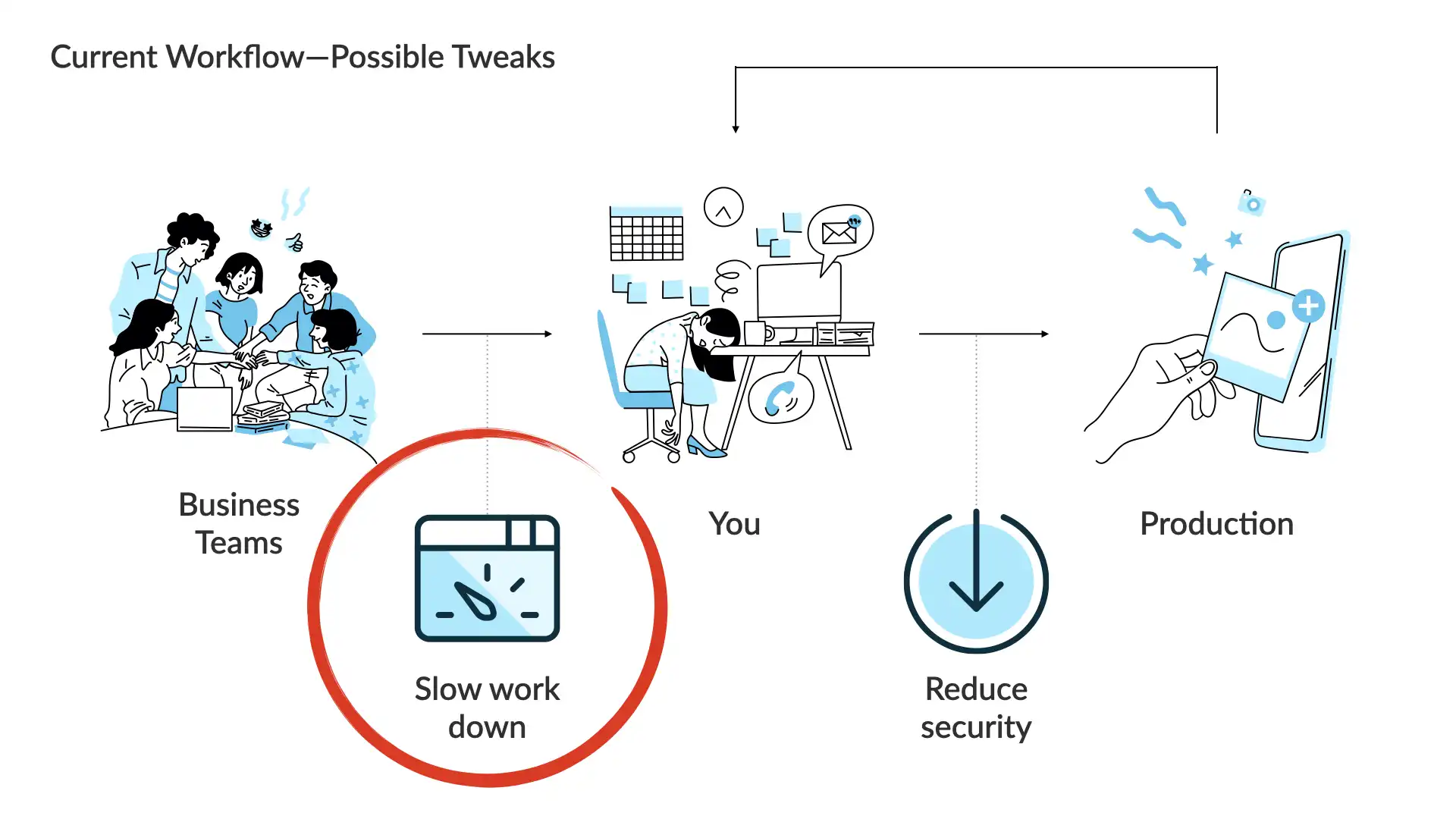

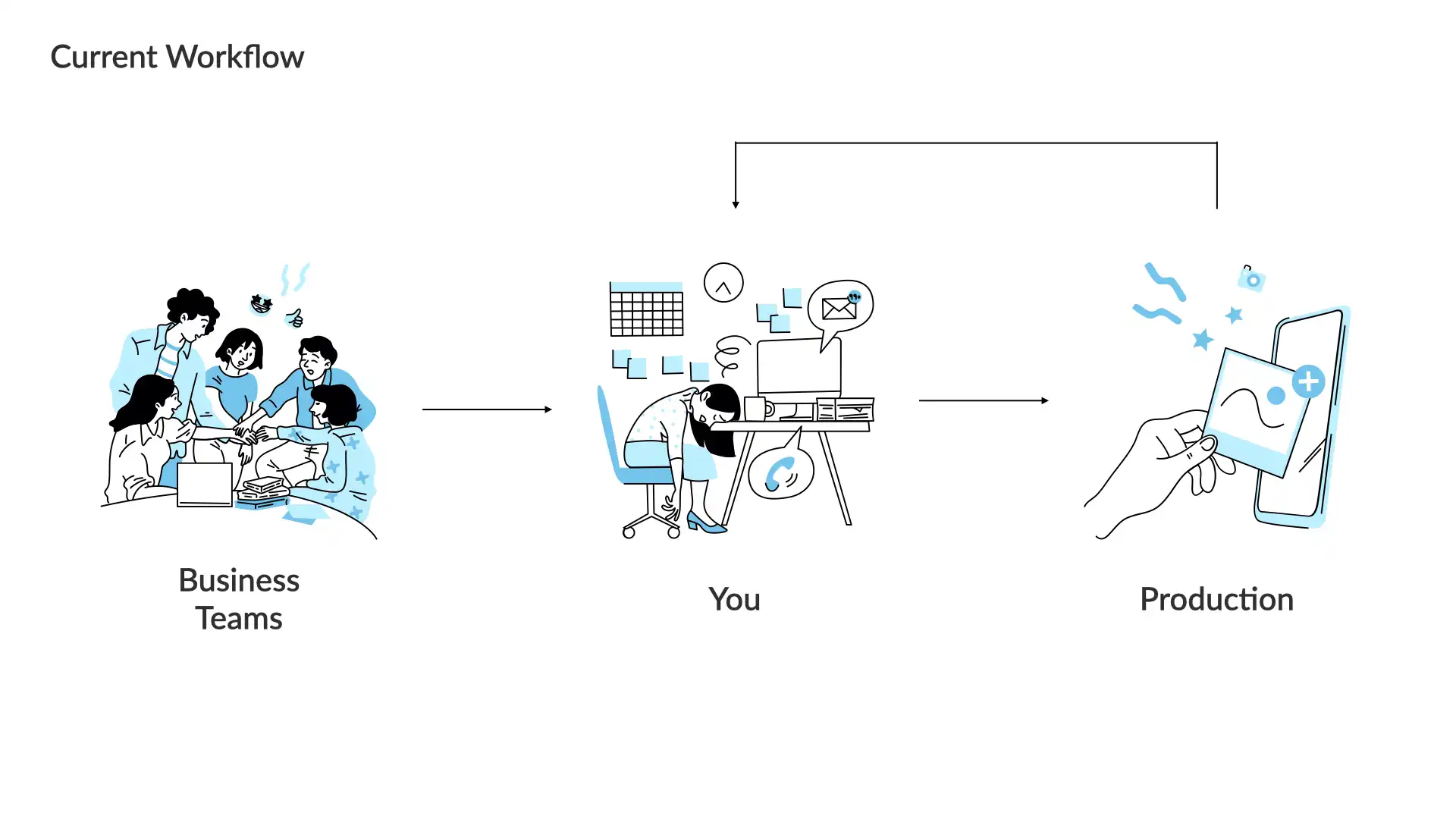

The current workflow for most security teams is simple.

A business team has built or bought something and they want to get it into production as quickly as possible. They do have business goals to meet after all.

You, the security person, are the gate they must pass before that happens.

This works-ish. Sadly, it leads to a lot of "hero" behaviour which prevents the actual challenge from being addressed and piles more pressure on the security team members.

The fundamental challenge comes back to that ratio. There are a very limited number of security team members and way, way more business teams.

Security is almost always the slow down or roadblock for their productivity...even though security is working at 100% or more of expected capacity.

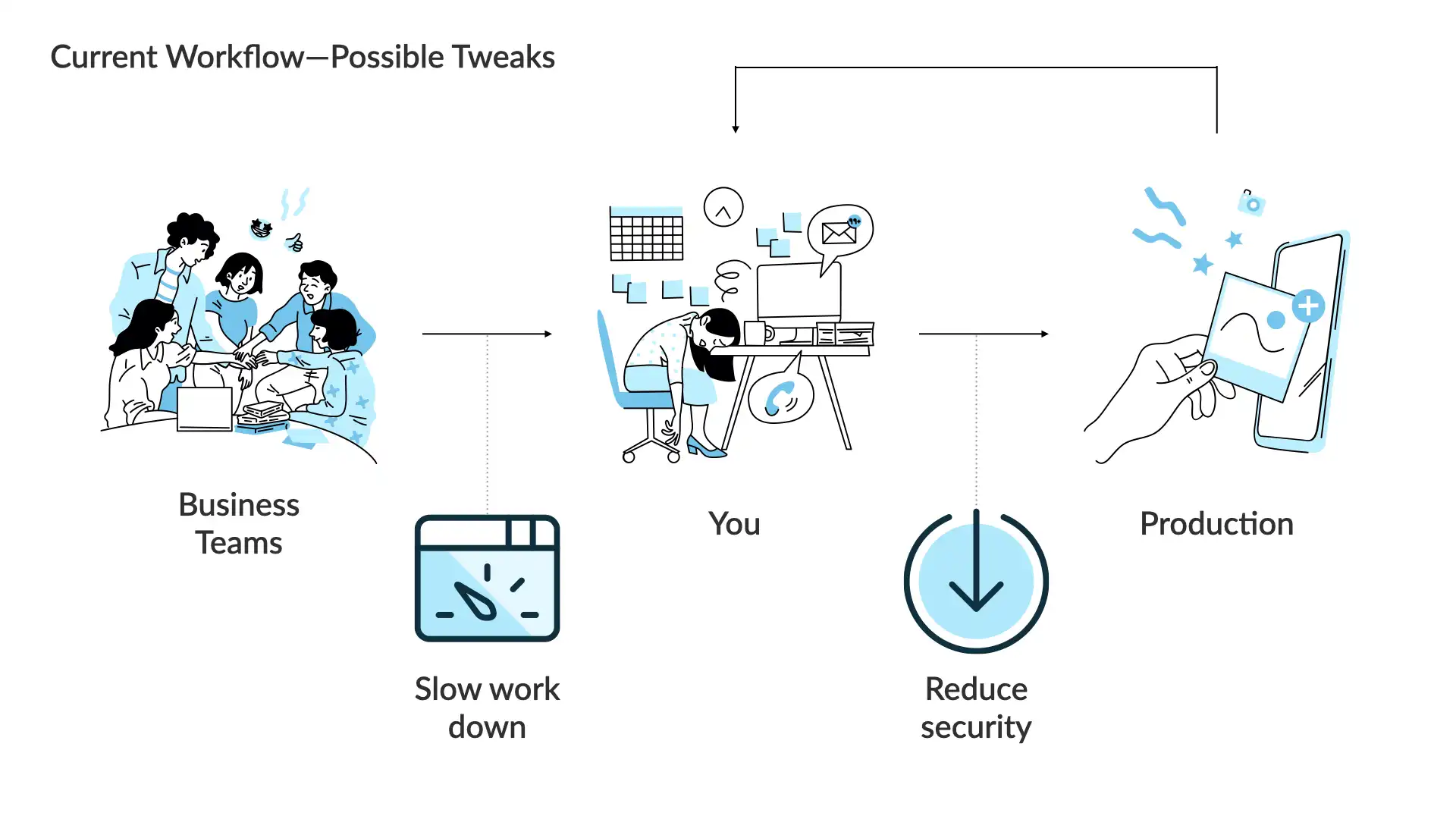

Keeping things at a high level, there are only 2 ways to smooth out this workflow.

You can slow down the incoming work.

or

You can reduce your security goals.

No security team should accept a reduced security posture as a matter of standard practice.

We need to continue to raise the strength and effectiveness of the security posture of our organizations.

We might be able to slow the incoming work down though...we'll come back to that in a few.

Now, you can add more folks to the security team. You can scale up the team to handle more work.

This can help.

But, hiring anyone is an ongoing expense (something about always wanting to be paid 😉) and it takes time for new team members to come up to speed.

And as we've already looked at, the ratio of security team members to the rest of the business is so disproportionate that it's unlikely you'd be able to get it down to anything reasonable to actually address these challenges.

This is not a path that will successfully solve this issue.

So, what approach will work?

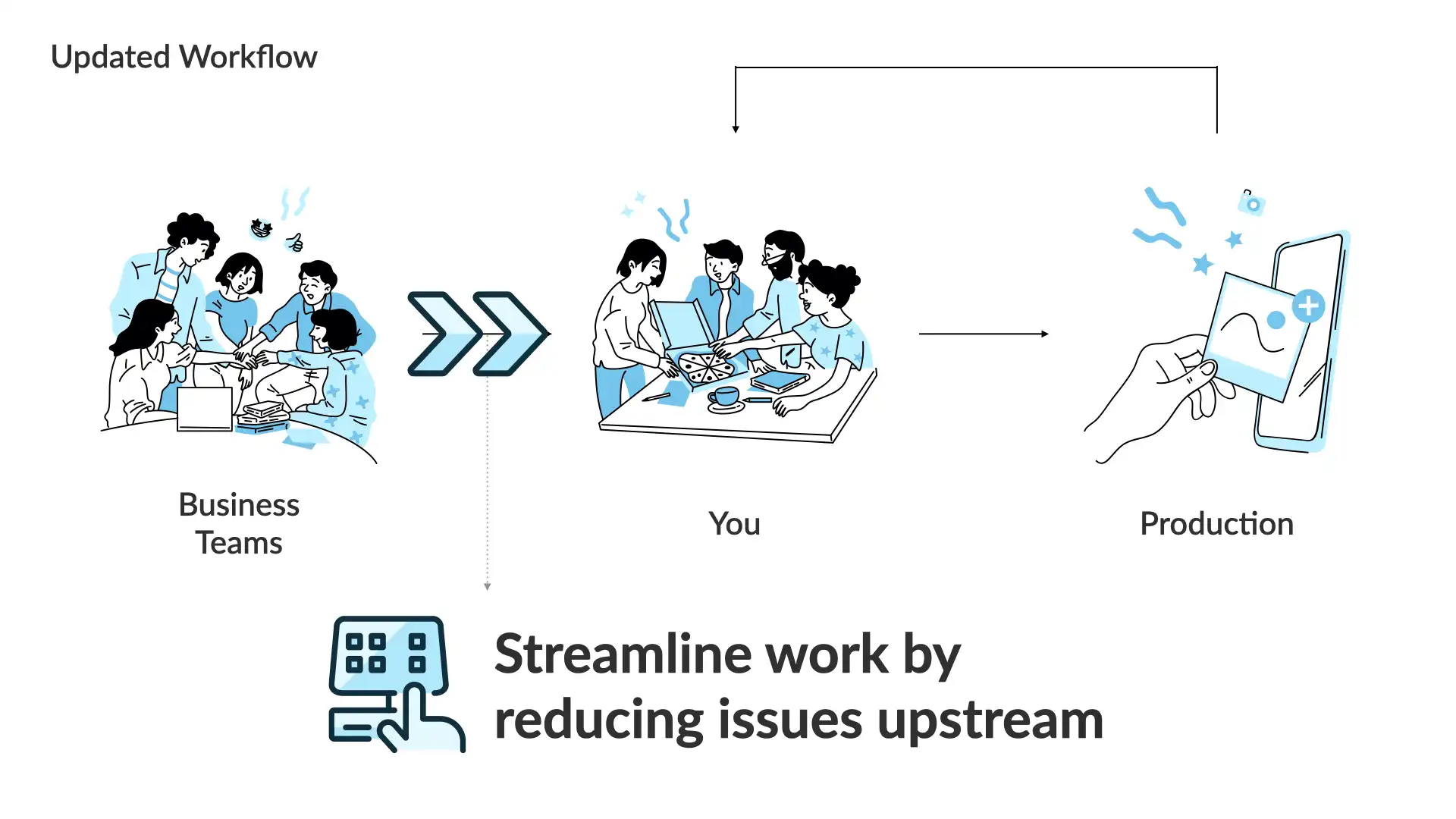

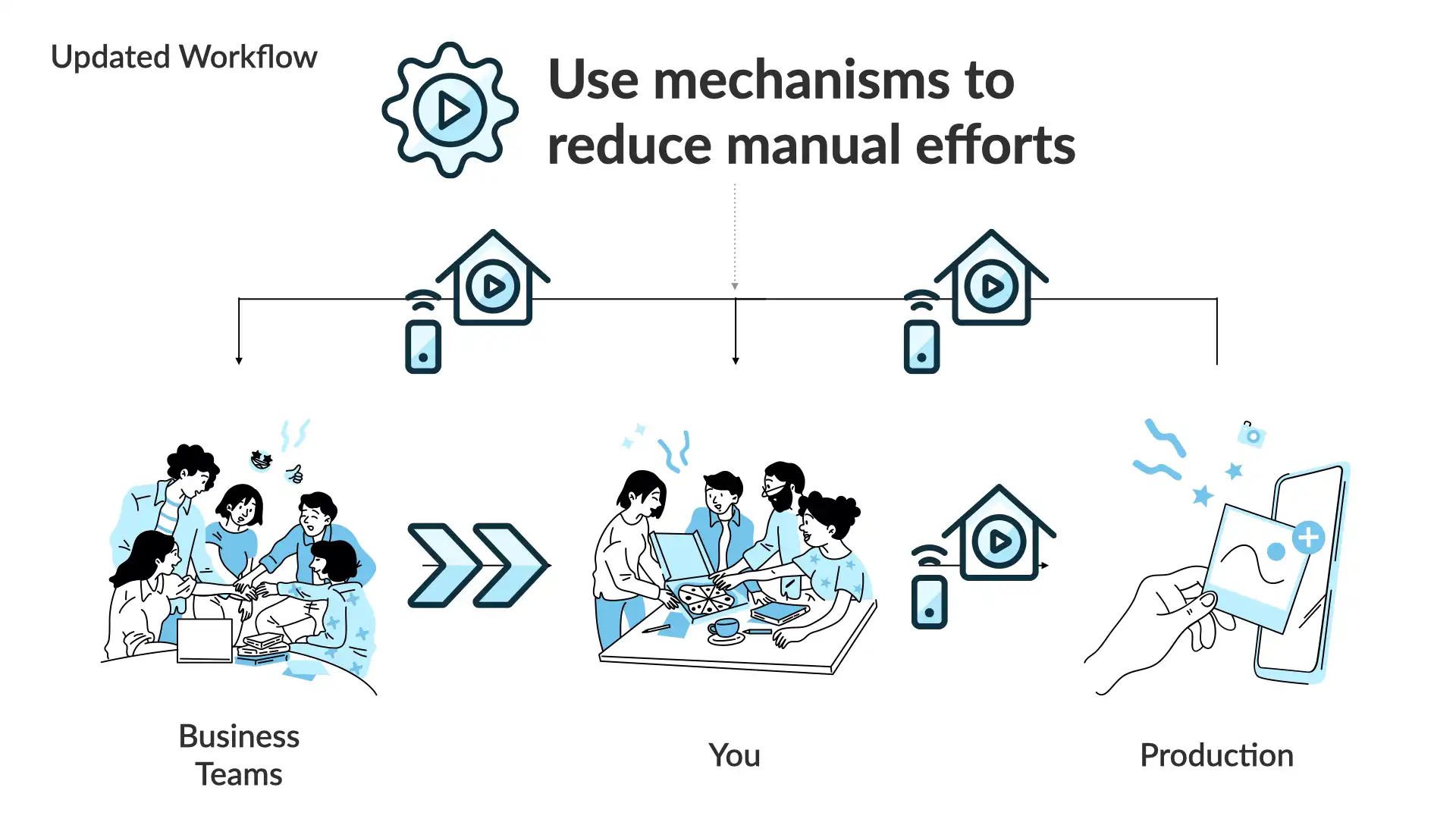

We—the security team—need to work with our business teams to reduce the issues upstream.

We need fewer security issues coming to us before systems are rolled out to production.

How do we do that?

Our general approach will be to use mechanisms to reduce our manual efforts.

A mechanism (in this context) means that we're going to try and create a tool of some sort—a process, an automation, etc.—and get folks using it, all while making sure it's delivering what we actually want.

What we don't want is more process and red tape. If something isn't serving the business' end goals, get rid of it!

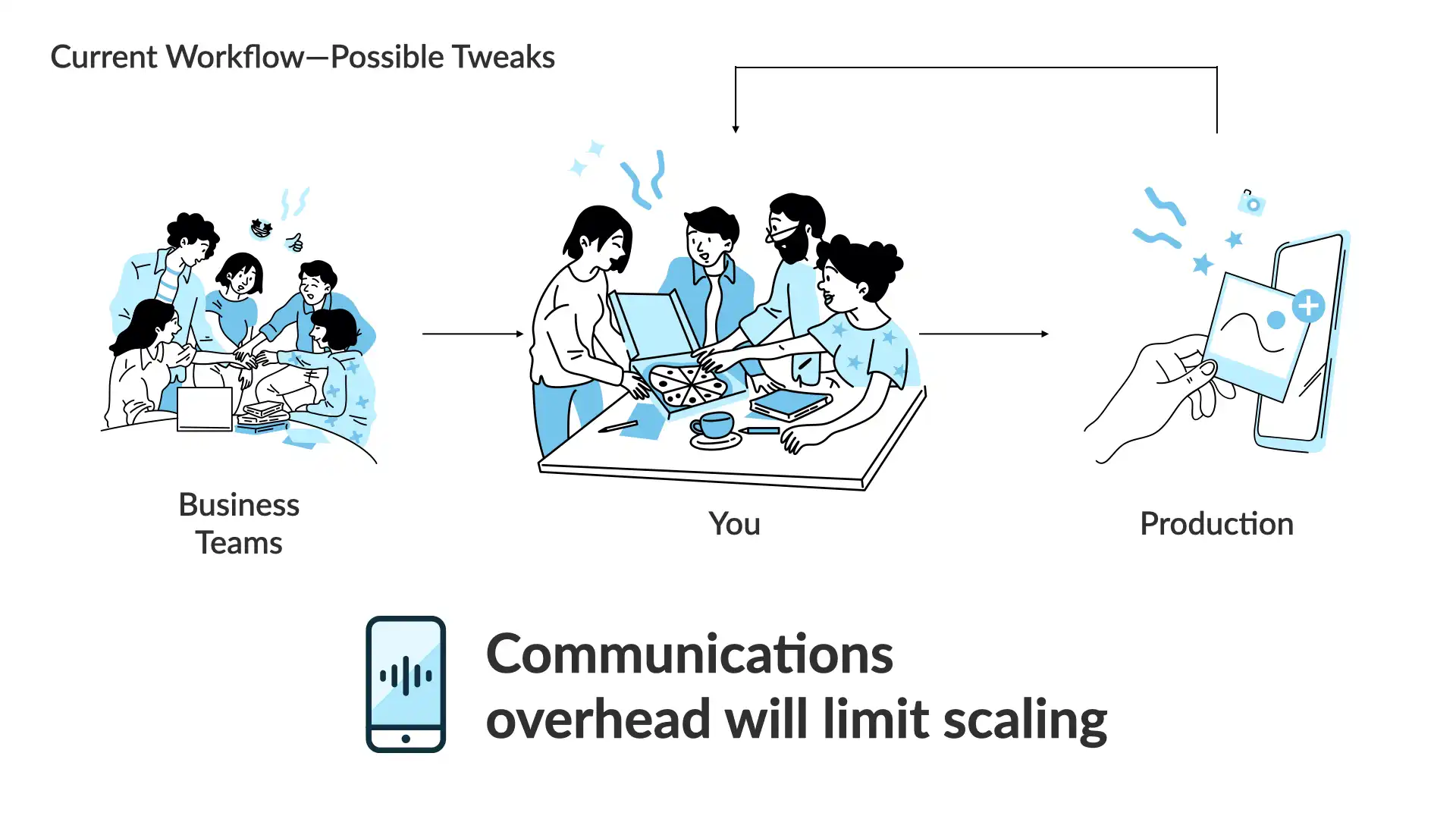

Mechanisms and automation

...sort of

There's a lot we could look at here, but for this talk, we're going to look at the communications side of things.

Can we change the way we communicate and reduce the amount of work our teams are receiving? Can we make it easier to communicate in a more productive way?

Yes, we usually lean into technology to solve problems. We eagerly roll out code and additional layers of systems to address issues as we come across them.

That's not necessarily a bad thing. But, more frequently than we'd like to admit, we just end up with more overhead and challenges that are harder to address because the systems we just deployed have added more constraints!

We're going to take a deeper look at a breach notification from here in Canada. Don't worry, this will be a positive example that we'll be examining to see if we can make some tweaks to improve it even further.

But let's start with a general template for a notification...

The formula for a breach notification (i.e., letting people know there was a security incident and they were affected) is very straightforward...at a high or conceptual level.

It is:

- What happened?

- What information was affected?

- What have we done in response to the breach?

- What does this mean for you?

- More information and how to make a complaint (with a regulator, etc.)

- Signed by a representative of the company
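
One way to turn that formula into a lightweight mechanism is a checklist your team can run against a draft. The sketch below is a hypothetical illustration, not an established tool: the `BreachNotification` class, its field names, and the `missing_sections` helper are all invented here to mirror the six items in the list above.

```python
from dataclasses import dataclass, fields


@dataclass
class BreachNotification:
    """One field per section of the notification formula (names are illustrative)."""
    what_happened: str = ""
    information_affected: str = ""
    response_actions: str = ""
    what_this_means_for_you: str = ""
    more_information: str = ""
    signed_by: str = ""


def missing_sections(draft: BreachNotification) -> list[str]:
    """Return the names of any sections that are still empty."""
    return [f.name for f in fields(draft) if not getattr(draft, f.name).strip()]


def render(draft: BreachNotification) -> str:
    """Join the completed sections, in order, into a single plain-text notice."""
    gaps = missing_sections(draft)
    if gaps:
        raise ValueError(f"draft is missing sections: {', '.join(gaps)}")
    return "\n\n".join(getattr(draft, f.name).strip() for f in fields(draft))


draft = BreachNotification(
    what_happened="In December 2020, TransLink was hacked.",
    information_affected="(placeholder) The data that was accessed.",
    response_actions="We brought in cybersecurity experts to help.",
    what_this_means_for_you="(placeholder) What the reader should do next.",
    more_information="(placeholder) Contact details and how to make a complaint.",
)
print(missing_sections(draft))
```

The point is not the code itself. A simple, shared structure means nobody has to remember the formula under pressure.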

Remember, we're not trying to blame anyone. We're trying to learn!

We're going to dive into a breach TransLink had in 2020. TransLink is responsible for the regional transit network in metro Vancouver.

They were breached in 2020 and the entire recovery and review process took 7 months. That includes the clean up and work with the privacy regulator. The initial incident response appeared to be quite quick.

Overall, I think their communications were good. When compared to a lot of security comms, they probably should be seen as excellent.

But, I'm a bit picky and I think TransLink could've made a couple of small tweaks to really knock it out of the park.

From the TransLink primary web page for this incident:

"

In December 2020, TransLink was the victim of a cyberattack. Upon detection, we took immediate action to shut down multiple computer systems as a protective measure and launched an investigation.

Over the course of the investigation, we worked tirelessly with cybersecurity experts to understand what happened and determine what information was unlawfully accessed. We also worked with law enforcement authorities and notified the Office of the Information and Privacy Commissioner for BC.

This investigation has been a complex and time-consuming process that took months to complete. It involved extensive analysis, the use of e-discovery tools, and manual data reviews.

The privacy review concluded in June 2021.

"

As you can see, that is a solid opening. However, it does fall into some very common traps. Let's make a couple of edits...

In December 2020, TransLink was the victim of a cyberattack. Upon detection, we took immediate action to shut down multiple computer systems as a protective measure and launched an investigation.

We worked tirelessly with cybersecurity experts to understand what happened and determine what information was unlawfully accessed. We also worked with law enforcement authorities and notified the Office of the Information and Privacy Commissioner for BC.

Here is what you need to know about your information.

This investigation has been a complex and time-consuming process that took months to complete. It involved extensive analysis, the use of e-discovery tools, and manual data reviews.

The privacy review concluded in June 2021.

Why those changes?

The original was too complicated, not empathetic, and it didn't set a shared context.

The small changes we made shift the opening to quickly state what happened, hint at the scale of the response effort, and then quickly dive into the number one thing the reader of the letter would want to know.

Of all the common traps the original fell into, the most egregious (yes, even in the context of a good communication, there can be things that are egregious!) is that it's written from the perspective of what the organization wants you to know about the situation, not what the reader wants or needs to know!

Yes, breach notifications and other security communications can be used to reduce damage to an organization's reputation. However, it's critical that you remember that both parties in this communication are victims.

The organization (TransLink in this case) was the victim of cybercrime. The intended readers of this letter were also victims of that same crime.

As long as the organization wasn't derelict in its care of the information, this post shouldn't be written with the tone of "it's not my fault!", but one that lands more along the lines of, "we are both impacted here, but let's start to fix this by focusing on you".

Let's go for a complete re-write. We'll start with a strong and direct opener written with the reader and their position in all of this top of mind.

"

In December 2020, TransLink was hacked. When we found this out, we worked as quickly as possible to protect your data.

"

Simple. Straight to the point. With the first sentence, the reader knows what this communication is about and what happened.

The second puts TransLink in a positive light and it's also—without all of the fancy terminology or long-winded explanation—an accurate description of what happened.

We continue...

"

We brought in cybersecurity experts to help. We also contacted law enforcement and the Office of the Information and Privacy Commissioner for BC.

"

This next section is primarily a regulatory requirement. They need to let the reader know that they've complied with the local privacy legislation.

But, we frame it here as a follow-up to the statement about working as quickly as possible to protect your data.

This way, it shows—in plain language—the effort that the organization went to in response to the breach.

The next line is critical and it's often missing from these types of notifications.

"

We’ve contacted the people whose data was accessed during the hack to help them.

"

Remember, the original text that we're rewriting was published on the TransLink website. It went out to everyone. That makes sense due to the scale of the breach and the nature of the organization. This agency is the regional transit authority and its work impacts everyone in the area.

We add this line as a direct answer to the question in every reader's mind, "Was my data breached?". This direct statement answers that near the top, helping the reader focus on the rest of the message.

We follow that up with an explanation of what the reader can find on this page.

"

This webpage contains information about what happened. It lists what data was accessed and what steps we’re taking to try and make sure this doesn’t happen again.

"

And finally, we close this section with a catch-all to help answer any questions the reader may have after reading the rest of the page. This may be implied, but by stating it, the reader is reminded of the dynamic and that the organization is trying to help reduce the overall risk and any potential harms that may come from the breach.

"

If you have any questions after reading this information, we’ve set up a few different ways to get in touch with us directly. Those methods are listed at the bottom of this page.

"

Again, the communication from TransLink during this incident was great. But, with a few small tweaks, I think we've improved it to focus on what matters most to their target audience.

Our updated version heads off a lot of questions by answering them directly. We also reduced the complexity of the writing, making the text easier to read. We've dropped the reading level from about second-year university to middle school (as per the Gunning fog index). That makes the entire text much more accessible.
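For reference, the Gunning fog index estimates the years of schooling a reader needs to follow a passage: 0.4 × (average words per sentence + percentage of words with three or more syllables). Here's a minimal sketch in Python, assuming a rough vowel-group heuristic for counting syllables. Real readability tools use more careful rules, so treat the scores as approximate. The two sample sentences are hypothetical and are not taken from the TransLink notice.

```python
import re


def count_syllables(word: str) -> int:
    """Rough estimate (an assumption): count groups of consecutive vowels."""
    groups = re.findall(r"[aeiouy]+", word.lower())
    return max(1, len(groups))


def gunning_fog(text: str) -> float:
    """Gunning fog index: 0.4 * (words per sentence + percent complex words)."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        return 0.0
    # "Complex" words are those with three or more syllables.
    complex_words = [w for w in words if count_syllables(w) >= 3]
    avg_sentence_length = len(words) / len(sentences)
    percent_complex = 100 * len(complex_words) / len(words)
    return 0.4 * (avg_sentence_length + percent_complex)


# Hypothetical before/after sentences for comparison.
jargon = "The organization experienced unauthorized exfiltration of personally identifiable information."
plain = "A hacker broke in and took data. We are working to fix it."

jargon_score = gunning_fog(jargon)
plain_score = gunning_fog(plain)
```

Running your own drafts through a check like this before you hit send is a quick way to see whether a rewrite actually got simpler.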

This approach should reduce the number of inbound requests to the organization. And it's an approach you can use internally to do the same for your team.

Clear communication can reduce your workload.

Let's look at another positive example. This one is from CISA, the Cybersecurity and Infrastructure Security Agency in the US. CISA is the national coordinator for critical infrastructure and resilience in the United States and often acts as a cybersecurity centre of excellence for their public service.

We're going to dig into their Log4j vulnerability guidance page. They got this page up quickly when Log4j went public and used it as the single source of truth for the issue. They updated it repeatedly as information about the vulnerability came to light and made sure that the page was as comprehensive as possible.

Here's a section of the CISA page that we'll be looking at. It's solid.

But, I do want to point out one approach that may create challenges for the intended audience...

Each of the highlighted passages is a technical term or industry-specific language.

That's not necessarily a bad thing. CISA has a specific target audience in mind: security experts.

However, given their position within the US public service, they are also going to have a lot of general IT folks and other various interested folks reading this too.

The question is, can we reduce the specific language without reducing the effectiveness of the writing or the technical details?

We won't go through each term point by point, but here's a quick example of what we could swap out:

- "active, widespread exploitation" => "attackers are currently using this"

- "unauthenticated remote actor" => "attackers don't need to log in to use this successfully over the internet"

Yes, sometimes a longer sentence is a clearer one. When in doubt, a longer sentence with fewer niche terms and more straightforward language is probably going to be more effective.

This page also needed more context. While it covers a specific vulnerability, that vulnerability has a wide-ranging impact that cries out for more explanation.

The second paragraph, with "...is very broadly used in a variety...", doesn't provide enough context. Something like this might've been more effective: "Log4j is a key building block of a lot of software and most people are unaware their systems are using it. It helps developers write log information that's helpful for troubleshooting. That's why it is a part of a lot of unexpected systems."

Last example, again a positive one.

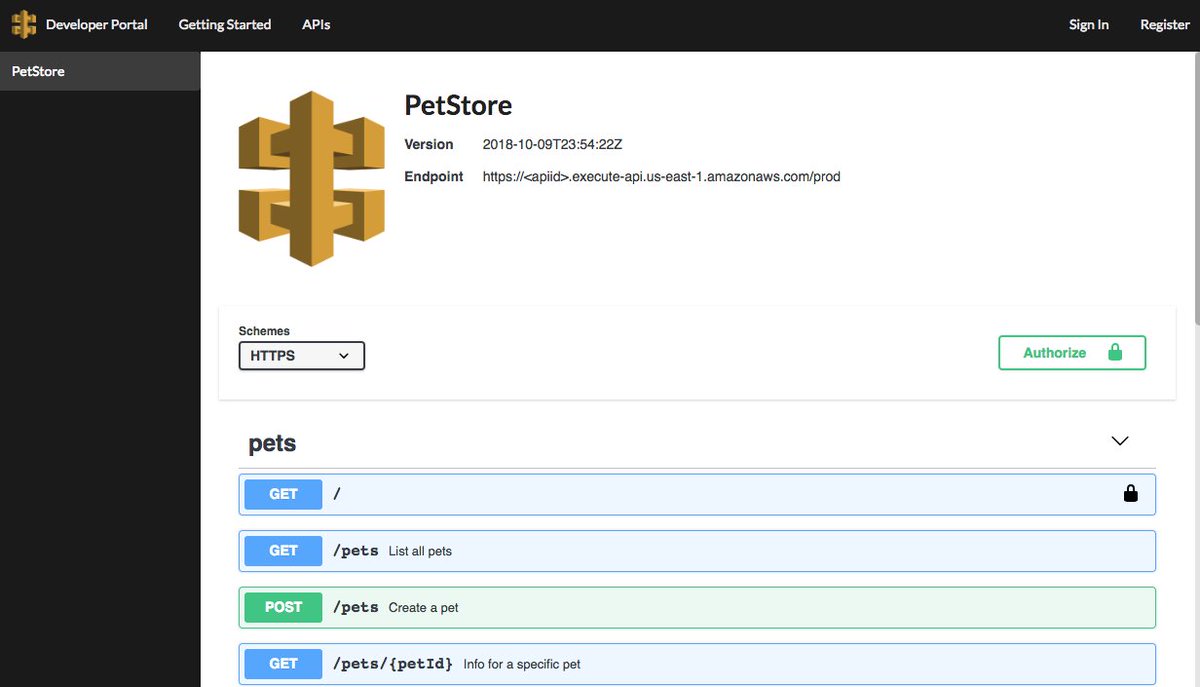

This time, we'll look at an open source project called Prowler. This is "an open-source security tool designed to assess and enforce security best practices across AWS, Azure, Google Cloud, and Kubernetes".

It's a great project and helps a lot of organizations improve their security posture.

In this example, we're going to look at a specific detection from the platform and how it aims to help developers and security folks avoid a security issue.

Here's the detection information in full. It's typically delivered as a JSON object in the platform or teams will route these to Slack or some other system where they are typically working.

This is a solid detection. The description is crystal clear. The risk is well constructed and the recommendation isn't too bad.

But two things jump out at me here.

The first is the opening sentence of the risk, "The use of a hard-coded password increases the possibility of password guessing." That doesn't accurately convey the level of risk.

How much does this increase the possibility of the password being guessed? Is that actually the case with this detection? Why is this worth the time to fix?

The second challenge is the recommended fix. Sure, AWS Secrets Manager could help address the issue. But are there other approaches that would work here? Are there other secrets managers that would work?

Again, the original is solid.

But if it provided more of the why in the risk it would be more useful.

"Hard-coded passwords can be stolen by attackers or accidentally exposed in a source code repository. Avoid this pattern if at all possible, as attackers can easily compromise the account the password has access to."

Similarly, the recommendation can be expanded to help the recipient find the best solution for their situation.

"Using a tool to manage secrets, like AWS Secrets Manager, keeps passwords and other secrets out of your code. This pattern makes it easier to update that information (e.g., change the password), while keeping it more secure as the function requests the password only when it's needed."

A couple of small adjustments and we've reduced the number of dots the recipient is required to connect!
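To make the recommended pattern concrete, here's a minimal sketch in Python of a function that asks a secrets manager for the password only when it needs it. The helper `get_db_password`, the `FakeSecretsClient` stand-in, and the secret name `prod/app/db-password` are all hypothetical. The `get_secret_value(SecretId=...)` call mirrors the boto3 Secrets Manager client, and other secrets managers offer an equivalent lookup.

```python
def get_db_password(secrets_client, secret_id: str) -> str:
    """Fetch the password at call time instead of hard-coding it.

    `secrets_client` is any object exposing a boto3-style
    `get_secret_value(SecretId=...)` method, which keeps this easy to test.
    This sketch assumes the secret is stored as a string.
    """
    response = secrets_client.get_secret_value(SecretId=secret_id)
    return response["SecretString"]


class FakeSecretsClient:
    """Stand-in for a real client so the sketch runs without AWS access."""

    def __init__(self, secrets: dict):
        self._secrets = secrets

    def get_secret_value(self, SecretId: str) -> dict:
        return {"SecretString": self._secrets[SecretId]}


# Hypothetical secret name and value. In a real function you would pass
# boto3.client("secretsmanager") instead of the fake client.
client = FakeSecretsClient({"prod/app/db-password": "example-only"})
password = get_db_password(client, "prod/app/db-password")
```

Nothing in the source code reveals the password, and rotating it means updating the secrets manager rather than redeploying the function.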

As we've seen in the examples we've discussed (and again, they are all positive examples!), we can make some small adjustments to our approach to communication to help everyone make better security decisions and help reduce the incoming requests to our team.

For communications:

- Keep it simple

- Focus on the reader

- Create shared context

- Be empathetic

Working upstream

We've talked about communications with an eye to how clearer communications can reduce incoming requests to your security team.

We're going to take that a step further and talk about education. One gap most security teams have today is a failure to help the rest of the business understand how to prevent security issues.

I'm not talking about security awareness training (don't even get me started on that) or a patch management process. I'm talking about genuinely investing the time required to help folks outside of the security team understand how security-first thinking can help them.

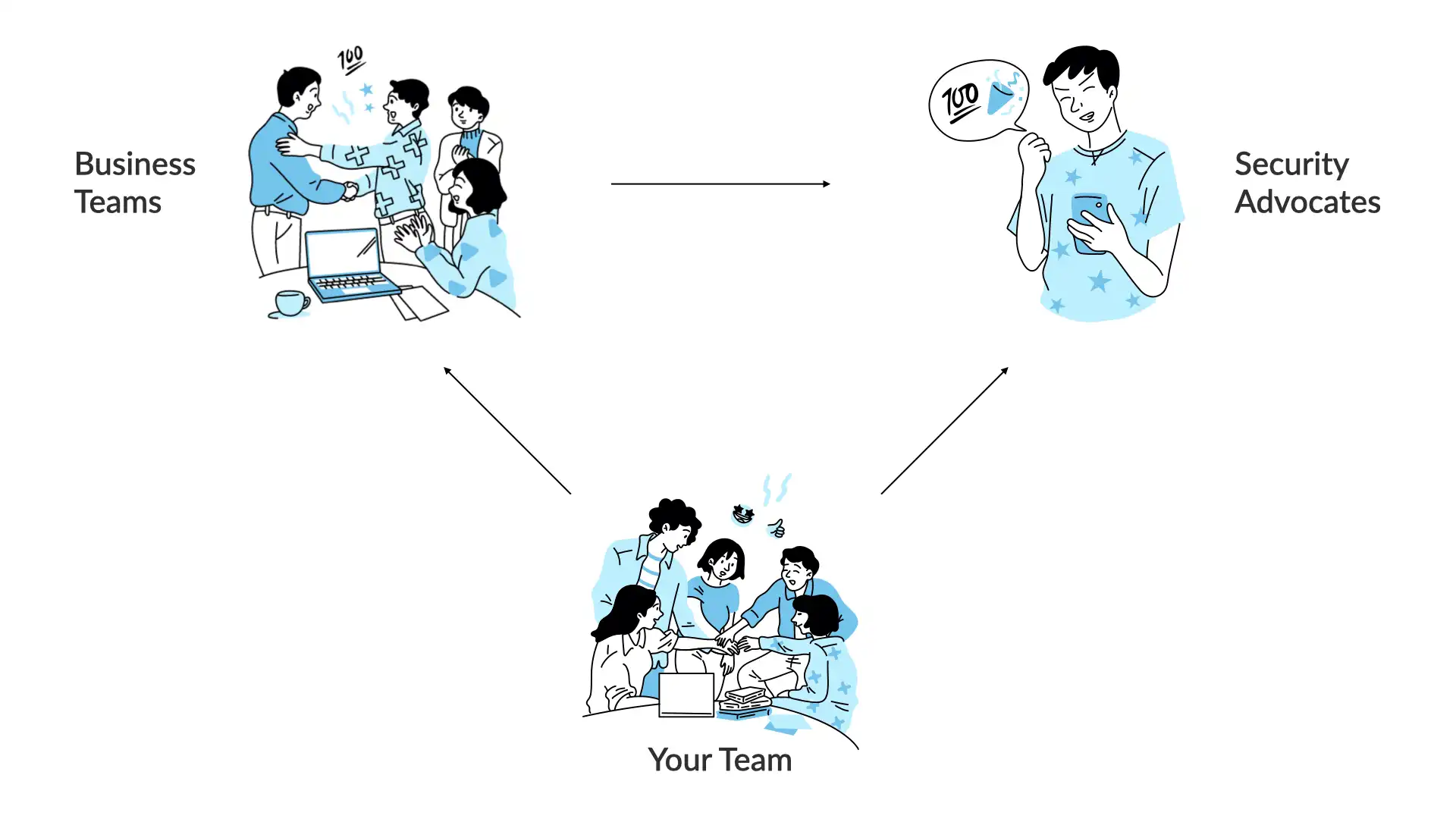

Your team works regularly with a number of business teams.

As we discussed in the intro for this talk, that ratio is heavily weighted towards the business teams. You can't keep up with the work coming from all of the different business teams.

One way to help with this is to recruit other folks within the organization to advocate for more security-first or security-focused decisions.

Programs that help build this type of internal community go by a few different names (Security Champions, Security Guardians, etc.). For simplicity, we'll call them "Security Advocates". Folks in this group, whether "officially" recognized or not, are the people that other teams lean on for security help.

Most organizations have folks filling these types of roles for a variety of specializations. Whether it's usability, performance, accessibility, a specific framework, data analysis, etc., there's always that "go-to" for a certain topic.

Even when you don't have a specific program to nurture and expand this community, this type of dynamic still manages to surface. Making it an actual recognized effort has a lot of benefits. The foremost is that you can track your efforts and invest (time, money, etc.) where it's having the biggest impact.

Once you've identified these folks, you can start to shift the dynamic between your team and the business teams.

Even if you don't identify these advocates, you should try to shift the dynamic between the security team and the business teams.

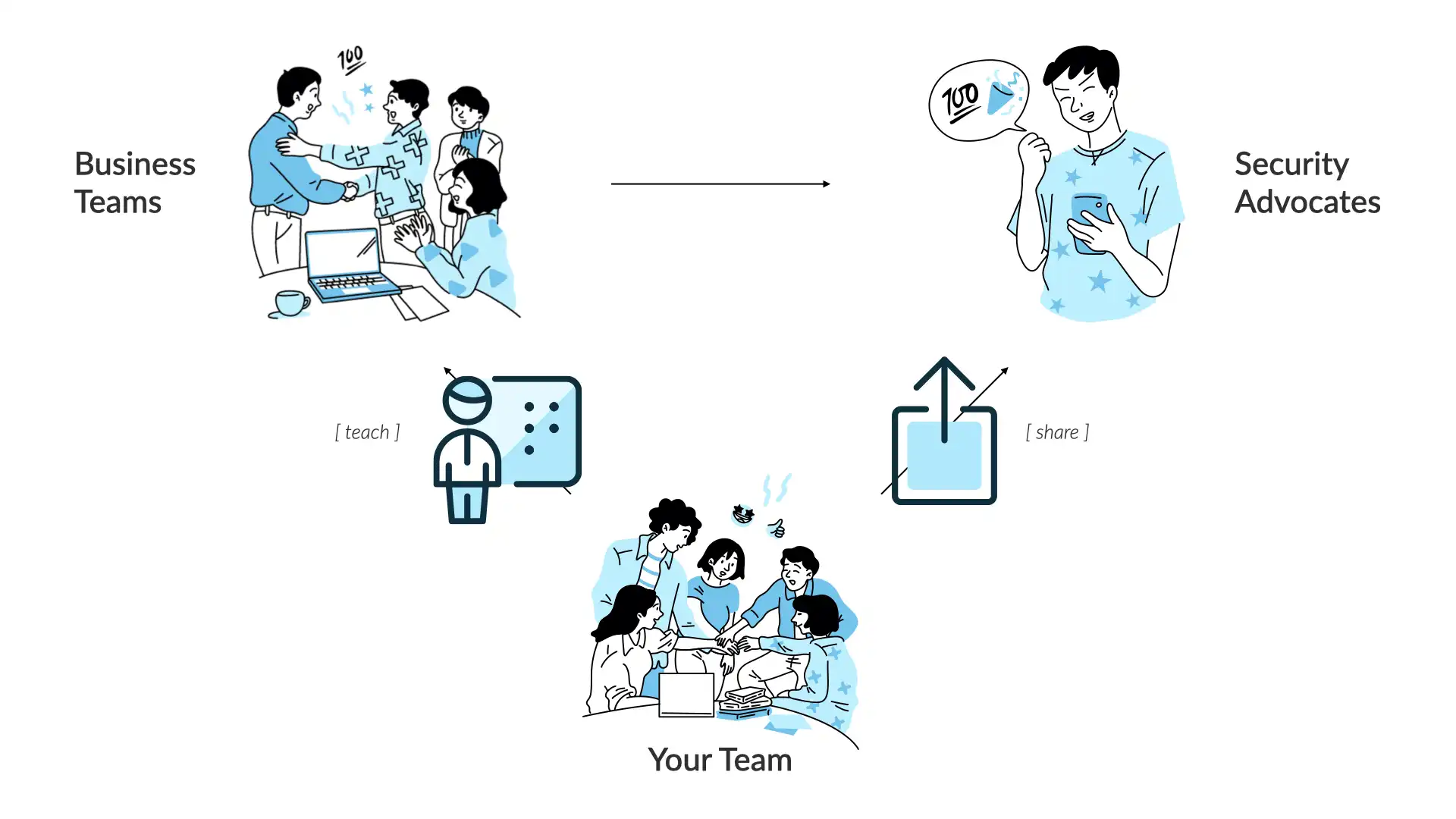

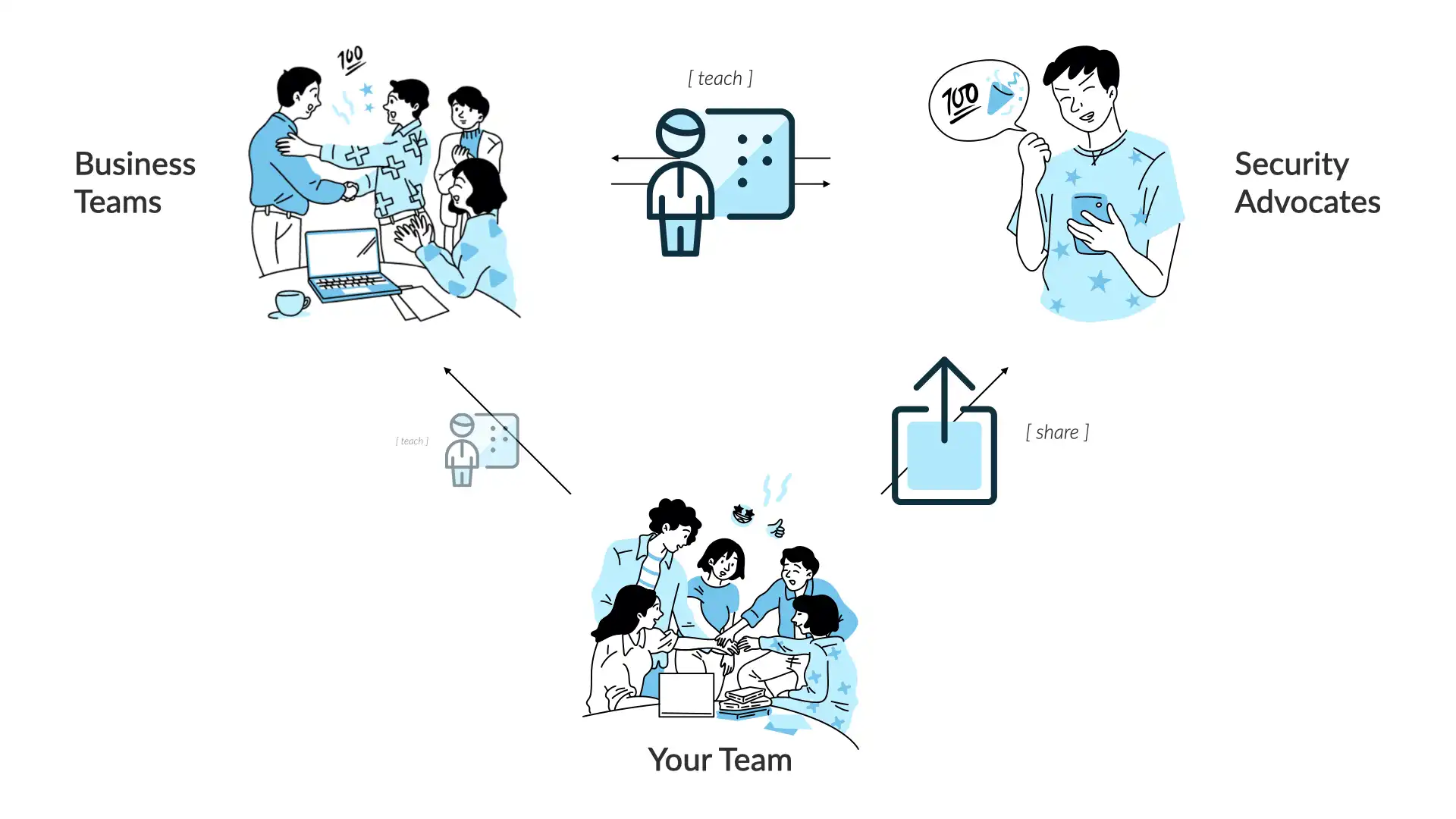

Your goal as a security team should be to teach the business teams about security as often as possible. With few exceptions, you should evolve your current workflows to move as much of that work to the business teams as possible.

Now, I know what you're thinking. Why would other teams take on our work? Why would we want to cede these responsibilities to those teams? What are we supposed to do?

For your work, don't worry. There is and will always be more than enough security work to go around. 🤦

For the business teams, the advantage is easy to understand. They are best positioned to understand the full context of the risk decision (what are the risks of this new feature/solution/product?). Understanding how security can help them meet their business goals helps them make better decisions. That improvement reduces the time it takes to get things out the door, so they meet their goals more quickly.

Remember, this is not a complete move of security decisions to the business team. The goal of this effort is to move the decisions that are best made by an informed and educated business team to that team. The security team should be contributing to organization-wide challenges and cross-team risks.

As these efforts mature, your team will do less teaching and more sharing with the security advocates. They in turn will take on more of the teaching role.

This can happen organically. But in each case where I've seen this type of effort succeed, it's been through a well understood and funded program.

That can mean any number of things, but it's common to have some sort of incentive structure for the advocates. Whether that's perks or specific compensation rewards or a faster path to advancement. Find what works for your organization's culture and make sure that this type of program is set up so that everyone involved sees the benefit.

You may see this and think it'll never work for your organization. Business teams don't care enough about security to give it this type of prioritization. The cooperation you see today is only because teams have to deal with security (whether by regulation or policy).

When I've discussed that idea with executives around the world, I see a common problem. Most people think of security as work to stop bad things from happening. While that's part of it, that's only a fraction of the work under the security umbrella.

The goal of security is simple. It's to make sure that what you build works as intended...and only as intended.

That's a positive goal. Stopping bad things is a negative goal and it's impossible to actually track that. The positive goal is easier to get people to rally around.

When you understand that security is trying to make sure that the work a team is doing works and only does what it's supposed to, now everyone understands they are working towards the same goal!

Security and the business have the same goals.

They all want:

- Low-risk changes to production

- Resilient systems

- Visibility into their data and the processes they use

To meet those goals, you need to provide the why.

Why does this request matter? Why is this risk an issue?

If you help people understand the why, they can make better decisions moving forward. We want people to think through each situation that comes up. Technology is too complicated to map out each potential challenge beforehand.

If people understand the context of a requirement, they can make better decisions. As the expert, it's up to you to provide that understanding.

Remember that you are the security expert. No one shares your context. You have a broad understanding of the threat landscape, the controls within your organization, and the overall risks the business is trying to balance.

The business teams are just trying to get their work done! They have goals they are working towards and are trying to navigate the various systems and processes to the best of their abilities. They are experts in something else entirely and should not be expected to be or become security experts.

Your goal is to make security frictionless. Or maybe a better callout is that your goal is to use friction judiciously, helping other people make better decisions.

How can you start? Here are a few ideas for some simple techniques to get the ball rolling:

- Open office hours

- Review design docs and ask questions

- Record quick video explainers for security questions

- Join team channels and learn!

Let's take a look at how the business team and the security team approach the same issue.

There was a vulnerability in the popular Django Python framework in 2022. This framework is used to help build web apps and APIs. The vulnerability was an SQL injection (sending bad database requests to generate unexpected results) that could expose data that shouldn't be available.

This was an important issue to fix, but not an emergency. Think weeks, not days.

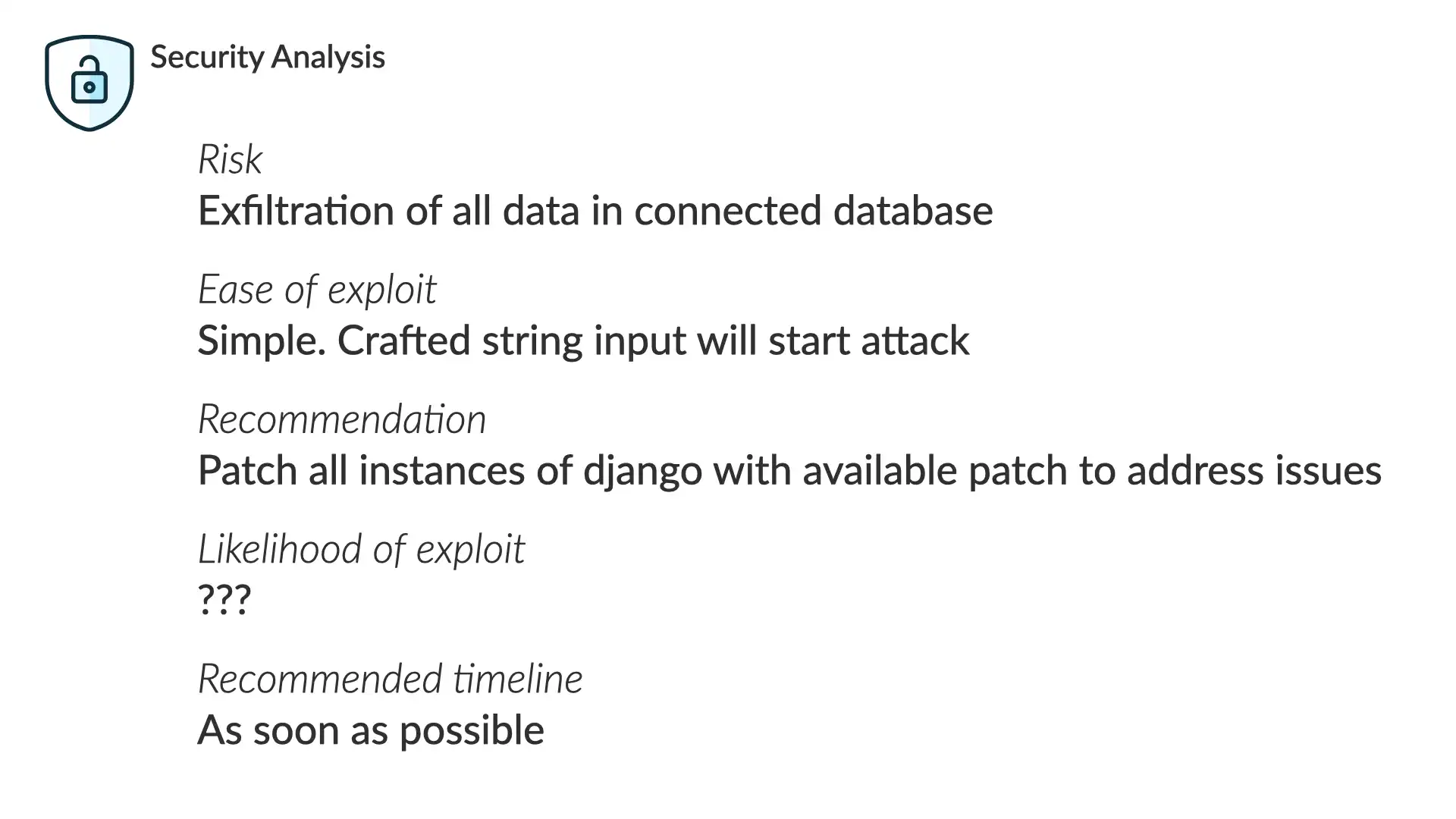

If we put on our security hat, we see that...

Risk

Exfiltration of all data in connected database

Ease of exploit

Simple. Crafted string input will start attack

Recommendation

Patch all instances of django with available patch to address issues

Likelihood of exploit

???

Recommended timeline

As soon as possible

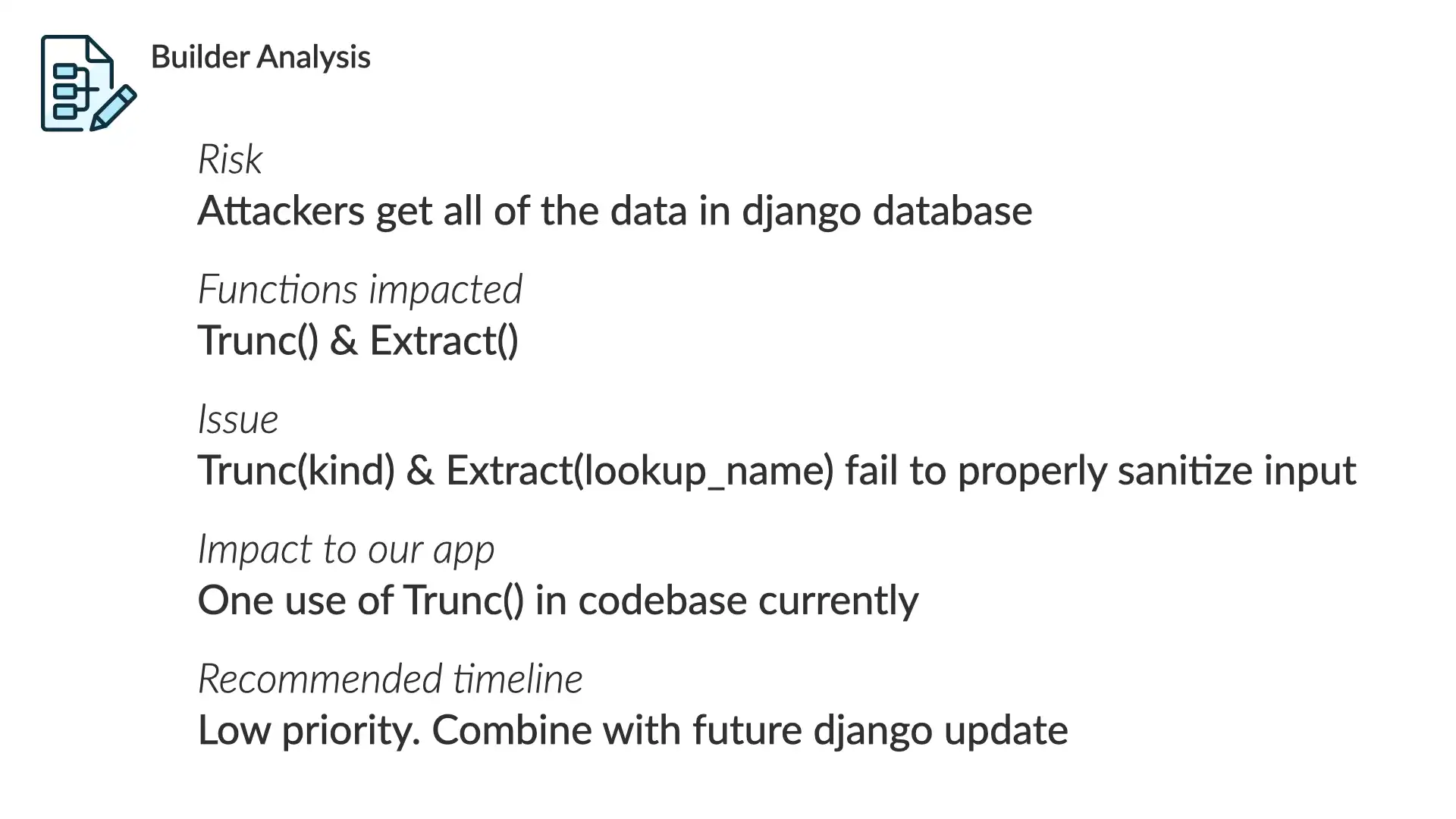

With our builder/business hat on...

Risk

Attackers get all of the data in the django database

Functions impacted

Trunc() & Extract()

Issue

Trunc(kind) & Extract(lookup_name) fail to properly sanitize input

Impact to our app

One use of Trunc() in codebase currently

Recommended timelines

Low priority. Combine with future Django updates
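To illustrate the "fail to properly sanitize input" issue from the builder's side, here's a minimal sketch in Python of a check a team could add around its one use of `Trunc()` while the patch is scheduled: only pass known-good values as `kind`. The helper name `safe_trunc_kind` and the usage in the comment are hypothetical, the allowlist assumes the `kind` values listed in Django's documentation for date and datetime truncation, and this complements the patch rather than replacing it.

```python
# Assumed set of valid `kind` values for Trunc(), per Django's docs.
ALLOWED_TRUNC_KINDS = frozenset({
    "year", "quarter", "month", "week", "day",
    "date", "time", "hour", "minute", "second",
})


def safe_trunc_kind(user_value: str) -> str:
    """Return a normalized `kind`, or raise if it is not on the allowlist."""
    kind = user_value.strip().lower()
    if kind not in ALLOWED_TRUNC_KINDS:
        raise ValueError(f"Unsupported truncation kind: {user_value!r}")
    return kind


# Hypothetical usage inside a Django view:
#   kind = safe_trunc_kind(request.GET["kind"])
#   queryset.annotate(period=Trunc("created_at", kind))
```

A check like this is the kind of thing a business team can own once they understand the why behind the advisory.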

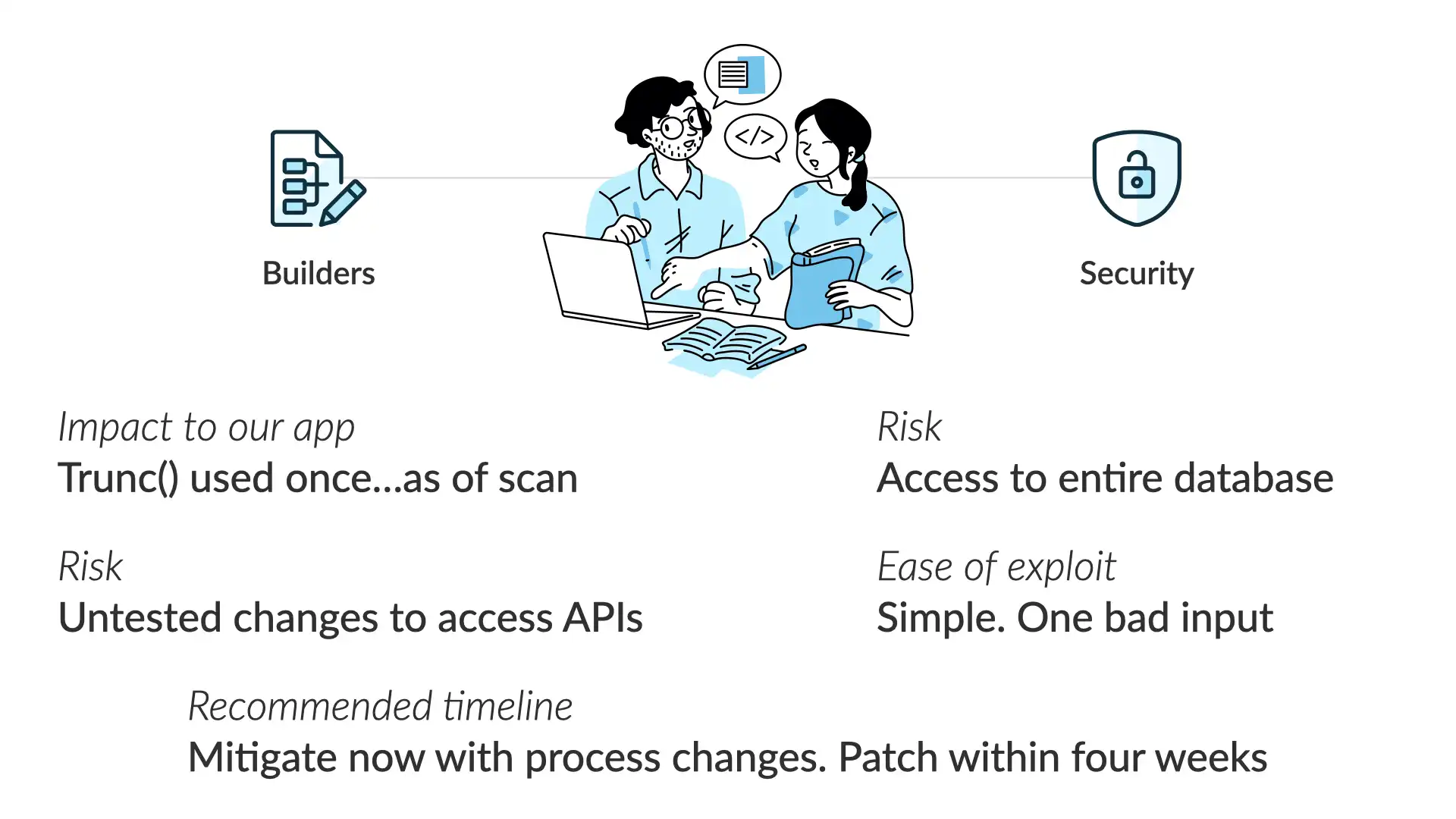

If we line up these perspectives—by working together as we've discussed—here's where we end up:

Impact to our app

Trunc() used once...as of our last code scan

Risk

Access to the entire database

Risk of the fix

Untested changes to access APIs

Ease of exploit

Simple. One bad input